Why Your Stadium WiFi Grinds to a Halt (And How to Fix It)

This technical guide examines the root cause of stadium WiFi congestion: the simultaneous background chatter of 50,000 devices loading programmatic advertisements and telemetry. It provides an architectural blueprint for deploying edge DNS filtering as the primary mitigation strategy. Written for IT Directors, CTOs, and Network Architects, it offers implementation guidance, case studies, and ROI frameworks to help venue operators reclaim bandwidth and deliver high-performance connectivity at scale.

- Executive Summary

- Technical Deep-Dive: The Anatomy of High-Density Congestion

- The Background Traffic Avalanche

- Three Failure Modes at Scale

- Implementation Guide: Edge DNS Filtering Architecture

- Architectural Blueprint

- Deployment Steps

- Case Studies

- Case Study 1: 60,000-Seat Football Stadium, UK

- Case Study 2: International Conference Centre, Hospitality Sector

- Best Practices & Standards

- Troubleshooting & Risk Mitigation

- False Positives

- Captive Portal Bypass via Background Traffic

- DoH Bypass

- Offline Map and Navigation Services

- ROI & Business Impact

Executive Summary

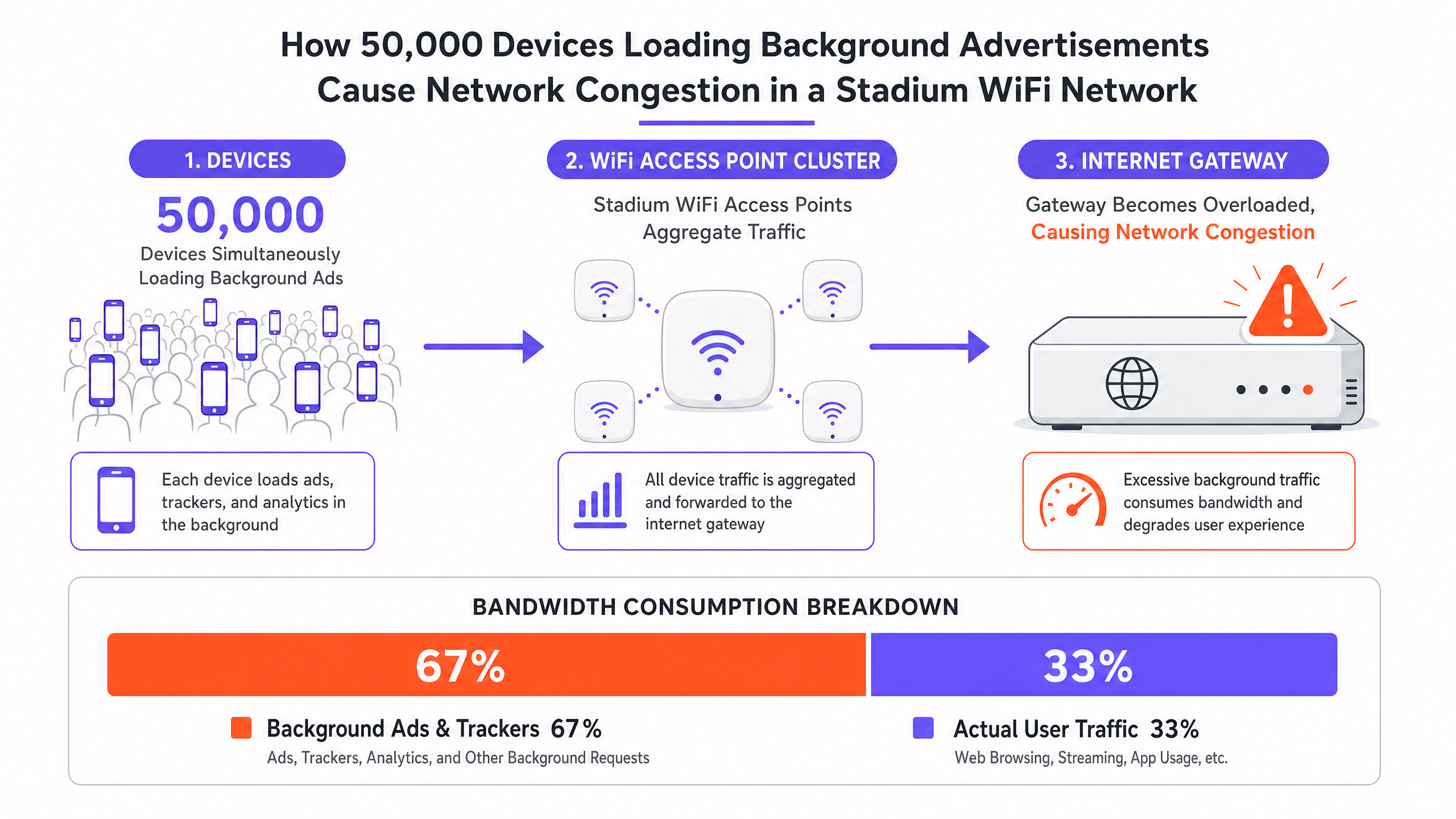

For CTOs and IT Directors managing high-density venues, slow stadium WiFi is a persistent and costly operational risk. Despite significant capital expenditure on multi-gigabit backhaul, high-density access points, and meticulous RF planning, networks frequently grind to a halt when venue capacity exceeds 80%. The root cause is rarely a hardware limitation. It is the invisible avalanche of background traffic. When 50,000 devices simultaneously connect to a Guest WiFi network, they initiate millions of micro-transactions: loading programmatic advertisements, syncing telemetry, and executing background SDK calls. This "chatter" can consume up to 60% of available bandwidth before a single user actively browses the web, exhausting NAT pools and saturating airtime. This guide details the technical mechanics of this congestion, provides a vendor-neutral architectural blueprint for implementing edge DNS filtering, and quantifies the ROI of doing so.

Technical Deep-Dive: The Anatomy of High-Density Congestion

The Background Traffic Avalanche

When a device associates with a guest WiFi network, it immediately begins a cascade of background activity that has nothing to do with what the user is actively doing. Modern mobile applications are embedded with numerous third-party SDKs — for analytics platforms, crash reporting services, and programmatic ad networks. Each SDK operates independently, polling its own servers on its own schedule. In a stadium environment, 50,000 devices performing these actions simultaneously creates a traffic profile that is fundamentally different from any other deployment scenario.

This traffic is characterised by high volume, low-payload requests: small-packet TCP handshakes, DNS queries, and HTTP GET requests for tracking pixels and ad creatives. While the total data transferred per device may seem negligible in isolation, the aggregate effect on the network's spectral efficiency is devastating. The IEEE 802.11 standard dictates that WiFi is a shared medium; every packet transmitted by any device must contend for airtime. Millions of background micro-transactions saturate this shared medium, leaving insufficient airtime for legitimate user sessions.

Three Failure Modes at Scale

High-density congestion typically manifests through three distinct failure modes, which often occur simultaneously:

| Failure Mode | Technical Cause | User-Perceived Symptom |
|---|---|---|
| State Table Exhaustion | Firewall/NAT gateway runs out of connection tracking memory | Dropped packets, connection timeouts, captive portal failures |
| Airtime Saturation | Shared RF medium overwhelmed by background micro-transactions | High latency, poor throughput despite low AP client counts |
| DNS Resolver Overload | Local resolvers overwhelmed by ad network and telemetry queries | Slow page loads, app failures, authentication delays |

State Table Exhaustion is the most insidious of these. A typical enterprise firewall may be sized to handle 500,000 to 1,000,000 concurrent connection states. In a 50,000-device stadium, with each device maintaining 20 to 30 background connections, the theoretical connection state count is 1.0 to 1.5 million before accounting for any active user traffic, which meets or exceeds the top of that sizing range. The result is dropped packets and failed connections across the board, affecting every user regardless of their own behaviour.
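The sizing arithmetic above can be rechecked with a few lines of Python. This is a minimal sketch that uses only the figures quoted in the paragraph; the function name `estimated_states` is illustrative, and the model assumes every device holds all of its background connections at the same moment.

```python
def estimated_states(devices: int, conns_per_device: int) -> int:
    """Concurrent connection-tracking entries if every device holds
    `conns_per_device` background connections at the same time."""
    return devices * conns_per_device


DEVICES = 50_000                     # device count from the text
TABLE_SIZES = (500_000, 1_000_000)   # firewall sizing range from the text

for conns in (20, 30):               # background connections per device, from the text
    states = estimated_states(DEVICES, conns)
    for size in TABLE_SIZES:
        print(f"{conns} conns/device -> {states:,} states, "
              f"{states / size:.0%} of a {size:,}-entry table")
```

Even the lower bound of 20 connections per device fills a one-million-entry table before any user opens an app.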

Airtime Saturation is compounded by the 802.11 contention mechanism (CSMA/CA). Every device must listen before transmitting, and the probability of collision rises steeply as more devices contend for the same channel. Background traffic from ad networks and telemetry services forces legitimate user traffic to queue, increasing latency and reducing effective throughput far below the theoretical capacity of the access points.
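To give a feel for how contention scales, the following toy model computes the chance that a single backoff round ends in a collision. It is a deliberately simplified sketch, not a model of real 802.11 behaviour: it assumes every contending station picks one slot uniformly from a fixed window of 16 slots (an assumed value) and ignores retries, window doubling, and hidden nodes.

```python
def p_clean_round(stations: int, slots: int = 16) -> float:
    """Probability that exactly one station holds the lowest backoff slot
    when each of `stations` picks uniformly from `slots` slots."""
    total = 0.0
    for k in range(slots):
        # One station picks slot k; all the others pick a strictly later slot.
        later = (slots - 1 - k) / slots
        total += stations * (1 / slots) * later ** (stations - 1)
    return total


for n in (2, 5, 10, 20, 40):
    print(f"{n:>2} contenders: collision probability {1 - p_clean_round(n):.2f}")
```

The trend matters more than the exact numbers: every additional device with a frame to send raises the odds that a round is wasted, which is why removing background frames helps even when each one is tiny.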

DNS Resolver Overload is often overlooked. In a typical stadium deployment, WiFi Analytics reveals that ad network domains — such as those operated by major programmatic advertising platforms — consistently appear in the top five most-queried DNS entries. Each query, while individually small, contributes to the aggregate load on local resolvers and triggers downstream TCP connection attempts that further burden the state table.

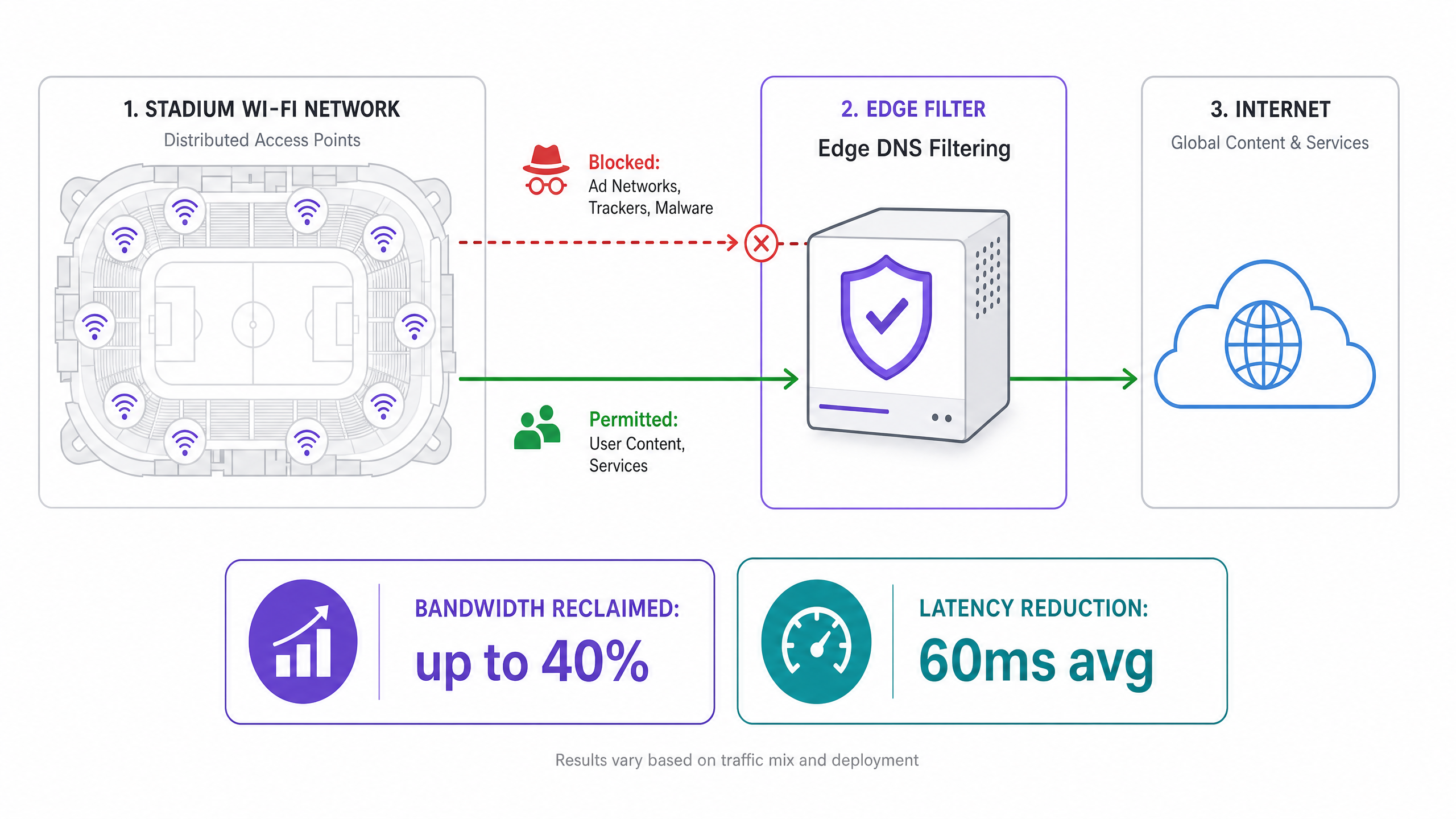

Implementation Guide: Edge DNS Filtering Architecture

The strategic response to this failure pattern is not to provision more hardware, but to eliminate the source of the noise. Edge DNS Filtering is the primary mitigation strategy, and when correctly deployed, it can reclaim up to 40% of WAN bandwidth and reduce average latency by 60ms or more.

Architectural Blueprint

Edge DNS filtering operates by intercepting DNS queries at the network perimeter. When a device requests the IP address of a known ad network, telemetry server, or malware domain, the filter responds with a null-route answer: either the unroutable address 0.0.0.0 or an NXDOMAIN response. With no usable address, the device cannot establish a TCP connection, which eliminates the associated state-table overhead, airtime consumption, and WAN bandwidth utilisation.
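The filtering decision described above can be sketched in Python. This is an illustration under stated assumptions rather than a resolver implementation: the blocklist entries are hypothetical, `upstream_ip` stands in for whatever a real recursive lookup would return, and matching a query against its parent domains is one common design choice among several.

```python
from dataclasses import dataclass
from typing import Optional

BLOCKLIST = {"ads.example", "telemetry.example"}  # hypothetical entries


@dataclass(frozen=True)
class Answer:
    rcode: str               # "NOERROR" or "NXDOMAIN"
    address: Optional[str]   # A-record value, if any


def is_blocked(qname: str, blocklist: set) -> bool:
    """True if qname or any of its parent domains is on the blocklist."""
    labels = qname.rstrip(".").lower().split(".")
    return any(".".join(labels[i:]) in blocklist for i in range(len(labels)))


def filter_query(qname: str, upstream_ip: str, use_nxdomain: bool = False) -> Answer:
    """Return a sinkhole answer for blocked names, else the upstream answer."""
    if is_blocked(qname, BLOCKLIST):
        if use_nxdomain:
            return Answer("NXDOMAIN", None)
        return Answer("NOERROR", "0.0.0.0")
    return Answer("NOERROR", upstream_ip)
```

For example, `filter_query("cdn.ads.example.", "203.0.113.10")` yields the 0.0.0.0 answer because the parent domain `ads.example` is listed, while an unlisted name passes through with its upstream address.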

Deployment Steps

Step 1: Deploy Local DNS Resolvers

Implement highly available local DNS resolvers at the venue edge. These must be capable of handling the full query load of the connected device population. Do not rely solely on upstream ISP resolvers, as this introduces latency and removes your ability to filter.

Step 2: Integrate Threat Intelligence and Ad-Blocking Feeds

Subscribe to enterprise-grade threat intelligence feeds that include known ad network domains, telemetry servers, and malware infrastructure. These feeds must be updated dynamically, ideally every few hours, to catch newly registered domains used by ad networks to evade blocking.

Step 3: Configure DHCP Policy

Configure DHCP servers to distribute the IP addresses of the local, filtered resolvers to all guest devices. This is the primary enforcement mechanism for directing client DNS traffic through the filter.

Step 4: Implement Egress Firewall Rules

This step is critical and frequently omitted. Implement strict egress firewall rules to block all outbound DNS traffic (TCP/UDP Port 53) to any destination other than the approved local resolvers. This prevents devices with hardcoded DNS settings from bypassing the filter.

Step 5: Address DNS over HTTPS (DoH)

As detailed in our guide on DNS Over HTTPS (DoH): Implications for Public WiFi Filtering, modern operating systems and browsers increasingly use DoH to encrypt DNS queries, routing them to external resolvers and bypassing local filtering entirely. Network administrators must explicitly block the IP addresses of known DoH providers at the firewall level. This forces the client to fall back to standard, unencrypted DNS, which can then be filtered. The Portuguese-language equivalent of this guidance is available at DNS Over HTTPS (DoH): Implicações para a Filtragem de WiFi Público for international deployments.

Step 6: Integrate with Identity and Access Management

For maximum effectiveness, link DNS filtering policies to user authentication. Leveraging profile-based authentication, as explored in our 2026 guide on passwordless access, allows venues to apply differentiated filtering policies based on user roles. General admission users receive aggressive filtering; press, corporate, or VIP users may receive more permissive policies that permit specific business applications.
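The egress rules from Steps 4 and 5 reduce to a small decision function. The sketch below expresses that logic in Python for clarity; it is not firewall configuration. The resolver addresses are assumed values, and the two DoH provider addresses are simply the well-known public resolver IPs, so a real deployment would need a maintained list.

```python
from ipaddress import ip_address

# Assumed addresses for the venue's local filtered resolvers.
LOCAL_RESOLVERS = {ip_address("10.0.0.53"), ip_address("10.0.1.53")}
# Two well-known public resolver addresses; a real list would be far longer.
DOH_PROVIDER_IPS = {ip_address("1.1.1.1"), ip_address("8.8.8.8")}


def egress_allowed(dst: str, proto: str, port: int) -> bool:
    """Apply the Step 4 and Step 5 rules to one outbound flow."""
    addr = ip_address(dst)
    # Step 4: classic DNS may only reach the approved local resolvers.
    if port == 53 and proto in ("tcp", "udp"):
        return addr in LOCAL_RESOLVERS
    # Step 5: drop all traffic to known DoH provider addresses.
    if addr in DOH_PROVIDER_IPS:
        return False
    return True
```

Checking port 53 first means a hardcoded `8.8.8.8` lookup is refused by the DNS rule, and an HTTPS connection to the same address is refused by the DoH rule, so both bypass paths are closed.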

Case Studies

Case Study 1: 60,000-Seat Football Stadium, UK

A Premier League football club was experiencing severe network degradation during half-time, with the captive portal timing out and social media sharing failing at peak moments. The WAN circuit was a 10Gbps dedicated connection, operating at only 28% utilisation during the incident. The firewall state table, however, was at 97% capacity.

Following a traffic audit using WiFi Analytics, the team identified that ad network domains accounted for 61% of all DNS queries. The top five domains were all programmatic advertising infrastructure. Edge DNS filtering was deployed with a blocklist of 1.2 million domains, combined with strict egress rules blocking Port 53 and DoH provider IPs.

The outcome: state table utilisation dropped to 34% at peak capacity, average latency fell from 280ms to 95ms, and WAN bandwidth utilisation at peak dropped from 28% to 17% — a 39% reduction in consumed bandwidth despite no change in the number of connected devices.

Case Study 2: International Conference Centre, Hospitality Sector

A major conference centre hosting a 15,000-delegate technology summit was experiencing complaints from attendees about slow WiFi despite a recently upgraded infrastructure. The venue had deployed 400 enterprise-grade access points and a 5Gbps WAN circuit.

Traffic analysis revealed that delegate devices — predominantly corporate laptops with multiple enterprise applications running — were generating an average of 45 background connections per device. The DNS resolver was processing 2.3 million queries per hour, with 68% destined for ad networks and analytics platforms.

Following edge DNS filtering deployment with policy integration tied to the conference registration system, the venue saw a 52% reduction in DNS query volume, a 41% reduction in firewall state table utilisation, and a measured improvement in average TCP connection establishment time from 180ms to 62ms. Delegate satisfaction scores for WiFi quality increased from 3.1 to 4.6 out of 5.
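The headline figures in both case studies can be rechecked with simple arithmetic. The sketch below uses only numbers quoted above; the helper name `pct_reduction` is illustrative, and the total-connections line assumes every delegate device holds its average of 45 connections simultaneously.

```python
def pct_reduction(before: float, after: float) -> float:
    """Relative reduction from `before` to `after`, as a percentage."""
    return (before - after) / before * 100


# Case Study 1 (figures from the text)
print(f"WAN utilisation 28% -> 17%: {pct_reduction(28, 17):.1f}% lower")
print(f"State table 97% -> 34%:     {pct_reduction(97, 34):.1f}% lower")
print(f"Latency 280ms -> 95ms:      {pct_reduction(280, 95):.1f}% lower")

# Case Study 2 (figures from the text)
queries_per_second = 2_300_000 / 3600
background_connections = 15_000 * 45
print(f"DNS load: {queries_per_second:.0f} queries/second on average")
print(f"Background connections: {background_connections:,}")
print(f"TCP setup 180ms -> 62ms:    {pct_reduction(180, 62):.1f}% lower")
```

The first line reproduces the 39% bandwidth reduction stated for the stadium, which confirms that the figure is a relative reduction in utilisation rather than a drop in percentage points.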

Best Practices & Standards

The following vendor-neutral best practices reflect current industry standards for high-density WiFi deployments:

- IEEE 802.11ax (Wi-Fi 6/6E): Deploy Wi-Fi 6 or 6E access points. The OFDMA and BSS Colouring features significantly reduce airtime contention in high-density environments, complementing the traffic reduction achieved by DNS filtering.

- WPA3-Enterprise: Enforce WPA3-Enterprise with IEEE 802.1X authentication for any deployment handling sensitive data. This supports the strong-cryptography requirements PCI DSS places on wireless networks in Retail environments and the security-of-processing obligations of the GDPR.

- GDPR Compliance: Transparently communicate the use of network optimisation tools, including DNS filtering, in the captive portal terms of service. Users must be informed that DNS queries are processed locally as part of the network management function.

- Monitoring and Analytics: Continuously monitor the top requested domains using WiFi Analytics and adjust filtering policies accordingly. Ad networks regularly register new domains to evade blocking; static blocklists become stale within days.

- Public Sector Deployments: For public sector and smart city WiFi deployments, as discussed in the context of Purple's public sector expansion, DNS filtering also serves a safeguarding function, blocking access to harmful content categories in compliance with local authority requirements.
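The monitoring practice in the list above comes down to ranking queried domains and tracking how much of the load is filterable. A minimal sketch follows, assuming the resolver can export one queried name per log entry; the log format, function names, and sample domains are all hypothetical.

```python
from collections import Counter


def normalise(qname: str) -> str:
    """Lower-case a query name and drop any trailing dot."""
    return qname.rstrip(".").lower()


def top_domains(query_log, n: int = 5):
    """Return the n most-queried names with their counts."""
    return Counter(normalise(q) for q in query_log).most_common(n)


def blocked_share(query_log, blocklist) -> float:
    """Fraction of queries whose exact name is on the blocklist."""
    names = [normalise(q) for q in query_log]
    if not names:
        return 0.0
    return sum(name in blocklist for name in names) / len(names)


# Hypothetical sample log for illustration only.
sample_log = ["ads.example", "Ads.Example.", "tickets.example", "ads.example"]
print(top_domains(sample_log, 2))
print(f"{blocked_share(sample_log, {'ads.example'}):.0%} of queries are filterable")
```

Running this per event and comparing the top entries against the current blocklist is one way to spot newly registered ad domains before the list goes stale.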

Troubleshooting & Risk Mitigation

False Positives

Risk: Overly aggressive filtering may block legitimate application functionality, such as ticketing apps, venue navigation services, or corporate VPN endpoints.

Mitigation: Implement a strict allowlist for mission-critical domains identified during a monitor-only baseline phase. Never go directly to enforcement mode in a production environment. A two-week monitoring period is the minimum recommended baseline before enforcement.
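The mitigation above implies two rules: the allowlist always wins, and during the baseline phase a blocklist match is only logged. A hedged sketch of that decision logic follows; the `Mode` enum, the action strings, and the example domains are illustrative assumptions, not the behaviour of any particular product.

```python
from enum import Enum


class Mode(Enum):
    MONITOR = "monitor"   # baseline phase: log matches, block nothing
    ENFORCE = "enforce"   # production phase: block matches


def matches(qname: str, domains: set) -> bool:
    """True if qname or any of its parent domains is in `domains`."""
    labels = qname.rstrip(".").lower().split(".")
    return any(".".join(labels[i:]) in domains for i in range(len(labels)))


def decide(qname: str, mode: Mode, allowlist: set, blocklist: set) -> str:
    """Return 'allow', 'log_only', or 'block' for one query."""
    if matches(qname, allowlist):      # mission-critical domains always resolve
        return "allow"
    if matches(qname, blocklist):
        return "block" if mode is Mode.ENFORCE else "log_only"
    return "allow"
```

With a blocklist containing `cdn.example` and an allowlist containing `tickets.cdn.example`, the ticketing hostname resolves in both modes, while `ads.cdn.example` is logged during the baseline and blocked once enforcement starts.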

Captive Portal Bypass via Background Traffic

Risk: Devices may fail to trigger the captive portal if background traffic satisfies the OS's captive portal detection mechanism (e.g., Apple's captive.apple.com check) before the user opens a browser.

Mitigation: Tighten the walled garden to permit only the specific domains required for captive portal detection and authentication. All other traffic must be blocked until the user is fully authenticated and the filtering policy is applied to their session.
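A tightened walled garden can be expressed as a short pre-authentication check. The sketch below is an assumption-laden illustration: the portal and resolver addresses are invented, and the permitted services (DHCP, DNS to the local resolver, and HTTP/HTTPS to the portal) follow the minimum set named in this guide's practice questions rather than any vendor's defaults.

```python
from dataclasses import dataclass

PORTAL_IP = "10.0.0.10"        # assumed captive portal address
LOCAL_RESOLVER = "10.0.0.53"   # assumed local filtered resolver


@dataclass(frozen=True)
class Packet:
    dst: str
    proto: str   # "tcp" or "udp"
    port: int    # destination port


def preauth_permit(pkt: Packet, authenticated: bool) -> bool:
    """Permit only the traffic a client needs to reach the portal."""
    if authenticated:
        return True   # post-auth traffic is handled by the session's filtering policy
    if pkt.proto == "udp" and pkt.port in (67, 68):
        return True   # DHCP
    if pkt.port == 53 and pkt.dst == LOCAL_RESOLVER:
        return True   # DNS, local resolver only
    if pkt.proto == "tcp" and pkt.port in (80, 443) and pkt.dst == PORTAL_IP:
        return True   # captive portal pages
    return False      # everything else is dropped until login completes
```

Because the default is to drop, background SDK traffic from an associated but unauthenticated device never reaches the stateful firewall.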

DoH Bypass

Risk: Devices using DoH will bypass local DNS filtering, rendering the entire strategy ineffective for those clients.

Mitigation: Maintain an up-to-date blocklist of DoH provider IP addresses and block them at the firewall. This is not a one-time configuration; new DoH providers emerge regularly and must be tracked.

Offline Map and Navigation Services

For venues deploying indoor navigation alongside WiFi — such as those using Purple's Offline Maps Mode — ensure that the map tile servers and navigation APIs are explicitly allowlisted. These services are critical to the user experience and must not be caught by broad ad-network filtering rules.

ROI & Business Impact

The business case for edge DNS filtering is compelling across multiple dimensions:

| Metric | Typical Outcome | Business Impact |
|---|---|---|
| WAN Bandwidth Reduction | 30–40% | Deferred circuit upgrade costs; extended infrastructure lifecycle |
| Latency Reduction | 40–70ms average | Higher user engagement with venue apps and digital services |
| State Table Utilisation | 50–65% reduction at peak | Deferred firewall hardware refresh; reduced incident risk |
| DNS Query Volume | 40–60% reduction | Reduced resolver load; improved authentication speed |
| User Satisfaction | Measurable NPS improvement | Higher dwell time, increased F&B spend, improved brand perception |

For a stadium spending £80,000 per year on WAN connectivity and facing a £200,000 hardware refresh cycle, a 35% bandwidth reduction translates to approximately £28,000 in annual WAN savings and a potential 18-month extension of the hardware refresh cycle — a combined three-year saving exceeding £100,000, against an implementation cost typically in the range of £15,000 to £30,000 for a venue of this scale.
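The ROI example above can be broken into its parts. The sketch below uses only the figures quoted in the paragraph and assumes, as the paragraph does, that WAN cost scales linearly with bandwidth. The text does not state what the 18-month refresh deferral is worth, so the code derives the minimum value it would need rather than inventing one; all function and variable names are illustrative.

```python
WAN_COST_PER_YEAR = 80_000                # GBP, from the text
BANDWIDTH_REDUCTION_PCT = 35              # from the text
IMPLEMENTATION_COSTS = (15_000, 30_000)   # GBP range from the text
CLAIMED_THREE_YEAR_SAVING = 100_000       # GBP, lower bound from the text


def annual_wan_saving(cost: int, reduction_pct: int) -> int:
    """WAN saving per year, assuming cost scales linearly with bandwidth."""
    return cost * reduction_pct // 100


def payback_months(implementation_cost: int, annual_saving: int) -> float:
    """Months of WAN savings needed to cover the implementation cost."""
    return implementation_cost * 12 / annual_saving


saving = annual_wan_saving(WAN_COST_PER_YEAR, BANDWIDTH_REDUCTION_PCT)
three_year_wan = 3 * saving
# Whatever remains of the claimed total must come from the deferred refresh.
implied_deferral_value = CLAIMED_THREE_YEAR_SAVING - three_year_wan

print(f"Annual WAN saving: £{saving:,}")
print(f"Three-year WAN saving: £{three_year_wan:,}")
print(f"Refresh deferral must be worth at least £{implied_deferral_value:,}")
for cost in IMPLEMENTATION_COSTS:
    print(f"£{cost:,} implementation: payback in "
          f"{payback_months(cost, saving):.1f} months of WAN savings")
```

On these figures, WAN savings alone contribute £84,000 over three years, so the hardware deferral needs to be worth at least £16,000 for the combined total to exceed £100,000.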

Key Definitions

State Table Exhaustion

A condition where a firewall or NAT gateway runs out of memory allocated for tracking active network connections, causing it to drop new connection requests.

Occurs in high-density venues when tens of thousands of devices simultaneously initiate micro-connections to ad networks and telemetry servers. The primary cause of the slow stadium WiFi paradox, in which the WAN circuit appears underutilised but the network is effectively broken.

Airtime Utilisation

The percentage of time the RF spectrum on a given WiFi channel is actively being used to transmit data or management frames.

High airtime utilisation from background chatter reduces the capacity available for active user sessions. In a high-density stadium, background traffic can drive airtime utilisation above 80%, leaving insufficient capacity for legitimate user traffic.

Edge DNS Filtering

The practice of intercepting DNS queries at the network perimeter and blocking resolution for known malicious, high-overhead, or policy-violating domains by returning a null route or NXDOMAIN response.

The primary architectural mitigation for background traffic congestion in high-density venues. Prevents devices from establishing connections to ad networks and telemetry servers, reclaiming bandwidth and reducing state table load.

DNS over HTTPS (DoH)

A protocol for performing DNS resolution via the HTTPS protocol, encrypting the DNS query and routing it to an external resolver, bypassing local DNS infrastructure.

The primary bypass mechanism for edge DNS filtering. Must be explicitly blocked at the IP level to ensure all DNS traffic passes through the local, filtered resolver.

Null Route

A network route that discards traffic destined for a specific IP address or prefix, dropping it without forwarding.

In DNS filtering, the term is used loosely for the sinkhole answer returned for a blocked domain (0.0.0.0 or NXDOMAIN), which prevents the client from initiating a TCP connection and eliminates the associated network overhead.

Walled Garden

A restricted network environment that limits device access to a predefined set of resources, typically used to enforce captive portal authentication before granting full internet access.

Must be strictly configured to prevent background traffic from satisfying OS captive portal detection mechanisms before the user authenticates, which would allow unrestricted background traffic to flow without a filtering policy being applied.

Profile-Based Authentication

An authentication method that dynamically applies specific network policies — including DNS filtering rules, bandwidth limits, and access controls — based on the authenticated user's identity or role.

Enables venues to offer differentiated network experiences, applying aggressive filtering to general admission users while providing more permissive policies to VIPs, press, or corporate guests.

OFDMA (Orthogonal Frequency Division Multiple Access)

A multi-user version of OFDM that divides a WiFi 6 (802.11ax) channel into smaller resource units, so a single transmission can serve multiple users simultaneously, reducing contention and improving spectral efficiency.

A key feature of Wi-Fi 6 that directly addresses airtime contention in high-density deployments. Works in conjunction with DNS filtering to maximise the usable capacity of each access point.

Spectral Efficiency

The amount of useful data that can be transmitted over a given bandwidth in a specific communication system.

Reduced by background micro-transactions that consume airtime without delivering value to end users. Edge filtering and Wi-Fi 6 features like OFDMA work together to maximise spectral efficiency.

Worked Examples

A 50,000-seat stadium is experiencing severe network degradation during halftime. The IT team has verified that the 10Gbps WAN circuit is only at 30% utilisation, but APs are reporting high airtime utilisation and the firewall state table is at 95% capacity. Adding more APs has not improved performance.

The issue is not raw bandwidth or AP density, but connection state exhaustion caused by background application chatter. The solution requires deploying an Edge DNS Filter in a phased approach:

- Phase 1: Deploy local DNS resolvers and configure them in monitor-only mode for two weeks. Analyse the top 100 queried domains.

- Phase 2: Configure DHCP to point all guest clients to the local resolvers. Implement egress firewall rules blocking outbound TCP/UDP Port 53 to all external IPs.

- Phase 3: Block the IP addresses of known DoH providers (Cloudflare 1.1.1.1, Google 8.8.8.8, etc.) at the firewall.

- Phase 4: Activate enforcement mode on the DNS filter with a blocklist targeting the identified ad network and telemetry domains.

- Phase 5: Monitor state table utilisation and airtime metrics over the next three events to validate the improvement.

A major transport hub wants to implement DNS filtering across 12 terminal buildings to improve network performance for 80,000 daily passengers. They are concerned about breaking legitimate airline ticketing applications and airport operations systems.

Implement a centralised, cloud-managed DNS filtering platform with local forwarders at each terminal:

- Phase 1: Deploy local forwarders in all 12 terminals, pointing to a centralised management plane.

- Phase 2: Run in monitor-only mode for 30 days across all terminals simultaneously. Use the analytics to build a comprehensive allowlist of airline ticketing domains, airport operations APIs, and ground handling system endpoints.

- Phase 3: Segment the network into guest WiFi and operational technology (OT) VLANs. Apply aggressive filtering to guest WiFi; apply a strict allowlist-only policy to OT VLANs.

- Phase 4: Enforce filtering on guest WiFi.

- Phase 5: Implement automated allowlist management. When a new airline begins operations at the terminal, their domain requirements are added to the allowlist via a change management process.

Practice Questions

Q1. You have deployed an Edge DNS filter and configured DHCP to point all clients to the local resolver. After the first major event, you find that bandwidth utilisation has only dropped by 5%, and traffic analysis shows many devices are still successfully resolving ad network domains. What is the most likely architectural oversight, and what is the remediation?

Hint: Consider how modern browsers and operating systems handle DNS resolution by default, and what happens when a device has a hardcoded DNS server configured.

Model answer

There are two likely causes. First, the network is failing to block DNS over HTTPS (DoH) traffic. Modern browsers will attempt to use DoH, routing encrypted DNS queries to external resolvers like Cloudflare or Google, bypassing the local filter entirely. The remediation is to implement egress firewall rules blocking the IP addresses of known DoH providers. Second, some devices may have hardcoded DNS server addresses (e.g., 8.8.8.8) in their network configuration, bypassing DHCP-assigned resolvers. The remediation is to implement egress firewall rules blocking all outbound TCP/UDP Port 53 traffic to any destination other than the local resolvers, forcing all DNS traffic through the filter regardless of client configuration.

Q2. During a major event, the captive portal is timing out for users attempting to connect, even though the APs show relatively low client counts (only 40% of capacity). The WAN circuit is at 15% utilisation. What is the likely cause, and what architectural changes would prevent this at the next event?

Hint: Think about what happens to device traffic in the period between WiFi association and captive portal authentication, and what network resource is most likely to be exhausted.

Model answer

The firewall's state table is likely exhausted by background traffic from devices that have associated with the AP but not yet authenticated through the captive portal. In the unauthenticated state, if the walled garden is too permissive, background traffic flows freely, creating dozens of connection state entries per device. Assuming a venue designed for 50,000 devices, 40% of capacity is 20,000 devices, and even a brief window of unrestricted background traffic from that many clients can exhaust the state table before users attempt to authenticate. The architectural remediation requires two changes. First, tighten the walled garden to permit only the minimum required traffic: DHCP (UDP 67/68), DNS to the local resolver only, and HTTP/HTTPS to the captive portal IP. Block all other traffic until authentication is complete. Second, consider deploying a dedicated stateless ACL at the AP or switch level to drop background traffic in the pre-authentication state, preventing it from even reaching the stateful firewall.

Q3. A retail chain with 500 locations wants to implement DNS filtering to improve POS system reliability and reduce WAN costs. They need uniform policy enforcement but also need to ensure that new point-of-sale software vendors can be onboarded without causing outages. What architectural approach should be taken, and what operational process should accompany it?

Hint: Consider the tension between centralised policy management and the operational agility needed to support a dynamic retail technology stack.

Model answer

Deploy a cloud-managed DNS filtering solution with local forwarders at each site. The centralised management plane allows for uniform policy definition and threat feed updates across all 500 locations simultaneously, while the local forwarders ensure low-latency resolution and resilience against WAN link degradation. For operational agility, implement a tiered allowlist management process: a permanent allowlist for core POS and payment processing domains (which should be treated as change-controlled infrastructure), a temporary allowlist for new vendor onboarding (with a 90-day review cycle), and a self-service request process for store managers to flag false positives. Critically, the PCI DSS requirement for network segmentation means the POS VLAN must be isolated from the guest WiFi VLAN, with separate filtering policies applied to each. The guest WiFi policy can be aggressive; the POS policy should be allowlist-only, permitting only explicitly approved payment processor and software update domains.