How to Manage Bandwidth on a WiFi Network

This authoritative guide provides IT managers, network architects, and CTOs with practical strategies for managing bandwidth on enterprise WiFi networks across high-density venues. It covers Quality of Service (QoS), traffic shaping, per-user rate limiting, and Deep Packet Inspection — the essential controls for running a fair, high-performance guest network. By integrating these techniques with Purple's guest WiFi and analytics platform, organisations can move from reactive firefighting to proactive, policy-driven network management.

- Executive Summary

- Technical Deep-Dive

- Per-User Rate Limiting

- Quality of Service (QoS) and Traffic Prioritization

- Traffic Shaping and Deep Packet Inspection (DPI)

- SSID Segmentation and VLAN Architecture

- Implementation Guide

- Step 1: Define the Policy Framework

- Step 2: Configure Edge Enforcement

- Step 3: Dynamic Policy Assignment via RADIUS

- Step 4: Gateway Traffic Shaping

- Best Practices

- Troubleshooting & Risk Mitigation

- ROI & Business Impact

Executive Summary

For enterprise venues — whether a sprawling retail complex, a high-density stadium, or a 500-room hotel — unmanaged WiFi bandwidth is a significant operational risk. A single guest streaming 4K video or downloading large updates should never degrade the performance of point-of-sale systems, VoIP communications, or critical IoT infrastructure. Managing bandwidth on a WiFi network requires a multi-layered approach that moves beyond simple rate limiting to encompass Quality of Service (QoS), intelligent traffic shaping, and dynamic policy enforcement tied to user authentication.

This technical reference guide provides IT managers, network architects, and CTOs with actionable deployment strategies to control throughput, enforce airtime fairness, and prioritize critical applications. By implementing these vendor-neutral best practices and integrating them with Guest WiFi and WiFi Analytics platforms like Purple, organisations can transform their wireless infrastructure from an unmanaged utility into a high-performance, controlled asset that directly supports business operations.

Technical Deep-Dive

Bandwidth management in an enterprise wireless environment is fundamentally about enforcing fairness and protecting critical services. The architecture relies on several interconnected mechanisms operating at different layers of the network stack.

Per-User Rate Limiting

The first and most fundamental layer of defence is per-user rate limiting, typically enforced at the Access Point (AP) or the Wireless LAN Controller (WLC). By capping the maximum throughput for individual client devices (for example, 5 Mbps down / 2 Mbps up), network administrators prevent any single user from monopolising the available bandwidth. This is essential in environments like Hospitality, where hundreds of guests may connect simultaneously to a shared internet circuit.

Rate limiting is applied at the association level, meaning the policy is enforced as soon as a device joins the SSID. In more sophisticated deployments, the limit is returned dynamically by the RADIUS server as a Vendor-Specific Attribute (VSA) at the point of authentication, enabling per-user or per-group policies rather than a single blanket limit for all devices.
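Per-user caps of this kind are commonly implemented with a token bucket. The following is a minimal Python sketch of that idea, not any vendor's implementation: the class name `TokenBucket`, the burst size, and the explicit `now` timestamp argument are illustrative assumptions.

```python
from dataclasses import dataclass, field


@dataclass
class TokenBucket:
    """Token-bucket limiter. Tokens are bytes, refilled at rate_bps / 8 per second."""
    rate_bps: int      # sustained rate in bits per second
    burst_bytes: int   # bucket depth: the largest burst allowed, in bytes
    tokens: float = field(init=False)
    last: float = field(init=False)

    def __post_init__(self) -> None:
        self.tokens = float(self.burst_bytes)  # start with a full bucket
        self.last = 0.0

    def allow(self, packet_bytes: int, now: float) -> bool:
        """Return True if the packet fits within the cap at time `now` (seconds)."""
        elapsed = max(0.0, now - self.last)
        refill = elapsed * self.rate_bps / 8
        self.tokens = min(float(self.burst_bytes), self.tokens + refill)
        self.last = now
        if packet_bytes <= self.tokens:
            self.tokens -= packet_bytes
            return True
        return False  # over the cap: a real device would queue or drop the packet


# Hypothetical example: a 5 Mbps downstream cap with a 15 kB burst allowance.
downlink = TokenBucket(rate_bps=5_000_000, burst_bytes=15_000)
print(downlink.allow(1500, now=0.0))
```

Passing the timestamp in explicitly keeps the sketch deterministic; a real enforcement point would read a monotonic clock and keep one bucket per client and direction.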

Quality of Service (QoS) and Traffic Prioritization

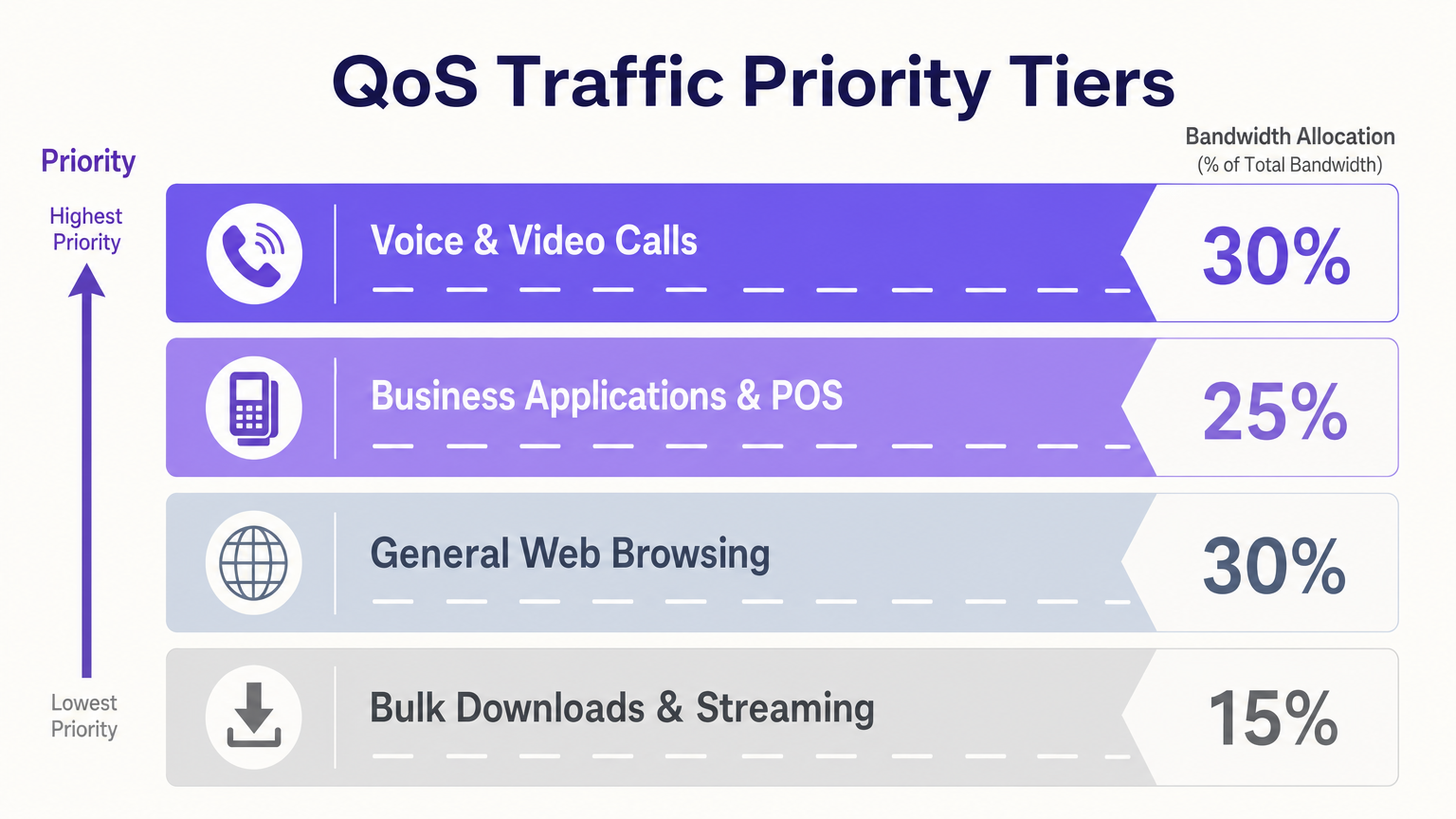

While rate limiting controls overall volume, QoS dictates the priority of different traffic types. In an enterprise setting, latency-sensitive applications such as Voice over IP (VoIP) and video conferencing must take precedence over general web browsing, which in turn must take precedence over bulk downloads.

This prioritization is achieved using Differentiated Services Code Point (DSCP) tagging at Layer 3, and IEEE 802.11e / Wi-Fi Multimedia (WMM) at Layer 2. When a packet enters the network, it is inspected and tagged with a DSCP value. The network switches and access points then use these tags to place packets into different hardware queues, ensuring that critical traffic is transmitted first during periods of congestion.

The four WMM access categories map to the following traffic types:

| WMM Access Category | DSCP Mapping | Typical Traffic |
|---|---|---|
| Voice (VO) | EF (46) | VoIP, push-to-talk |
| Video (VI) | AF41 (34) | Video conferencing, IPTV |
| Best Effort (BE) | Default (0) | Web browsing, email |
| Background (BK) | CS1 (8) | OS updates, P2P |
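As a rough illustration, the table above can be expressed as a lookup. This sketch covers only the four code points listed; treating every other code point as Best Effort is an assumption made here, and real controllers use fuller, often vendor-specific, mapping tables. The helper `tos_byte` shows where the 6-bit DSCP value sits in the 8-bit field of the IP header.

```python
# DSCP value -> WMM access category, taken from the table above.
DSCP_TO_WMM = {
    46: "VO",  # EF
    34: "VI",  # AF41
    0: "BE",   # Default
    8: "BK",   # CS1
}


def wmm_access_category(dscp: int) -> str:
    """Return the WMM access category for a DSCP value (0-63)."""
    if not 0 <= dscp <= 63:
        raise ValueError("DSCP must be in the range 0-63")
    # Assumption: code points not in the table fall back to Best Effort.
    return DSCP_TO_WMM.get(dscp, "BE")


def tos_byte(dscp: int) -> int:
    """DSCP occupies the upper six bits of the former IPv4 ToS byte."""
    if not 0 <= dscp <= 63:
        raise ValueError("DSCP must be in the range 0-63")
    return dscp << 2


print(wmm_access_category(46), hex(tos_byte(46)))  # VO 0xb8
```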

Traffic Shaping and Deep Packet Inspection (DPI)

Traffic shaping goes beyond simple prioritization by controlling the rate of specific application flows. Utilizing Deep Packet Inspection (DPI) at the firewall or gateway, administrators can identify the underlying application — such as Netflix, BitTorrent, or Microsoft Teams — regardless of the port or protocol used. This allows for granular policies, such as throttling streaming video to 2 Mbps to force standard definition playback, rather than blocking the service entirely.

Blocking services is almost always the wrong approach in a Retail or hospitality context. It leads to poor user experience, increased helpdesk tickets, and frustrated guests who will associate the negative experience with your brand.

SSID Segmentation and VLAN Architecture

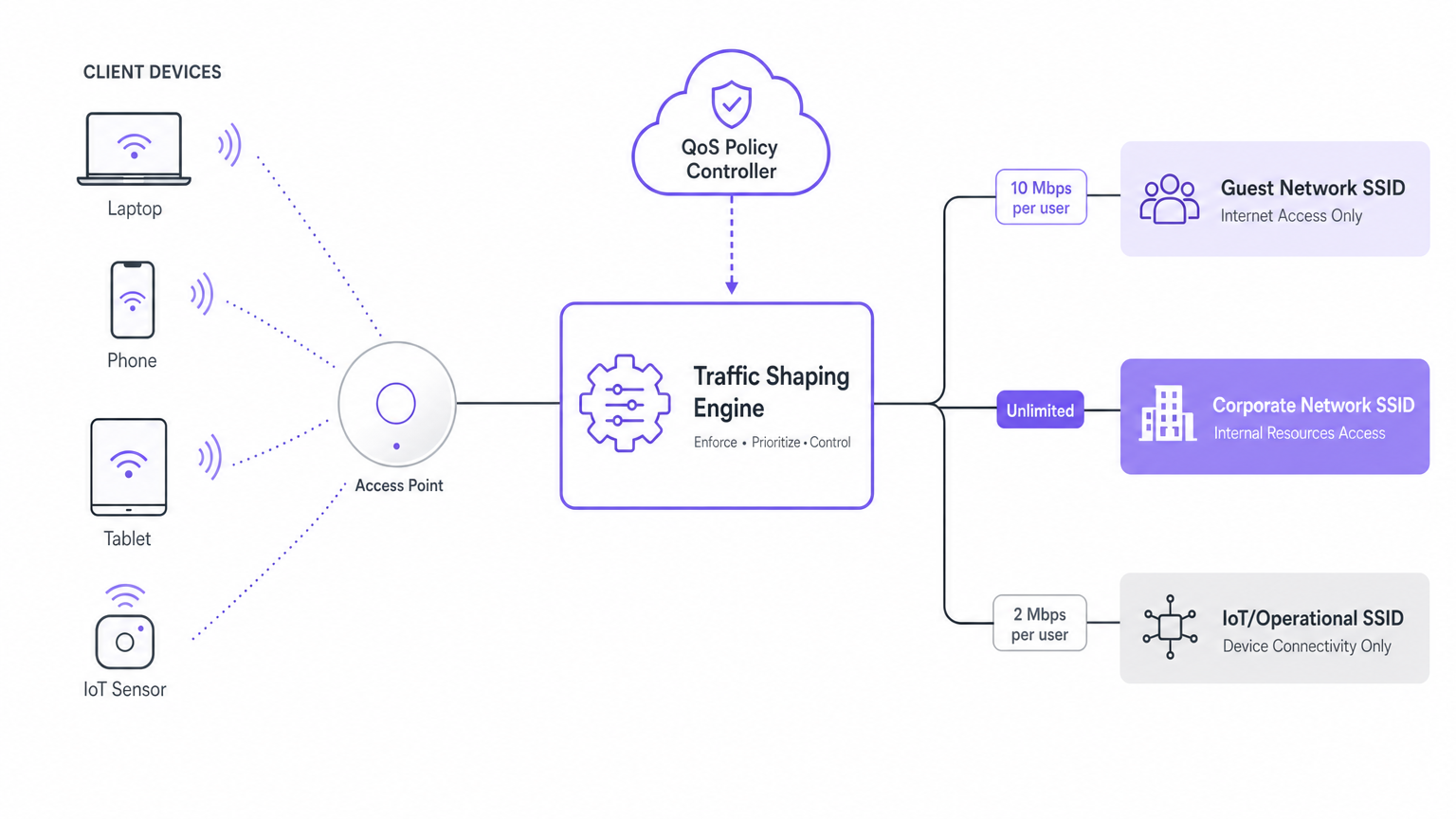

Effective bandwidth management begins with proper network segmentation. Deploying multiple SSIDs — each mapped to a dedicated VLAN — allows administrators to apply distinct policies to different user groups:

- Guest SSID: Internet-only access, per-user rate limits, DPI-based application throttling.

- Corporate SSID: Full internal network access, authenticated via IEEE 802.1X / WPA3-Enterprise, no rate limiting.

- IoT/Operational SSID: Restricted to specific application flows (e.g., MQTT for sensor data), low bandwidth allocation.

This segmentation is a prerequisite for compliance with standards such as PCI DSS, which requires that cardholder data environments be isolated from guest networks, and GDPR, which mandates appropriate technical controls to protect personal data.
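The segmentation plan can be captured as data and sanity-checked before it is pushed to switches and controllers. The sketch below is illustrative only: the SSID names, VLAN IDs, and limits are hypothetical, and the check covers just two basic mistakes (an out-of-range VLAN ID and two SSIDs sharing a VLAN).

```python
from dataclasses import dataclass
from typing import Dict, List, Optional


@dataclass(frozen=True)
class SsidPolicy:
    ssid: str
    vlan_id: int
    internet_only: bool
    per_user_limit_mbps: Optional[float]  # None means no per-user cap


# Hypothetical names, VLAN IDs, and limits for illustration only.
POLICIES = [
    SsidPolicy("Venue-Guest", 20, True, 5.0),
    SsidPolicy("Venue-Corp", 30, False, None),
    SsidPolicy("Venue-IoT", 40, False, 1.0),
]


def validate(policies: List[SsidPolicy]) -> List[str]:
    """Return a list of human-readable problems; an empty list means none found."""
    problems: List[str] = []
    seen: Dict[int, str] = {}
    for p in policies:
        if not 1 <= p.vlan_id <= 4094:
            problems.append(f"{p.ssid}: VLAN {p.vlan_id} is outside 1-4094")
        if p.vlan_id in seen:
            problems.append(f"{p.ssid}: shares VLAN {p.vlan_id} with {seen[p.vlan_id]}")
        else:
            seen[p.vlan_id] = p.ssid
    return problems


print(validate(POLICIES))  # []
```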

Implementation Guide

Deploying effective bandwidth management requires a structured approach to policy design and enforcement. The following steps outline a standard deployment methodology for enterprise environments.

Step 1: Define the Policy Framework

Avoid over-complicating the QoS policy. Start with a simplified three-tier model:

- Critical Tier (High Priority): Operational technology, VoIP, POS systems, and internal corporate applications. Assign DSCP EF or AF41.

- Standard Tier (Medium Priority): General web browsing, email, and social media for guest access. Assign DSCP Default (0).

- Scavenger Tier (Low Priority): Background OS updates, bulk file transfers, and peer-to-peer traffic. Assign DSCP CS1.
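The three tiers above can be sketched as a small classification table. The application category names are hypothetical labels, such as a DPI engine might emit, and the sketch assigns EF to the whole Critical tier for simplicity even though the framework also allows AF41. Unknown categories default to the Standard tier, which is an assumption of this example.

```python
from enum import Enum
from typing import Tuple


class Tier(Enum):
    CRITICAL = "critical"
    STANDARD = "standard"
    SCAVENGER = "scavenger"


# DSCP values per tier: EF (46), Default (0), CS1 (8).
TIER_DSCP = {Tier.CRITICAL: 46, Tier.STANDARD: 0, Tier.SCAVENGER: 8}

# Hypothetical application categories mapped to the tiers described above.
APP_TIER = {
    "voip": Tier.CRITICAL,
    "pos": Tier.CRITICAL,
    "web": Tier.STANDARD,
    "email": Tier.STANDARD,
    "social": Tier.STANDARD,
    "os_update": Tier.SCAVENGER,
    "bulk_transfer": Tier.SCAVENGER,
    "p2p": Tier.SCAVENGER,
}


def classify(app_category: str) -> Tuple[Tier, int]:
    """Return (tier, DSCP value) for an application category."""
    tier = APP_TIER.get(app_category, Tier.STANDARD)  # assumption: unknown -> Standard
    return tier, TIER_DSCP[tier]


print(classify("pos"))
```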

Step 2: Configure Edge Enforcement

Implement per-user rate limiting at the AP level. For a typical retail environment offering free guest WiFi, a limit of 2–5 Mbps per user is generally sufficient for standard browsing and social media engagement without saturating the WAN link. For conference environments where users may need to run video calls, 10–15 Mbps per user is more appropriate, subject to the total WAN capacity.

Step 3: Dynamic Policy Assignment via RADIUS

For advanced deployments, integrate bandwidth policies with the authentication layer. When a user authenticates via a captive portal or 802.1X, the RADIUS server can return Vendor-Specific Attributes (VSAs) that dynamically assign the user to a specific bandwidth tier or VLAN based on their profile.

Using Purple's Guest WiFi platform, a loyalty programme member might receive a 20 Mbps allocation, while a standard guest receives 5 Mbps. This approach is highly effective in Transport hubs and premium hospitality venues where differentiated service tiers are a competitive advantage.
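To make the tier-to-attribute step concrete, the sketch below builds the reply attributes a RADIUS server might return for each tier. The attribute names follow the WISPr and RFC 3580 conventions found in common RADIUS dictionaries; whether a given AP or controller honours them, and which attributes Purple's platform actually returns, is not stated in this guide and is an assumption here. The function name `reply_attributes` and the tier keys are hypothetical.

```python
from typing import Dict, Optional, Union

MBPS = 1_000_000  # WISPr bandwidth attributes are expressed in bits per second

# Downstream allocations from the example above: loyalty 20 Mbps, standard 5 Mbps.
TIER_DOWN_MBPS = {"loyalty": 20, "standard": 5}


def reply_attributes(tier: str, vlan_id: Optional[int] = None) -> Dict[str, Union[int, str]]:
    """Build Access-Accept attributes for a user tier (illustrative only)."""
    # Assumption: unknown tiers fall back to the standard guest allocation.
    down = TIER_DOWN_MBPS.get(tier, TIER_DOWN_MBPS["standard"])
    attrs: Dict[str, Union[int, str]] = {"WISPr-Bandwidth-Max-Down": down * MBPS}
    if vlan_id is not None:
        # Standard tunnel attributes used for dynamic VLAN assignment.
        attrs["Tunnel-Type"] = "VLAN"
        attrs["Tunnel-Medium-Type"] = "IEEE-802"
        attrs["Tunnel-Private-Group-Id"] = str(vlan_id)
    return attrs


print(reply_attributes("loyalty", vlan_id=20))
```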

Step 4: Gateway Traffic Shaping

Ensure that the upstream WAN link is protected. Implement traffic shaping at the edge firewall to guarantee bandwidth for critical VLANs before the traffic reaches the ISP. If the internet pipe is saturated, even the best wireless QoS configuration will fail to deliver a good user experience. This is the most frequently overlooked step in enterprise WiFi deployments.
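The effect of guaranteed minimums can be modelled with a two-pass allocation: satisfy each VLAN up to its guarantee first, then share whatever is left. This is a simplified model of the outcome a shaper aims for, not a shaper itself; the function name, the proportional sharing rule, and the VLAN labels are assumptions for illustration.

```python
from typing import Dict


def allocate_wan(capacity_mbps: float,
                 guarantees: Dict[str, float],
                 demands: Dict[str, float]) -> Dict[str, float]:
    """Allocate WAN capacity (Mbps) to VLANs, honouring guaranteed minimums first."""
    if sum(guarantees.values()) > capacity_mbps:
        raise ValueError("guarantees exceed WAN capacity")

    # Pass 1: each VLAN receives its demand, up to its guaranteed minimum.
    alloc = {v: min(d, guarantees.get(v, 0.0)) for v, d in demands.items()}
    remaining = capacity_mbps - sum(alloc.values())

    unmet = {v: demands[v] - alloc[v] for v in demands}
    total_unmet = sum(unmet.values())
    if total_unmet <= remaining:
        return {v: float(d) for v, d in demands.items()}  # no congestion

    # Pass 2: share the remainder in proportion to each VLAN's unmet demand.
    return {v: alloc[v] + remaining * unmet[v] / total_unmet for v in demands}


# Hypothetical example: 500 Mbps link, 50 Mbps guaranteed to the operational VLAN.
print(allocate_wan(500, {"ops": 50, "guest": 0}, {"ops": 30, "guest": 600}))
```

In the example, the operational VLAN asks for less than its guarantee and receives all of it, while guest traffic is held to the capacity that remains.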

Best Practices

Enable Airtime Fairness. In high-density environments, airtime fairness is more critical than raw throughput. This feature prevents older, slower devices (e.g., 802.11b/g) from monopolising the radio time, ensuring that modern devices receive their fair share of transmission opportunities. Without it, a single legacy device transmitting at 1 Mbps can reduce the effective throughput of an entire AP sector.
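A back-of-the-envelope calculation shows why one slow client hurts everyone. The sketch below deliberately ignores preambles, acknowledgements, and contention overhead, so the numbers are illustrative rather than predictive; the function names are hypothetical.

```python
from typing import List


def frame_airtime_us(frame_bytes: int, phy_rate_mbps: float) -> float:
    """Time on air in microseconds: bits divided by Mbit/s."""
    return frame_bytes * 8 / phy_rate_mbps


def throughput_equal_frames(phy_rates_mbps: List[float]) -> List[float]:
    """Without airtime fairness: clients take turns sending equal-sized frames."""
    time_per_bit = sum(1.0 / r for r in phy_rates_mbps)
    return [1.0 / time_per_bit for _ in phy_rates_mbps]


def throughput_equal_airtime(phy_rates_mbps: List[float]) -> List[float]:
    """With airtime fairness: each client gets an equal share of transmission time."""
    n = len(phy_rates_mbps)
    return [r / n for r in phy_rates_mbps]


rates = [1.0, 54.0]  # one legacy client at 1 Mbps, one client at 54 Mbps
print(frame_airtime_us(1500, 1.0))      # 12000.0
print(throughput_equal_frames(rates))   # both clients end up just under 1 Mbps
print(throughput_equal_airtime(rates))  # [0.5, 27.0]
```

Under equal-frame sharing the fast client is dragged down to roughly the slow client's rate; under equal airtime it keeps half of its own rate.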

Disable Lower Data Rates. Force older devices off the network or onto the 2.4 GHz band by disabling data rates below 12 Mbps or 24 Mbps on the 5 GHz radio. This keeps the 5 GHz band clear for faster, more efficient transmissions and reduces the overhead of managing low-rate clients.

Implement Band Steering. Actively encourage dual-band capable clients to connect to the less congested 5 GHz or 6 GHz bands, leaving the 2.4 GHz band for legacy devices and IoT sensors. This is particularly relevant in large-area deployments — see the Indoor Positioning System: UWB, BLE, & WiFi Guide for more on managing mixed-device environments.

Monitor and Iterate. Bandwidth management is not a 'set and forget' configuration. Utilize WiFi Analytics dashboards to monitor application usage trends, peak concurrency periods, and per-SSID throughput. Adjust shaping policies as user behaviour evolves — a retail venue's traffic profile in December will differ significantly from its profile in July.

Protect Healthcare Environments. In clinical settings, the stakes are considerably higher. Refer to WiFi in Hospitals: A Guide to Secure Clinical Networks for specific guidance on managing bandwidth in environments where medical device communication must be guaranteed.

Troubleshooting & Risk Mitigation

When bandwidth management policies fail, the symptoms often manifest as intermittent connectivity, dropped VoIP calls, or slow captive portal rendering. The following are the most common failure modes and their remediation.

Asymmetric QoS. DSCP tags are often stripped by the ISP or not honoured across the WAN link. Ensure that traffic shaping is applied at the egress point of your network to control the flow before it leaves your administrative domain. Do not rely on the ISP to honour your internal DSCP markings.

Over-Subscribed APs. Rate limiting will not solve physical layer congestion. If an AP has too many associated clients (typically more than 50–75 active devices on a single radio), the overhead of managing the connections will degrade performance regardless of bandwidth caps. In such cases, physical network redesign and additional AP deployment are required.

Captive Portal Timeouts. If bandwidth limits are set too low (below 1 Mbps), the initial redirect to the captive portal may time out, preventing users from authenticating. Ensure that the pre-authentication 'walled garden' state has sufficient bandwidth allocated to load the portal assets quickly. A minimum of 2 Mbps for the authentication flow is recommended.

VLAN Misconfiguration. Incorrect VLAN tagging at the switch port connected to the AP can cause traffic from different SSIDs to bleed into the wrong network segment, bypassing QoS and security policies. Always validate VLAN assignments with a packet capture before going live.

ROI & Business Impact

Effective bandwidth management directly impacts the bottom line by deferring costly WAN circuit upgrades and improving operational efficiency. By prioritizing critical applications, venues ensure that revenue-generating systems — such as POS terminals, ordering apps, and staff communication tools — remain functional even during peak guest usage.

Furthermore, tiered bandwidth models offer direct monetization opportunities. Venues can provide a basic tier of internet access for free, while offering a premium, high-speed tier in exchange for loyalty programme registration, driving measurable ROI from the wireless infrastructure. This model is increasingly common in Hospitality and Transport environments.

For a broader overview of initial deployment considerations, refer to How to Set Up WiFi for Your Business: A Complete Guide.

Key Terms & Definitions

Quality of Service (QoS)

A set of technologies and policies that prioritize certain types of network traffic over others to ensure consistent performance for critical applications during periods of congestion.

Essential for ensuring VoIP calls and POS transactions are not delayed by guest downloads on a shared network.

Traffic Shaping

The practice of regulating the rate of network data transfer to protect a guaranteed level of performance, typically by queueing and delaying packets that exceed a defined rate for a specific application or flow. Packets are dropped only when the queue overflows.

Used at the gateway to throttle high-bandwidth applications like streaming video before they saturate the internet connection.

Per-User Rate Limiting

A policy that caps the maximum upload and download throughput for an individual client device on the network, regardless of the total available bandwidth.

The primary defence against a single user monopolising the wireless network in a public venue. Applied at the AP or WLC level.

Deep Packet Inspection (DPI)

Advanced network packet filtering that examines the data payload of a packet as it passes an inspection point, enabling application-level identification beyond simple port and protocol analysis.

Allows IT teams to throttle 'Netflix' specifically, rather than blindly throttling all HTTPS traffic on port 443.

Airtime Fairness

A wireless feature that allocates equal transmission time to all connected clients, regardless of their theoretical data rate, preventing slower devices from monopolising the radio medium.

Critical in high-density environments. Without it, a single legacy 802.11g device can reduce effective throughput for all modern devices on the same AP.

Differentiated Services Code Point (DSCP)

A 6-bit field in the IP header used to classify network traffic into service classes, enabling routers and switches to apply Quality of Service policies.

The standard Layer 3 method for tagging packets so that switches and routers know which traffic is high priority. DSCP EF is used for VoIP; DSCP CS1 for background traffic.

Wi-Fi Multimedia (WMM)

A Wi-Fi Alliance specification based on the IEEE 802.11e standard that provides four access categories (Voice, Video, Best Effort, Background) for wireless QoS prioritization.

Translates Layer 3 DSCP tags into Layer 2 wireless priorities, ensuring that VoIP packets are transmitted before web browsing packets at the radio level.

Band Steering

A technique used by Access Points to encourage dual-band capable client devices to connect to the less congested 5 GHz or 6 GHz bands instead of the 2.4 GHz band.

Improves overall network capacity and performance by utilizing the wider channels available in higher frequency bands, leaving 2.4 GHz for legacy devices and IoT sensors.

Vendor-Specific Attribute (VSA)

An extension to the RADIUS protocol that allows network vendors to define custom attributes returned during authentication, such as bandwidth limits or VLAN assignments.

Used by platforms like Purple to dynamically assign per-user bandwidth policies at the point of captive portal authentication.

Case Studies

A 500-room hotel is experiencing poor performance on their Point-of-Sale (POS) tablets in the restaurant during the evening, coinciding with peak guest streaming activity in the rooms. The WAN link is 500 Mbps and is running at 90% utilisation during peak hours.

1. Segregate POS traffic onto a dedicated operational VLAN (e.g., VLAN 10), separate from the guest VLAN (VLAN 20).

2. Configure the wireless LAN controller to map POS device traffic to DSCP EF (Expedited Forwarding) and ensure the WMM Voice (VO) queue is used for this SSID.

3. Implement DPI at the edge firewall to identify and throttle streaming video applications (Netflix, YouTube, Disney+) on the guest VLAN to a maximum of 3 Mbps per flow, ensuring standard definition playback while preserving WAN bandwidth.

4. Apply a per-user rate limit of 10 Mbps on the guest SSID to prevent any single device from consuming a disproportionate share of the circuit.

5. Configure the gateway to guarantee a minimum of 50 Mbps for the operational VLAN before any bandwidth is allocated to guest traffic.

A large retail chain with 150 stores wants to offer free WiFi to shoppers but needs to ensure the network isn't abused by neighbouring businesses or residents, and that the connection remains usable for the in-store digital signage system.

1. Deploy a captive portal requiring SMS or email authentication to deter casual abuse and capture customer data for CRM.

2. Apply a strict per-user rate limit of 3 Mbps down / 1 Mbps up on the guest SSID.

3. Implement a session timeout of 2 hours, requiring re-authentication to continue using the service; this prevents all-day squatters.

4. Block known peer-to-peer (P2P) file-sharing protocols at the gateway firewall.

5. Place the digital signage system on a dedicated SSID mapped to a separate VLAN with a guaranteed minimum bandwidth allocation of 20 Mbps, completely isolated from the guest network.

6. Use Purple's analytics platform to monitor peak usage periods and adjust rate limits by time-of-day if required.

Scenario Analysis

Q1. A conference centre expects 2,000 attendees for a technology summit. The WAN connection is 1 Gbps. Assuming a 50% device concurrency rate, what is the maximum per-user rate limit you should configure on the guest SSID, and what additional controls would you implement to protect the WAN link?

💡 Hint: Calculate available bandwidth per concurrent user, then consider that not all users download simultaneously at their maximum rate.

Recommended Approach

With 2,000 attendees and a 50% concurrency rate, expect approximately 1,000 active devices. If every device pulled its full allocation at once, the per-user limit that would exactly fill the 1 Gbps WAN link is 1 Mbps (1,000 Mbps / 1,000 users). Since users do not all download at their peak rate simultaneously, a practical limit of 5–10 Mbps per user is appropriate (an oversubscription ratio of 5:1 to 10:1), combined with DPI-based throttling of streaming video to 3 Mbps per flow and a Scavenger-class policy for bulk downloads. Gateway traffic shaping should reserve a minimum of 100 Mbps for any operational VLANs.
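The arithmetic in this answer can be reproduced with a few lines of Python. The function names are hypothetical, and the model assumes every active device is a guest device sharing whatever capacity is not reserved for operational VLANs.

```python
def fair_share_mbps(wan_mbps: float, attendees: int, concurrency: float,
                    reserved_mbps: float = 0.0) -> float:
    """Per-device share if every concurrent device used its full allocation at once."""
    active_devices = round(attendees * concurrency)
    return (wan_mbps - reserved_mbps) / active_devices


def oversubscription_ratio(per_user_limit_mbps: float, share_mbps: float) -> float:
    """How many times the configured limit exceeds the worst-case fair share."""
    return per_user_limit_mbps / share_mbps


share = fair_share_mbps(wan_mbps=1000, attendees=2000, concurrency=0.5)
print(share)                              # 1.0
print(oversubscription_ratio(5, share))   # 5.0
print(oversubscription_ratio(10, share))  # 10.0

# With 100 Mbps reserved for operational VLANs, the guest share drops slightly.
print(fair_share_mbps(1000, 2000, 0.5, reserved_mbps=100))  # 0.9
```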

Q2. You are deploying a new wireless network in a hospital. Clinical staff use VoIP communication badges that operate on the 5 GHz band. How should you configure the QoS policies at both the wireless (Layer 2) and wired (Layer 3) layers to ensure reliable communication?

💡 Hint: Consider both the wireless (Layer 2 WMM) and wired (Layer 3 DSCP) prioritization mechanisms, and ensure they are consistent end-to-end.

Recommended Approach

Configure the VoIP badges or their associated SSID to tag outbound traffic with DSCP EF (46). On the WLC and APs, map DSCP EF to the WMM Voice (VO) hardware queue. Ensure that the wired switches connecting the APs to the distribution layer are configured to trust DSCP markings from the AP uplink ports, and that the voice traffic is placed into the priority queue across the entire LAN. At the gateway, guarantee a minimum bandwidth allocation for the clinical VLAN. See also the guidance in WiFi in Hospitals: A Guide to Secure Clinical Networks for additional clinical-specific considerations.

Q3. A retail store manager reports that guest WiFi is slow, but monitoring shows the WAN link is only at 20% utilisation. The AP in the main seating area has 150 associated clients, many of which are older smartphones. What is the likely root cause and the recommended remediation?

💡 Hint: The issue is not raw bandwidth availability; it is wireless medium contention at the radio layer.

Recommended Approach

The AP is suffering from airtime congestion, exacerbated by older 802.11b/g devices transmitting at slow data rates (1–11 Mbps). Each slow transmission occupies the radio medium for a disproportionately long time, reducing the effective throughput for all other clients. The solution is: (1) enable Airtime Fairness to allocate equal transmission time rather than equal data volume; (2) disable data rates below 12 Mbps on the 5 GHz radio to force clients to transmit faster or roam to a closer AP; (3) review the AP placement and consider adding additional APs to reduce the client load per radio below 50 active devices.