Generative AI for Captive Portal Copy and Creative

This technical reference guide details how enterprise IT and marketing teams can leverage Generative AI to rapidly draft, deploy, and A/B test captive portal copy and creative. It provides a practical workflow for integrating LLM-generated variants with the Purple portal builder to optimise guest WiFi conversion rates while maintaining brand safety and network performance. Venue operators across hospitality, retail, and events will find concrete implementation steps, worked examples, and guardrails to deploy this capability this quarter.

Executive Summary

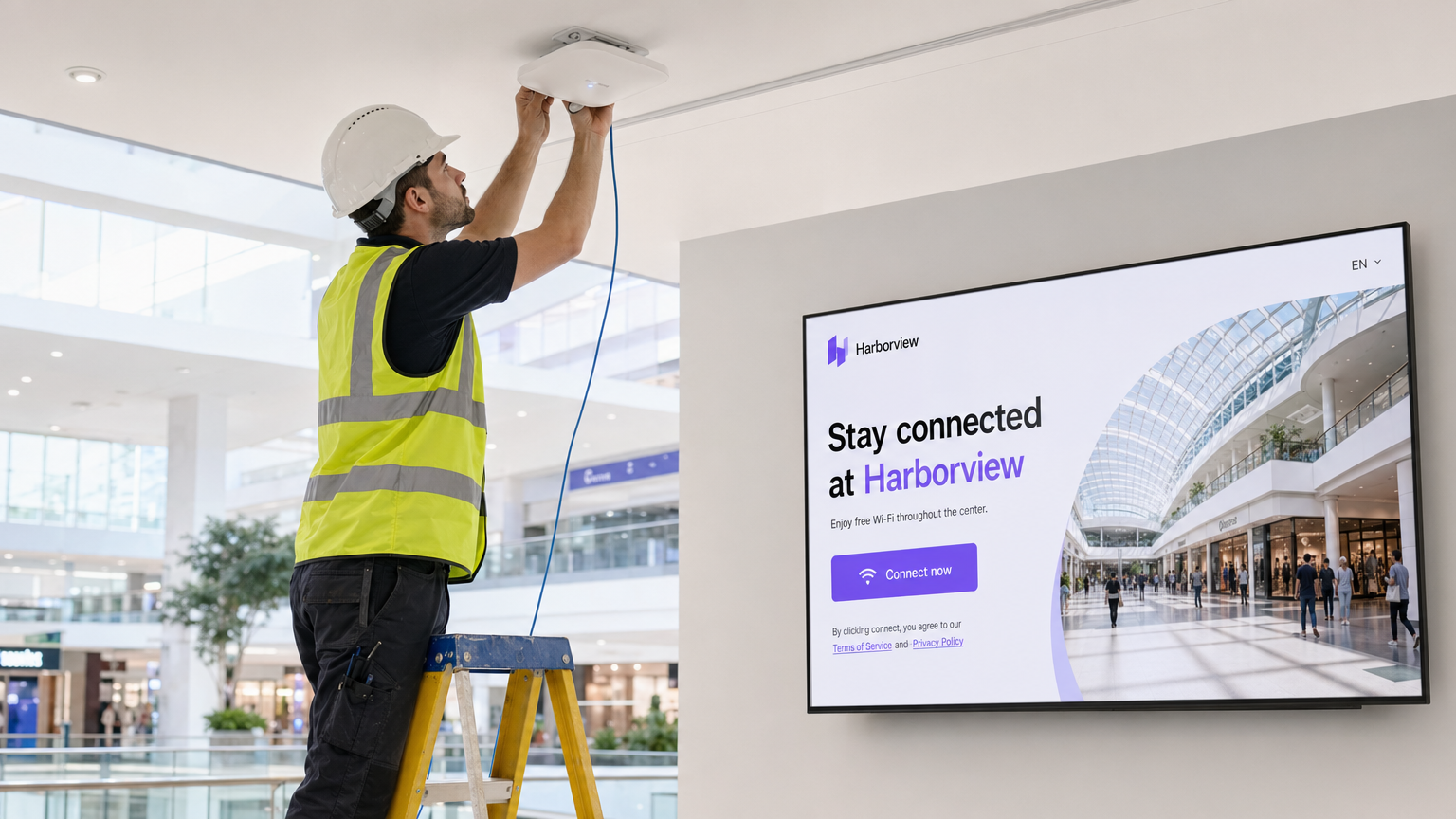

For enterprise venues, from expansive retail environments to complex hospitality deployments, the captive portal is a critical first-party data collection touchpoint. Historically, updating portal copy and creative has been a bottleneck, requiring IT intervention for every marketing request. In 2026, Generative AI is transforming this workflow. Marketing teams are now using large language models (LLMs) to draft landing page copy, promotional offers, and creative variants, which are then deployed directly via the Purple portal builder for rigorous A/B testing.

This guide outlines the technical architecture, implementation steps, and brand-safety guardrails required to successfully deploy AI captive portal copy at scale. The core principle is straightforward: the AI operates entirely offline as a drafting engine, while the live portal is served via standard, optimised HTML/CSS on Purple's edge infrastructure. This means there is no impact on network throughput or portal load times. Marketing teams gain the ability to iterate rapidly on messaging; IT teams retain full control over the underlying infrastructure.

Technical Deep-Dive

When a client device connects to a public SSID, the network infrastructure intercepts the device's initial HTTP request, typically a probe to a captive portal detection URL such as connectivitycheck.gstatic.com or captive.apple.com, and redirects the user to the portal splash page. This redirect must complete in milliseconds to prevent timeouts and ensure a seamless user experience, particularly in high-density environments like stadiums or transport hubs where hundreds of concurrent associations are common.

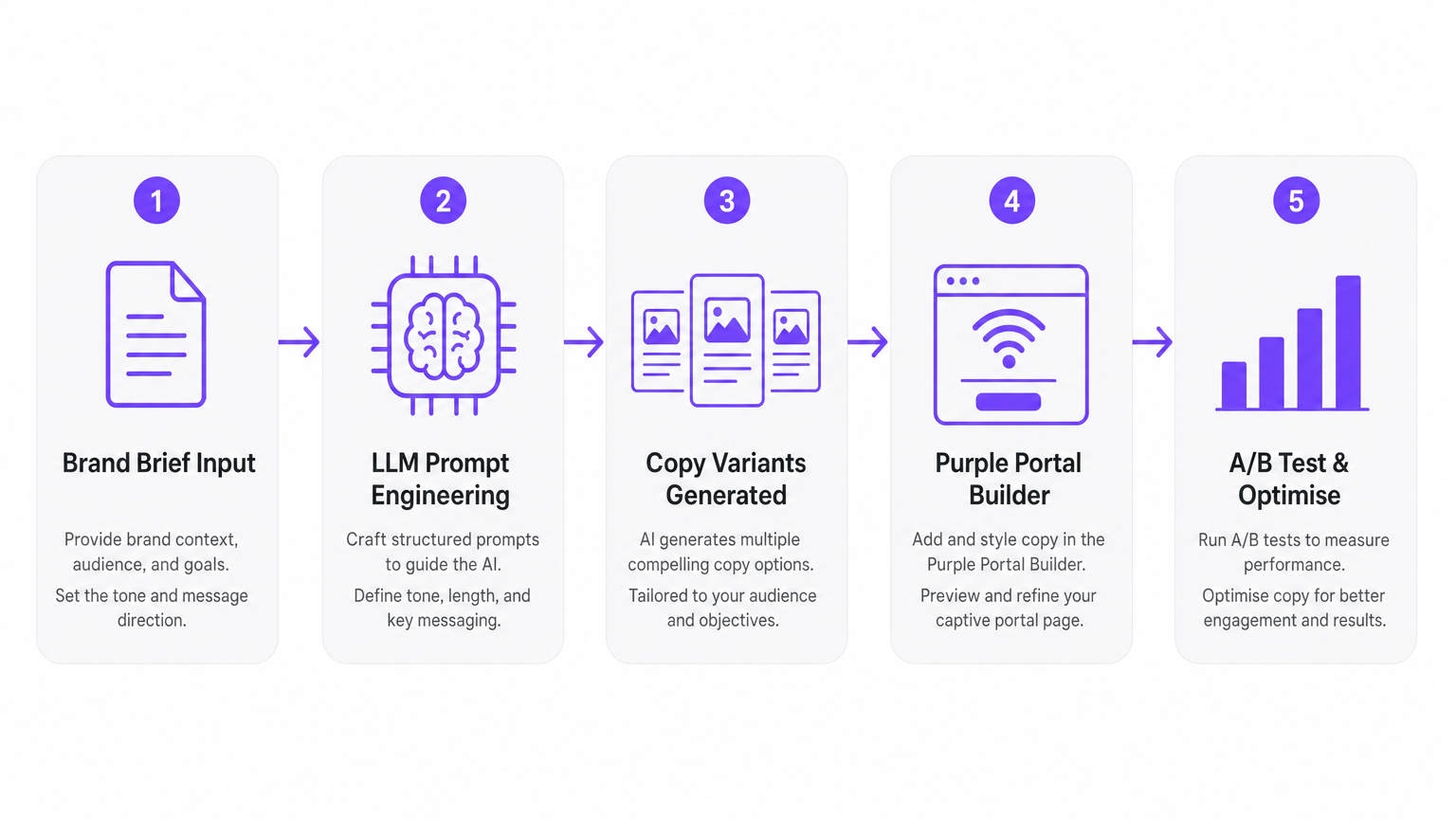

The integration of Generative AI occurs before this real-time interaction. The AI is not generating copy on the fly as the user connects; rather, it functions as an offline drafting engine. Marketing teams input structured prompts into an LLM — such as OpenAI's GPT-4.1 or Anthropic's Claude 3.5 — to generate multiple variants of headlines, body copy, and calls-to-action (CTAs). These variants are then reviewed by a human, approved, and loaded into the Purple portal builder as distinct A/B test variants.

The five-stage workflow is as follows:

| Stage | Owner | Action |
|---|---|---|
| 1. Brand Brief Input | Marketing | Define tone, offer, character limits, and audience |
| 2. LLM Prompt Engineering | Marketing | Craft structured prompts and generate variants |
| 3. Human Review | Brand/Compliance | Approve, reject, or edit AI-generated copy |
| 4. Portal Builder Deployment | Marketing (no IT required) | Input variants into Purple portal builder |
| 5. A/B Test and Optimise | Marketing + Analytics | Monitor metrics and deploy winning variant |

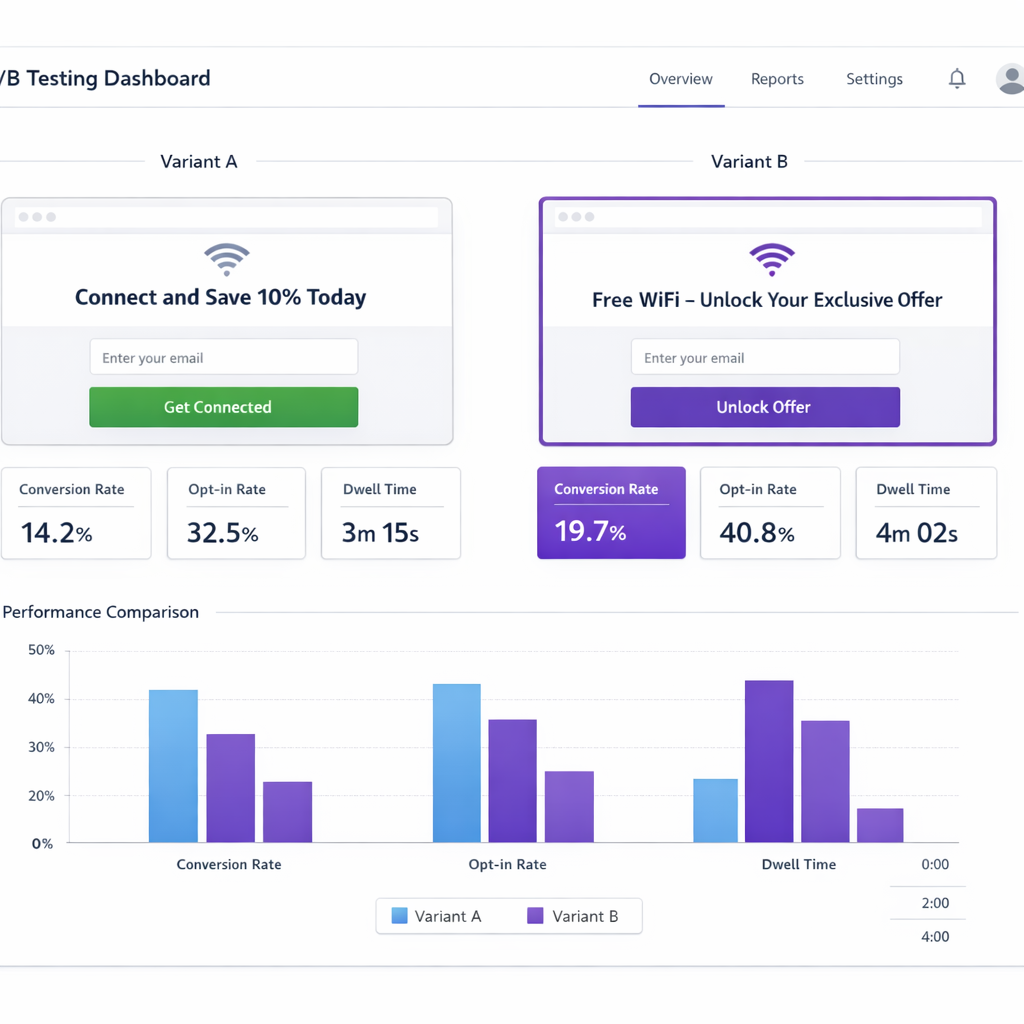

When deploying these variants, the Purple platform handles traffic allocation (for example, a 50/50 split for a standard A/B test) and tracks performance metrics including opt-in rate, conversion rate, and dwell time. The data collected via the portal flows into WiFi Analytics, allowing teams to measure the impact of the AI-generated copy with statistical rigour.

From a network architecture perspective, this workflow is entirely transparent to the infrastructure layer. The portal pages are pre-rendered HTML/CSS assets. There is no runtime LLM inference occurring on the network path. This ensures compliance with performance requirements and does not introduce any new attack surface or latency into the authentication flow. For context on how captive portals interact with the underlying network protocols, refer to "WISPr and Captive Portal Auto-Login: What Still Works in 2026".

Implementation Guide

Deploying a GenAI workflow for captive portals requires a structured approach to prompt engineering, human review, and deployment cadence. The brief, prompt, and review steps below are vendor-neutral; the deployment and monitoring steps are described for the Purple portal builder.

Step 1: Define the Brand Brief and Constraints

Before touching an LLM, document the constraints. This includes the brand voice (e.g., 'professional and welcoming, not casual'), maximum character counts for headlines (typically 40–60 characters for mobile legibility), the specific offer or value proposition, and any prohibited phrases or claims. This brief becomes the foundation of every prompt.
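
The brief can also be captured as structured data so that every generated headline is checked against it before human review. The following is a minimal sketch, not part of any Purple or LLM vendor API: the `BrandBrief` class, the `check_headline` function, and the example prohibited phrases are all hypothetical names chosen for illustration.

```python
from dataclasses import dataclass, field


@dataclass
class BrandBrief:
    """Hypothetical container for the constraints described in Step 1."""
    voice: str
    offer: str
    max_headline_chars: int = 60
    prohibited_phrases: list[str] = field(default_factory=list)


def check_headline(headline: str, brief: BrandBrief) -> list[str]:
    """Return a list of problems; an empty list means the headline passes."""
    problems = []
    if len(headline) > brief.max_headline_chars:
        problems.append(
            f"too long: {len(headline)} > {brief.max_headline_chars} characters"
        )
    lowered = headline.lower()
    for phrase in brief.prohibited_phrases:
        if phrase.lower() in lowered:
            problems.append(f"contains prohibited phrase: {phrase!r}")
    return problems


# Example brief; the values are illustrative assumptions.
brief = BrandBrief(
    voice="professional and welcoming, not casual",
    offer="15% discount",
    max_headline_chars=40,
    prohibited_phrases=["guaranteed", "free upgrade"],
)

print(check_headline("Join our WiFi and save 15% today", brief))
print(check_headline("Guaranteed free upgrade for every guest who connects", brief))
```

A check like this only filters mechanical violations. It does not replace the human review described in Step 3.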

Step 2: Craft the Prompt Architecture

A high-performing prompt for portal copy generation follows this structure:

"Act as a senior conversion copywriter. Generate [N] distinct headline variants for a [venue type] guest WiFi captive portal. The goal is to drive [specific action, e.g., email opt-in] in exchange for [specific offer, e.g., 15% discount]. Each headline must be under [X] characters. Tone: [brand voice]. Do not use the following phrases: [prohibited list]. Output as a numbered list."

The more specific the constraints, the more deployable the output. Vague prompts produce vague copy.
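
One way to keep prompts consistent across campaigns is to fill the template above from named parameters rather than editing it by hand. This is a sketch only: `build_headline_prompt` is a hypothetical helper, and it produces the prompt text without calling any LLM API.

```python
def build_headline_prompt(
    n_variants: int,
    venue_type: str,
    action: str,
    offer: str,
    max_chars: int,
    voice: str,
    prohibited: list[str],
) -> str:
    """Fill the headline prompt template with concrete campaign values."""
    banned = "; ".join(prohibited) if prohibited else "none"
    return (
        "Act as a senior conversion copywriter. "
        f"Generate {n_variants} distinct headline variants for a {venue_type} "
        "guest WiFi captive portal. "
        f"The goal is to drive {action} in exchange for {offer}. "
        f"Each headline must be under {max_chars} characters. "
        f"Tone: {voice}. "
        f"Do not use the following phrases: {banned}. "
        "Output as a numbered list."
    )


prompt = build_headline_prompt(
    n_variants=5,
    venue_type="hotel",
    action="email opt-in",
    offer="a 15% discount",
    max_chars=40,
    voice="professional and welcoming, not casual",
    prohibited=["guaranteed", "free upgrade"],
)
print(prompt)
```

Storing the parameter values alongside the generated variants makes it easier to trace which constraints produced which copy.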

Step 3: Human Review — Non-Negotiable

Every AI-generated variant must pass through a human review before deployment. The reviewer checks for factual accuracy (can the venue actually fulfil the offer?), brand alignment, and GDPR compliance (does the copy imply data collection that is not configured in the portal?).

Step 4: Deploy via Purple Portal Builder

Input the approved variants into the Purple portal builder. Create an A/B test campaign with even traffic allocation. Set a minimum test duration — typically 7–14 days for venues with consistent daily footfall — to achieve statistical significance.
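
To sanity-check a planned test duration, a rough estimate of the visitors needed per variant can be derived from the baseline rate and the smallest improvement worth detecting. The sketch below uses the standard normal-approximation formula for comparing two proportions; the function names, the 5% significance level, and the 80% power default are assumptions for illustration and say nothing about how Purple's platform evaluates tests.

```python
import math
from statistics import NormalDist


def visitors_per_variant(p_base: float, p_target: float,
                         alpha: float = 0.05, power: float = 0.8) -> int:
    """Approximate sample size per variant for a two-sided two-proportion test."""
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)
    z_beta = NormalDist().inv_cdf(power)
    variance = p_base * (1 - p_base) + p_target * (1 - p_target)
    n = (z_alpha + z_beta) ** 2 * variance / (p_target - p_base) ** 2
    return math.ceil(n)


def days_needed(n_per_variant: int, daily_portal_views: int,
                n_variants: int = 2) -> int:
    """Days required when traffic is split evenly across the variants."""
    per_variant_per_day = daily_portal_views / n_variants
    return math.ceil(n_per_variant / per_variant_per_day)


# Example: detect a lift from a 20% to a 25% opt-in rate at 500 views per day.
n = visitors_per_variant(0.20, 0.25)
print(n, days_needed(n, daily_portal_views=500))
```

The result is a floor on visitor count and is best read alongside the 7 to 14 day guidance above rather than as a replacement for it.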

Step 5: Monitor and Iterate

Use Purple's analytics dashboard to track conversion rates, opt-in rates, and time-to-connect. Critically, monitor network metrics alongside marketing metrics. A portal page that converts well but causes connection timeouts is not a success.
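
When the test window closes, one common way to judge whether the difference in conversion rates is larger than chance would explain is a pooled two-proportion z-test. The following is a minimal sketch under that assumption; `two_proportion_p_value` is a hypothetical helper, the counts are invented for illustration, and the analytics dashboard may use a different method.

```python
import math
from statistics import NormalDist


def two_proportion_p_value(conv_a: int, n_a: int, conv_b: int, n_b: int) -> float:
    """Two-sided p-value for the difference between two conversion rates."""
    p_a, p_b = conv_a / n_a, conv_b / n_b
    pooled = (conv_a + conv_b) / (n_a + n_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if se == 0:
        # Both variants converted nobody or everybody: no evidence of a difference.
        return 1.0
    z = (p_b - p_a) / se
    return 2 * (1 - NormalDist().cdf(abs(z)))


# Illustrative counts: 200/1000 conversions for A versus 250/1000 for B.
p = two_proportion_p_value(200, 1000, 250, 1000)
print(f"p-value: {p:.4f}", "significant" if p < 0.05 else "not significant")
```

The same rates observed on a tenth of the traffic (20/100 versus 25/100) would not clear the 0.05 threshold, which is why declaring a winner early is risky.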

Best Practices

Several principles consistently separate high-performing GenAI portal deployments from those that fail to deliver measurable results.

Constrain the AI aggressively. The LLM's job is not to be creative in an open-ended sense; it is to produce short, specific, actionable copy within tight parameters. Character limits, tone guidelines, and prohibited phrase lists are not optional extras — they are the core of effective prompt engineering.

Test one variable at a time. When running an A/B test, change only a single element — the headline, the CTA button text, or the background image — not multiple elements simultaneously. If you change three things at once, you cannot attribute the performance difference to any specific change. This is the most common mistake made by teams new to portal optimisation.

Prioritise mobile legibility. The vast majority of captive portal interactions occur on mobile devices. AI-generated copy that reads well on a desktop preview may be truncated or illegible on a 375px-wide smartphone screen. Always review variants on a mobile device before deployment.

Maintain a copy library. Store all AI-generated variants — including those that were rejected or underperformed — in a structured library. Over time, this library reveals patterns about what messaging resonates with your specific audience, which in turn improves the quality of future prompts.

Align copy with data collection configuration. If the portal is configured to collect email addresses only, the copy must not imply SMS delivery, phone-based verification, or any other data type. Misalignment between copy promises and technical configuration is a GDPR compliance risk.
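
A simple keyword scan can flag copy that appears to promise data types the portal is not configured to collect. This is a rough heuristic sketch to support the reviewer, not a compliance tool: the `IMPLIED_BY` mapping, the field names, and the `unconfigured_data_implied` function are all hypothetical, and the substring matching will produce false positives (for example, "phone" also matches "smartphone").

```python
# Hypothetical mapping from data types to words that imply their collection.
IMPLIED_BY = {
    "phone": ["sms", "text message", "phone", "mobile number"],
    "date_of_birth": ["birthday", "date of birth"],
    "postal_address": ["postcode", "home address"],
}


def unconfigured_data_implied(copy: str,
                              configured_fields: set[str]) -> dict[str, list[str]]:
    """Return data types the copy seems to imply but the portal does not collect."""
    lowered = copy.lower()
    flagged = {}
    for data_type, keywords in IMPLIED_BY.items():
        if data_type in configured_fields:
            continue
        hits = [kw for kw in keywords if kw in lowered]
        if hits:
            flagged[data_type] = hits
    return flagged


# Portal collects email only, but the copy promises SMS delivery.
print(unconfigured_data_implied("Get your code by SMS", {"email"}))
```

Any flagged variant would go back to the reviewer; an empty result does not prove the copy is compliant.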

Troubleshooting & Risk Mitigation

The primary risk associated with using GenAI for captive portal copy is brand safety. LLMs can generate plausible-sounding but factually incorrect or inappropriate content — a phenomenon known as hallucination. A hotel that inadvertently deploys AI-generated copy promising a free room upgrade to every WiFi user will face significant operational and reputational consequences. The mitigation is straightforward: never automate the publishing step. Always maintain a human-in-the-loop approval process.

A secondary risk is compliance drift. This occurs when AI-generated copy makes data collection promises that are inconsistent with the venue's actual data privacy policy or the technical configuration of the portal. For example, copy that says "Enter your details for personalised offers" may imply profiling that requires explicit GDPR consent beyond a simple opt-in checkbox. Marketing teams must work with their legal and compliance functions to define the boundaries of permissible copy before prompts are written.

A third operational risk is page weight and load time. If a new portal variant includes large hero images or complex layouts generated by AI creative tools, it may increase the page load time to the point where users experience connection timeouts, particularly on older devices or in areas with marginal signal strength. Always test new portal designs on a representative range of devices before full deployment. Monitor the time-to-connect metric in Purple's analytics dashboard as a leading indicator of this issue.
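
Before device testing, the total weight of a variant's assets can be checked against a budget as a quick first filter. The sketch below assumes the variant's files have been exported to a local directory; the function names and any byte budget are illustrative assumptions, since the text does not specify a limit.

```python
from pathlib import Path


def page_weight(asset_dir: str) -> dict[str, int]:
    """Return the size in bytes of every file under asset_dir, keyed by relative path."""
    root = Path(asset_dir)
    return {
        str(p.relative_to(root)): p.stat().st_size
        for p in sorted(root.rglob("*"))
        if p.is_file()
    }


def over_budget(sizes: dict[str, int], budget_bytes: int) -> bool:
    """True when the combined asset size exceeds the chosen budget."""
    return sum(sizes.values()) > budget_bytes


def heaviest(sizes: dict[str, int], top: int = 3) -> list[tuple[str, int]]:
    """List the largest assets first, as candidates for compression."""
    return sorted(sizes.items(), key=lambda item: item[1], reverse=True)[:top]
```

A usage example would call `page_weight("exported_variant/")`, compare the result with a budget agreed with the network team, and use `heaviest` to find images worth compressing. A check like this complements, and does not replace, testing on real devices and monitoring time-to-connect.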

Finally, consider A/B test contamination. If the same user visits the venue multiple times during a test period, they may see different variants on different visits, which can skew the results. Purple's portal builder handles session management to mitigate this, but it is worth understanding the test methodology when interpreting results.
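
To illustrate the general idea behind keeping a returning visitor on one variant, the sketch below assigns variants by hashing a stable visitor identifier together with a test identifier. This is a generic technique and not a description of how Purple's portal builder implements session management; `assign_variant`, `visitor_id`, and `test_id` are hypothetical names, and the approach assumes the identifier stays the same across visits, which randomised device MAC addresses can undermine.

```python
import hashlib


def assign_variant(visitor_id: str, test_id: str, variants: list[str]) -> str:
    """Map a visitor to the same variant on every visit for a given test."""
    digest = hashlib.sha256(f"{test_id}:{visitor_id}".encode("utf-8")).digest()
    # Use the first 8 bytes of the hash as an integer bucket.
    bucket = int.from_bytes(digest[:8], "big") % len(variants)
    return variants[bucket]


variants = ["A", "B"]
first_visit = assign_variant("visitor-123", "breakfast-offer", variants)
second_visit = assign_variant("visitor-123", "breakfast-offer", variants)
print(first_visit == second_visit)  # True: the assignment is deterministic
```

Including the test identifier in the hash means a visitor who lands in variant A for one test is not systematically placed in variant A for the next.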

ROI & Business Impact

Implementing a GenAI workflow for captive portal copy delivers measurable ROI across two dimensions: operational efficiency and conversion performance.

On the efficiency side, the primary gain is the decoupling of content updates from IT operations. In a traditional workflow, every portal copy change requires a ticket to the IT team, a development cycle, and a deployment. With a GenAI workflow, marketing can draft, review, and deploy new variants in hours rather than weeks. For a large retail chain running seasonal promotions, this translates directly into faster time-to-market for offers.

On the conversion side, the ability to run continuous A/B tests means that portal performance improves iteratively over time. Industry benchmarks suggest that optimised captive portal copy can increase WiFi opt-in rates by 15–30% compared to static, unoptimised pages. For a venue with 10,000 daily WiFi users and a baseline opt-in rate of 20%, a 5-percentage-point improvement (from 20% to 25%, a 25% relative lift) translates to 500 additional marketable contacts per day, or roughly 182,500 additional contacts over a 365-day year.
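
The arithmetic in the worked example can be reproduced for any venue by changing the inputs. This is a small sketch; `additional_contacts` is a hypothetical helper, and it assumes every day of the year has the same footfall.

```python
def additional_contacts(daily_users: int, baseline_rate: float,
                        improved_rate: float, days: int = 365) -> tuple[int, int]:
    """Return (extra contacts per day, extra contacts over the period)."""
    extra_per_day = round(daily_users * (improved_rate - baseline_rate))
    return extra_per_day, extra_per_day * days


per_day, per_year = additional_contacts(10_000, 0.20, 0.25)
print(per_day, per_year)  # 500 182500
```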

For healthcare facilities and public-sector organisations, the ROI calculation extends beyond marketing metrics to include patient or citizen engagement, service awareness, and the quality of first-party data available for service planning. The Guest WiFi platform provides the infrastructure to capture and activate this data at scale.

Key Terms & Definitions

Captive Portal

A web page that a connecting device is automatically redirected to before being granted access to a public WiFi network. It typically requires the user to accept terms of service, authenticate, or provide contact details.

The captive portal is the primary digital touchpoint for guest WiFi. It is where marketing data capture occurs and where AI-generated copy has the most direct impact on opt-in rates.

Generative AI (GenAI)

A class of artificial intelligence systems capable of generating novel text, images, or other media in response to structured natural language prompts. Large language models (LLMs) such as GPT-4 and Claude are the primary tools used for copy generation.

Used by marketing teams as an offline drafting engine to rapidly produce multiple variants of portal copy for A/B testing, without requiring IT involvement.

A/B Testing

A controlled experiment in which two or more variants of a web page are served to randomly allocated segments of users to determine which variant achieves a higher rate of a target action (e.g., email opt-in).

The primary method for measuring the performance of AI-generated portal copy variants. Requires a minimum sample size and test duration to achieve statistical significance.

Prompt Engineering

The practice of structuring natural language instructions to guide a generative AI model towards producing a specific, constrained output. Effective prompts for portal copy specify tone, length, audience, offer, and prohibited content.

The quality of the prompt directly determines the deployability of the AI output. Vague prompts produce vague copy; constrained prompts produce actionable variants.

Conversion Rate

The percentage of users who complete a desired action — such as submitting an email address — out of the total number of users who viewed the portal page.

The primary metric used to evaluate the performance of AI-generated portal copy variants in an A/B test.

Hallucination

A phenomenon in which a generative AI model produces plausible-sounding but factually incorrect, fabricated, or inappropriate content.

The primary brand safety risk of using GenAI for portal copy. Mitigated by mandatory human review before any AI-generated variant is deployed to the live portal.

WISPr (Wireless Internet Service Provider Roaming)

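A Wi-Fi Alliance specification, originally written for roaming between wireless ISPs, that describes how a client can recognise a captive portal login page and submit credentials automatically using XML embedded in the portal's redirect response. Separately, operating systems send HTTP requests to known detection URLs; if such a request is intercepted, the network redirects the client to the portal page.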

Understanding WISPr is important for diagnosing portal detection issues and ensuring that new portal variants load correctly across all device types and operating systems.

Opt-in Rate

The percentage of users who explicitly consent to receive marketing communications during the WiFi login process, typically by providing an email address and ticking a consent checkbox.

A key performance indicator for marketing teams using the captive portal to build their first-party CRM database. Directly impacted by the quality and relevance of the portal copy.

Statistical Significance

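A result is statistically significant when the observed difference in performance between two A/B test variants would be unlikely to arise from random variation alone. It is typically assessed with a p-value, the probability of seeing a difference at least this large if the variants truly performed the same; a threshold of 0.05 is conventional.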

A/B tests on captive portals must run for a sufficient duration and accumulate enough data points to achieve statistical significance before a winner is declared. Declaring a winner too early is a common mistake.

Human-in-the-Loop

A workflow design in which a human reviewer is a mandatory step in an automated or AI-assisted process, providing oversight and approval before outputs are acted upon.

The non-negotiable safeguard in any GenAI portal copy workflow. Ensures that AI-generated content is reviewed for brand safety, factual accuracy, and compliance before deployment.

Case Studies

A 200-room boutique hotel wants to increase breakfast upsells via their guest WiFi portal. The marketing team wants to run weekly offer changes, but the IT team is at capacity and cannot support frequent HTML updates.

The marketing manager uses a predefined LLM prompt template — specifying the hotel's brand voice, a 40-character headline limit, and the specific breakfast offer — to generate three distinct copy variants in under 10 minutes. After a quick review by the brand manager to confirm the offer details are accurate and GDPR-compliant, the three variants are input into the Purple portal builder. An A/B/C test is configured with 33% traffic allocation to each variant. The IT team is not involved in the update, as the underlying HTML structure and network configuration remain unchanged. After 10 days, the analytics dashboard shows Variant B ('Start your morning right — breakfast included') has a 23% higher opt-in rate than the control. The winning variant is deployed as the new default.

A large multi-use stadium needs to deploy contextually relevant portal copy for a music concert on Friday and a sporting event on Saturday. The venue operations team has 48 hours between events to update the portal.

The venue operations team maintains two pre-configured portal templates in the Purple portal builder — one for live music events and one for sporting events. For each event, they use a GenAI prompt to generate event-specific copy variants (e.g., 'Welcome to the Rock Tour — connect for set times and merch deals' versus 'Connect for live match stats and in-seat ordering'). The AI drafts three variants for each template in minutes. After human review, the approved variants are loaded into the respective templates. A scheduled cutover in the Purple dashboard switches the active portal template two hours before each event begins. Post-event analytics from the WiFi platform are used to compare opt-in rates across event types, informing future prompt refinement.

Scenario Analysis

Q1. A marketing manager at a large retail chain wants to use an AI-generated copy variant that promises 'Win a £500 shopping voucher — connect to enter!' on the captive portal. The IT team has not configured any competition entry mechanism in the portal. What are the immediate risks, and what should the review process catch?

💡 Hint: Consider the concepts of AI hallucination, brand safety, and the alignment between copy promises and technical portal configuration.

Recommended Approach

The immediate risks are twofold. First, brand safety: the AI has generated a compelling but undeliverable offer. If deployed, users will connect expecting to enter a competition that does not exist, resulting in reputational damage and potential consumer protection issues. Second, compliance: if the portal is not configured to capture the additional data required for a competition entry (e.g., full name, age verification), the copy is making a promise the technical system cannot fulfil. The human review step must catch this by cross-referencing the copy against the actual portal configuration and the marketing team's confirmed campaign plan. This is a textbook example of why the 'Draft with AI, Publish with Humans' rule is non-negotiable.

Q2. You are running an A/B test on a new captive portal design for a conference centre. Variant A has a new AI-generated headline ('Connect. Collaborate. Succeed.') and a purple CTA button. Variant B has the original headline ('Free WiFi — Connect Now') and a green CTA button. After 5 days, Variant A shows a 12% higher conversion rate. Can you conclude that the new headline is responsible for the improvement?

💡 Hint: Apply the 'Test One, Not a Ton' principle.

Recommended Approach

No. Because two variables were changed simultaneously — the headline and the CTA button colour — it is impossible to attribute the 12% improvement to either change specifically. The improvement could be entirely due to the button colour change, entirely due to the headline, or a combination of both. To determine which element is responsible, the test must be redesigned to isolate a single variable. Run Variant A (new headline, same green button) against the control, then separately test the button colour. Additionally, 5 days may not be sufficient to achieve statistical significance for a conference centre with variable daily footfall — the test duration should be extended.

Q3. A venue operations director reports that after deploying a new AI-generated portal page for a stadium event, the IT helpdesk received a spike in complaints that users 'couldn't connect to the WiFi'. The marketing team reports the new page has a high conversion rate among users who do successfully load it. What is the likely technical cause, and how should it be resolved?

💡 Hint: Consider the relationship between portal page weight, load time, and the captive portal detection timeout on mobile devices.

Recommended Approach

The most likely cause is that the new portal page is too heavy — it likely includes large, unoptimised images or complex layout elements generated by the AI creative workflow — causing the page to time out before it fully loads on mobile devices or in areas of the stadium with marginal signal coverage. The captive portal detection mechanism on iOS and Android has a short timeout window; if the page does not load within this window, the device may report that the network requires sign-in but then fail to display the portal, leaving the user unable to connect. The resolution is to immediately roll back to the previous portal page, then optimise the new page by compressing images, minifying CSS, and testing load times on a representative range of devices before redeployment. Network metrics — specifically time-to-connect — should always be monitored alongside marketing conversion metrics.