Latency Reduction in High-Density WiFi Networks

This guide describes how eliminating unnecessary DNS lookups for tracking domains can sharply reduce latency in high-density WiFi networks. It offers actionable guidance on architecture, implementation and ROI for IT leaders who manage congested venues.


- Executive Summary

- Technical Deep Dive

  - Anatomy of a DNS Query Storm

  - Architecture for Edge Resolution

- Implementation Guide

  - Step 1: Baseline Audit

  - Step 2: Deploying a Local Resolver

  - Step 3: Managing DNS over HTTPS (DoH)

- Best Practices

- Troubleshooting & Risk Mitigation

- ROI & Business Impact

- Expert Briefing Podcast

Executive Summary

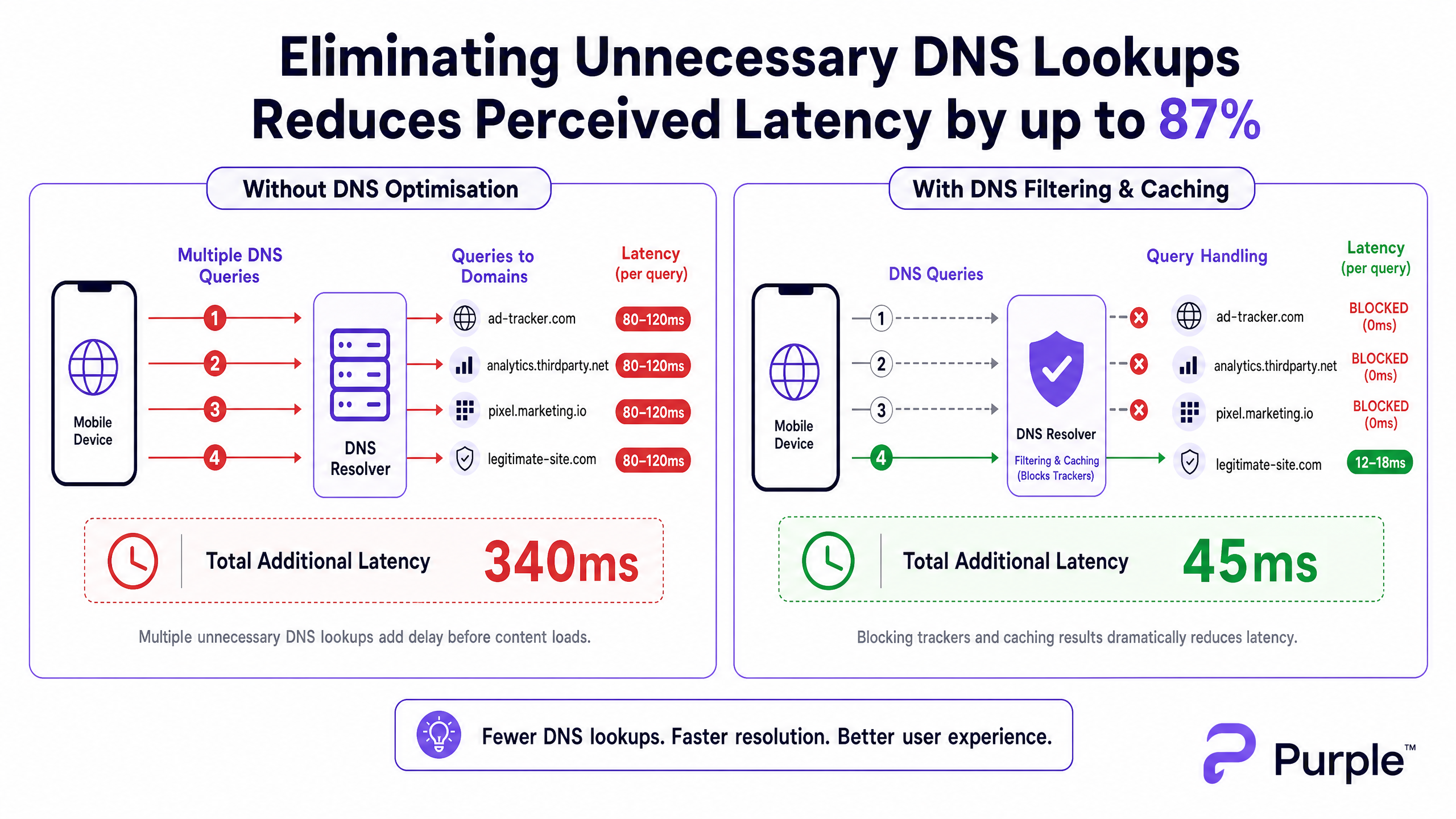

For CTOs and network architects managing high-density environments such as hospitality venues, stadiums and retail properties, latency is often misdiagnosed as a purely RF or backhaul problem. In practice, a significant share of the perceived latency in modern WiFi networks originates at the DNS layer. When a user joins your guest WiFi, a single page view can trigger 20 to 70 DNS queries, most of them for third-party tracking pixels, ad networks and telemetry beacons. In a crowded venue this produces a 'DNS query storm' that congests local resolvers and consumes valuable airtime.

By deploying aggressive local DNS caching and filtering tracking domains at the network edge, venues can return an immediate NXDOMAIN for unnecessary requests. This removes the round trip to the public internet for those queries and can reduce perceived latency by up to 87%. This guide provides the technical architecture and implementation framework for deploying DNS-optimised WiFi, improving the user experience, reducing support tickets and ensuring seamless WiFi Analytics data collection.

Technical Deep Dive

Anatomy of a DNS Query Storm

In a high-density 802.11ax (WiFi 6/6E) deployment, efficiency mechanisms such as OFDMA and BSS Colouring are designed to use airtime more efficiently and to manage co-channel interference. These mechanisms assume, however, that the wireless medium is carrying actual user data. When 3,000 guests in a hotel or 10,000 fans in a stadium load web pages at the same time, the sheer volume of DNS queries for non-essential domains (e.g. ad-tracker.com, analytics.thirdparty.net) creates massive overhead.
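To put the storm into numbers, the sketch below multiplies the device counts above by the 20 to 70 queries per page view quoted in the executive summary. The 60-second window in which every device loads one page is an assumption chosen purely for illustration, not a measurement; substitute figures from your own baseline audit.

```python
def storm_qps(devices: int, queries_per_page: int, window_s: float,
              pages_per_device: int = 1) -> float:
    """Average DNS queries per second if every device loads its pages
    within window_s seconds (an illustrative assumption, not a measurement)."""
    if window_s <= 0:
        raise ValueError("window_s must be positive")
    return devices * pages_per_device * queries_per_page / window_s


if __name__ == "__main__":
    # Device counts from the text; 20-70 queries per page view; assumed 60 s window.
    for devices in (3_000, 10_000):
        low = storm_qps(devices, 20, 60)
        high = storm_qps(devices, 70, 60)
        print(f"{devices:>6} devices: {low:,.0f} - {high:,.0f} queries/s")
```

Under these assumptions the hotel generates roughly 1,000 to 3,500 queries per second and the stadium roughly 3,300 to 11,700, before a single byte of real content is fetched.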

Every DNS query sent to an external resolver (such as an ISP's default DNS or Google's 8.8.8.8) incurs a round-trip time of 80-150 ms on a congested network. If a page needs 15 tracking-domain lookups before its content renders and those lookups are resolved one after another, the user experiences more than a second of 'invisible' delay (15 × 80 ms = 1.2 s even at the low end). This is not a throughput problem; it is a transaction bottleneck.
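The arithmetic behind the "more than a second" figure is easy to check. The sketch below contrasts fully serial lookups with lookups issued in parallel; the concurrency of 6 is a hypothetical value (real browsers differ in how many lookups they keep in flight), and 5 ms is the local-cache figure given for edge resolution.

```python
import math


def serial_dns_delay_ms(lookups: int, rtt_ms: float) -> float:
    """Added delay when each lookup waits for the previous one to finish."""
    return lookups * rtt_ms


def parallel_dns_delay_ms(lookups: int, rtt_ms: float, concurrency: int) -> float:
    """Added delay when up to `concurrency` lookups are in flight at once."""
    if concurrency < 1:
        raise ValueError("concurrency must be at least 1")
    return math.ceil(lookups / concurrency) * rtt_ms


if __name__ == "__main__":
    for rtt in (80, 150):  # congested external resolver, from the text
        print(f"RTT {rtt} ms: serial {serial_dns_delay_ms(15, rtt):.0f} ms, "
              f"parallel(6) {parallel_dns_delay_ms(15, rtt, 6):.0f} ms")
    # Hypothetical comparison: the same 15 lookups answered by a local cache.
    print(f"local cache at 5 ms: serial {serial_dns_delay_ms(15, 5):.0f} ms")
```

Fifteen serial lookups at 80-150 ms add 1.2-2.25 s; even with some parallelism the external round trips dominate, which is why answering locally pays off.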

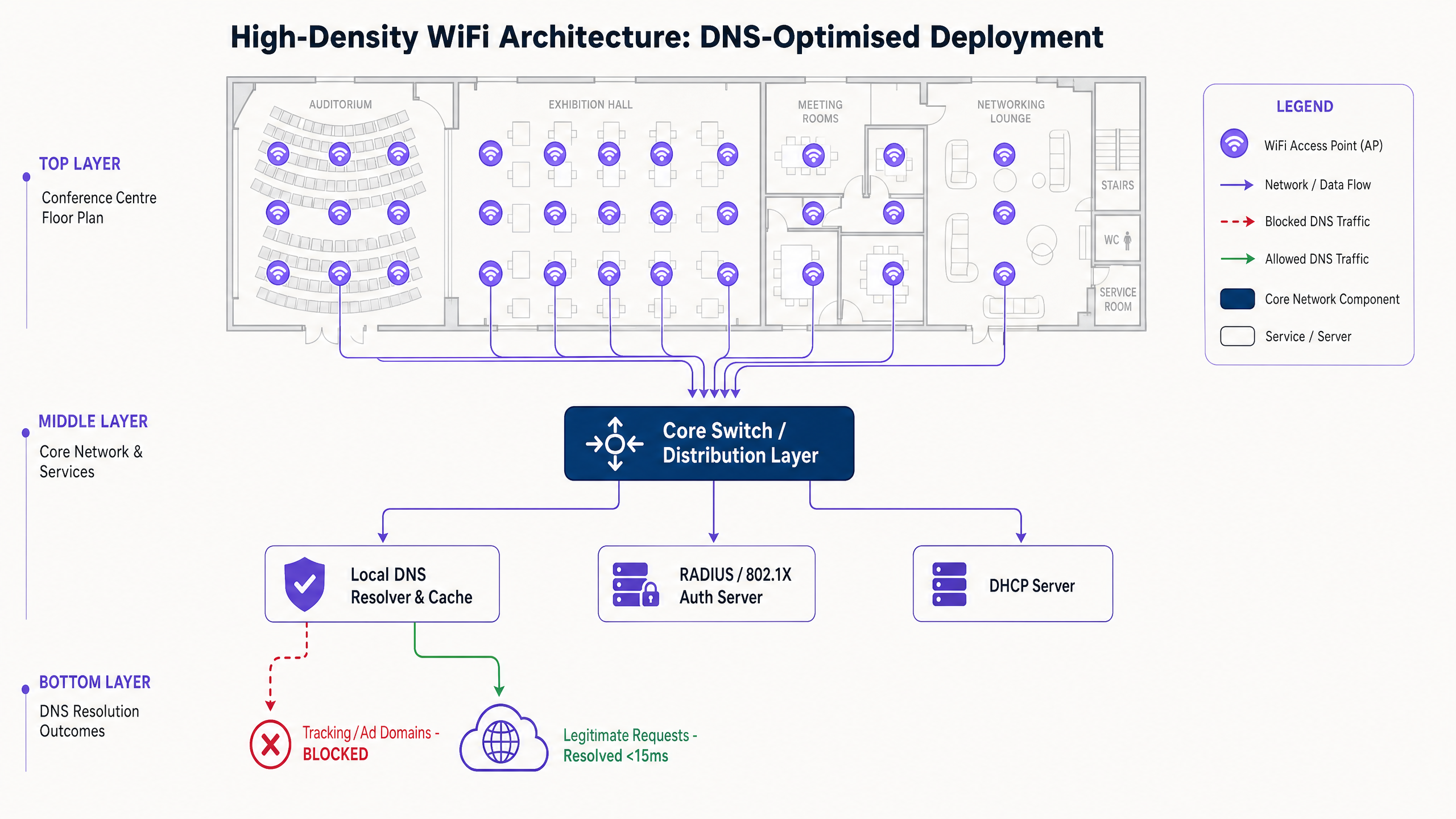

Architecture for Edge Resolution

To mitigate this, the architecture must move resolution to the network edge. A local DNS resolver with an aggressive TTL cache answers legitimate, frequently requested domains from cache in under 5 ms.
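A minimal sketch of such a TTL cache, assuming answers are stored per (name, record type) and expire when their TTL runs out. "Aggressive" caching is modelled here as a minimum TTL floor, similar in spirit to Unbound's `cache-min-ttl` option; the class, its method names and the injectable clock are illustrative, not any resolver's API.

```python
import time
from typing import Callable, Optional


class TTLCache:
    """Minimal DNS-style cache keyed by (name, record type)."""

    def __init__(self, clock: Callable[[], float] = time.monotonic, min_ttl: int = 0):
        self._clock = clock
        self._min_ttl = min_ttl  # floor on TTLs: "aggressive" caching (assumption)
        self._entries: dict[tuple[str, str], tuple[str, float]] = {}

    @staticmethod
    def _key(name: str, rtype: str) -> tuple[str, str]:
        # DNS names are case-insensitive; ignore a trailing root dot.
        return name.lower().rstrip("."), rtype.upper()

    def put(self, name: str, rtype: str, value: str, ttl: int) -> None:
        expires = self._clock() + max(ttl, self._min_ttl)
        self._entries[self._key(name, rtype)] = (value, expires)

    def get(self, name: str, rtype: str) -> Optional[str]:
        key = self._key(name, rtype)
        entry = self._entries.get(key)
        if entry is None:
            return None  # miss: the resolver must go upstream
        value, expires = entry
        if self._clock() >= expires:
            del self._entries[key]  # expired: evict and treat as a miss
            return None
        return value  # hit: answered locally


if __name__ == "__main__":
    now = [0.0]  # fake clock so the example is deterministic
    cache = TTLCache(clock=lambda: now[0], min_ttl=300)
    cache.put("www.example.com", "A", "192.0.2.10", ttl=60)
    now[0] = 200.0
    print(cache.get("www.example.com", "A"))  # still cached because of the 300 s floor
    now[0] = 300.0
    print(cache.get("www.example.com", "A"))  # expired, prints None
```

The TTL floor trades freshness for hit rate: it keeps popular answers local longer, at the cost of serving records that may have changed upstream.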

Crucially, this resolver must integrate a curated blocklist (e.g. Pi-hole Enterprise mode, Cisco Umbrella) so that queries for known tracking domains are answered locally instead of being forwarded. An immediate NXDOMAIN ends the transaction at the edge, and the client never opens the follow-on connection to the tracker, so the transmit opportunities (TXOPs) those exchanges would have consumed on the wireless medium remain available for real payload data.

Implementation Guide

Step 1: Baseline Audit

Before changing the DNS path, establish a baseline. Instrument your existing resolver or deploy a passive tap to capture query logs during a peak-load window. Identify the 50 most frequently queried domains; typically 30-50% of them are tracking or telemetry services.
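Once query logs are captured, the top-50 analysis can be scripted. This sketch assumes a hypothetical whitespace-separated log format (timestamp, client IP, query name, query type) and a hand-maintained set of known tracking suffixes; adapt the parsing to whatever your resolver or tap actually writes.

```python
from collections import Counter
from typing import Iterable


def top_domains(log_lines: Iterable[str], n: int = 50) -> list[tuple[str, int]]:
    """Count query names (third field) and return the n most frequent."""
    counts = Counter()
    for line in log_lines:
        fields = line.split()
        if len(fields) < 3:
            continue  # skip malformed or truncated lines
        counts[fields[2].lower().rstrip(".")] += 1
    return counts.most_common(n)


def tracking_share(top: list[tuple[str, int]], tracking_suffixes: set[str]) -> float:
    """Fraction of the top domains that match a known tracking suffix."""
    if not top:
        return 0.0

    def is_tracking(name: str) -> bool:
        return any(name == s or name.endswith("." + s) for s in tracking_suffixes)

    return sum(1 for name, _ in top if is_tracking(name)) / len(top)


if __name__ == "__main__":
    sample = [  # hypothetical log lines
        "10:00:01 10.0.0.5 pixel.ad-tracker.com A",
        "10:00:01 10.0.0.6 pixel.ad-tracker.com A",
        "10:00:02 10.0.0.5 www.example.com A",
        "10:00:03 10.0.0.7 analytics.thirdparty.net AAAA",
    ]
    top = top_domains(sample)
    print(top)
    print(f"tracking share: {tracking_share(top, {'ad-tracker.com', 'thirdparty.net'}):.0%}")
```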

Step 2: Deploying a Local Resolver

Deploy an on-premises or edge-hosted resolver. Configure authoritative zones for internal resources (split DNS) and apply a conservative blocklist. Avoid aggressive lists at first so that legitimate applications keep working.

Step 3: Managing DNS over HTTPS (DoH)

Modern operating systems increasingly bypass local resolvers by using DoH. To retain control, intercept DoH traffic at the firewall: block outbound TCP/UDP 443 to known DoH providers and steer those clients to your managed DoH resolver instead. For the deeper implications, see our guide DNS Over HTTPS (DoH): Implications for Public WiFi Filtering.
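A sketch of the egress check such a firewall rule implements. The seed list holds well-known public resolver addresses of Cloudflare, Google Public DNS and Quad9, all of which also serve DoH; it is illustrative and far from complete, so production rules should draw on a maintained feed. UDP is included because DoH can also run over HTTP/3 (QUIC).

```python
import ipaddress

# Illustrative seed list only; real deployments need a maintained DoH provider feed.
KNOWN_DOH = [ipaddress.ip_network(n) for n in (
    "1.1.1.1/32", "1.0.0.1/32",            # Cloudflare
    "8.8.8.8/32", "8.8.4.4/32",            # Google Public DNS
    "9.9.9.9/32", "149.112.112.112/32",    # Quad9
    "2606:4700:4700::1111/128",            # Cloudflare IPv6
    "2001:4860:4860::8888/128",            # Google IPv6
    "2620:fe::fe/128",                     # Quad9 IPv6
)]


def egress_verdict(dst_ip: str, dst_port: int, proto: str) -> str:
    """BLOCK outbound HTTPS (TCP or UDP/QUIC) to known DoH endpoints, else ALLOW."""
    addr = ipaddress.ip_address(dst_ip)
    if dst_port == 443 and proto.lower() in ("tcp", "udp"):
        # An IPv4 address is never "in" an IPv6 network (and vice versa): no error.
        if any(addr in net for net in KNOWN_DOH):
            return "BLOCK"
    return "ALLOW"


if __name__ == "__main__":
    print(egress_verdict("1.1.1.1", 443, "tcp"))
    print(egress_verdict("198.51.100.7", 443, "tcp"))
```

Blocking by IP only catches providers you know about; this is why the troubleshooting section recommends watching firewall logs for encrypted DNS that still slips through.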

Best Practices

- Iterative blocklisting: Update blocklists weekly via automated feeds, but maintain a fast whitelisting process for false positives.

- Compliance alignment: Document the DNS filtering in your captive portal's terms of use. Because filtering actively reduces third-party data collection, it supports GDPR data-minimisation goals.

- VLAN segmentation: Test new blocklists on a staging VLAN or a specific subset of APs before rolling them out venue-wide.
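The first practice can be expressed in code: weekly feed entries are merged into one blocklist, while an allowlist that is checked first gives a fast override for false positives, even when the blocked entry is a parent domain. The feed contents and domain names are hypothetical.

```python
from typing import Iterable


def build_policy(feeds: Iterable[Iterable[str]],
                 allowlist: Iterable[str]) -> tuple[set[str], set[str]]:
    """Merge blocklist feeds (one domain per line, '#' comments) and normalise the allowlist."""
    blocked = set()
    for feed in feeds:
        for line in feed:
            entry = line.strip().lower()
            if entry and not entry.startswith("#"):
                blocked.add(entry.rstrip("."))
    allowed = {a.strip().lower().rstrip(".") for a in allowlist}
    return blocked, allowed


def _suffixes(name: str) -> list[str]:
    labels = name.lower().rstrip(".").split(".")
    return [".".join(labels[i:]) for i in range(len(labels))]


def is_blocked(name: str, blocked: set[str], allowed: set[str]) -> bool:
    suffixes = _suffixes(name)
    if any(s in allowed for s in suffixes):
        return False  # allowlist always wins: the fast path for false positives
    return any(s in blocked for s in suffixes)


if __name__ == "__main__":
    feeds = [["# weekly feed A", "ad-tracker.com", "analytics.thirdparty.net"],
             ["ad-tracker.com", "beacon.example-telemetry.io"]]  # hypothetical feeds
    blocked, allowed = build_policy(feeds, ["analytics.thirdparty.net"])
    print(sorted(blocked))
    print(is_blocked("analytics.thirdparty.net", blocked, allowed))  # whitelisted
```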

Troubleshooting & Risk Mitigation

- Application failures: The most common failure mode is a legitimate app breaking because one of its dependencies was blocked. Monitor NXDOMAIN rates for spikes; a sudden increase usually indicates a false positive.

- DoH bypass: If latency stays high despite local filtering, check the firewall logs for encrypted DNS that is evading your interception rules.

- Cache poisoning: Make sure your local resolver is hardened against cache-poisoning attacks, particularly in public transport or healthcare deployments.
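Monitoring for NXDOMAIN spikes can start as a simple ratio test: compare each interval's NXDOMAIN rate with the mean of the preceding intervals and flag large jumps. A working blocklist produces a steady NXDOMAIN rate, so it is the change that matters. The 12-interval window, the 2x factor and the minimum query count are hypothetical starting values to tune against your baseline.

```python
from dataclasses import dataclass


@dataclass
class Interval:
    total: int     # all queries answered in the interval
    nxdomain: int  # of which were answered with NXDOMAIN

    @property
    def rate(self) -> float:
        return self.nxdomain / self.total if self.total else 0.0


def spike_alerts(intervals: list[Interval], window: int = 12,
                 factor: float = 2.0, min_queries: int = 100) -> list[int]:
    """Indices of intervals whose NXDOMAIN rate exceeds factor x the trailing mean."""
    alerts = []
    for i in range(window, len(intervals)):
        baseline = sum(iv.rate for iv in intervals[i - window:i]) / window
        current = intervals[i]
        # Ignore near-empty intervals, where one failed app skews the ratio.
        if current.total >= min_queries and current.rate > factor * baseline:
            alerts.append(i)
    return alerts


if __name__ == "__main__":
    history = [Interval(1000, 300) for _ in range(12)]  # steady 30 % NXDOMAIN
    print(spike_alerts(history + [Interval(1000, 320), Interval(1000, 700)]))
```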

ROI & Business Impact

Reducing latency through DNS optimisation has a direct effect on the bottom line. For a hotel, faster captive portal load times and responsive browsing correlate directly with higher TripAdvisor ratings. For a retail environment, it supports seamless integration with tools such as the initiatives described in Purple Appoints Iain Fox as VP Growth – Public Sector to Drive Digital Inclusion and Smart City Innovation, or with location-based services such as those described in Purple Launches Offline Maps Mode for Seamless, Secure Navigation to WiFi Hotspots.

By treating DNS as a critical infrastructure layer rather than an afterthought, venues can extract maximum performance from their existing investment in RF hardware.

Expert Briefing Podcast

Listen as our senior consultant explains the mechanisms and implementation strategies for DNS optimisation in high-density environments.

Key Definitions

DNS Query Storm

A sudden, massive spike in domain name resolution requests, typically occurring when hundreds of devices connect and load tracking-heavy web pages at the same time.

Common in stadiums and hotels during peak ingress times, causing perceived network failure even when bandwidth is available.

NXDOMAIN

A DNS response code indicating that the requested domain name does not exist.

Used strategically in DNS filtering to instantly terminate requests for known tracking domains, saving latency and airtime.
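To show why an NXDOMAIN is cheap to produce at the edge, the sketch below turns a DNS query in RFC 1035 wire format into an NXDOMAIN reply simply by setting header bits and echoing the question. It is deliberately minimal and assumes one uncompressed question; it drops any additional records (so an EDNS OPT record is not echoed), which a production resolver would handle properly. `build_query` is a hypothetical test helper.

```python
import struct


def build_query(name: str, qtype: int = 1, txid: int = 0x1234) -> bytes:
    """Build a minimal standard query with RD set (test helper, QTYPE 1 = A)."""
    header = struct.pack("!HHHHHH", txid, 0x0100, 1, 0, 0, 0)
    qname = b"".join(bytes([len(label)]) + label.encode("ascii")
                     for label in name.rstrip(".").split(".")) + b"\x00"
    return header + qname + struct.pack("!HH", qtype, 1)  # QCLASS 1 = IN


def nxdomain_response(query: bytes) -> bytes:
    """Turn a single-question query into an NXDOMAIN reply (RCODE 3)."""
    if len(query) < 12:
        raise ValueError("shorter than a DNS header")
    txid, flags, qdcount = struct.unpack("!HHH", query[:6])
    if flags & 0x8000:
        raise ValueError("not a query (QR bit set)")
    if qdcount != 1:
        raise ValueError("expected exactly one question")
    pos = 12
    while True:  # walk the QNAME labels up to the terminating zero byte
        if pos >= len(query):
            raise ValueError("truncated QNAME")
        length = query[pos]
        if length & 0xC0:
            raise ValueError("compressed QNAME not supported")
        pos += 1 + length
        if length == 0:
            break
    end = pos + 4  # QTYPE + QCLASS
    if end > len(query):
        raise ValueError("truncated question")
    opcode = flags & 0x7800
    rd = flags & 0x0100
    # QR=1, echo OPCODE and RD, RA=1, RCODE=3 (NXDOMAIN)
    reply_flags = 0x8000 | opcode | rd | 0x0080 | 3
    header = struct.pack("!HHHHHH", txid, reply_flags, 1, 0, 0, 0)
    return header + query[12:end]


if __name__ == "__main__":
    reply = nxdomain_response(build_query("pixel.ad-tracker.com"))
    print(reply.hex())
```

No upstream lookup and no state are involved: the reply reuses the query's ID and question, which is what lets a filtering resolver answer tracking lookups almost instantly.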

DNS over HTTPS (DoH)

A protocol for performing remote Domain Name System resolution via the HTTPS protocol, encrypting the data between the DoH client and the DoH-based DNS resolver.

While good for consumer privacy, DoH can bypass corporate network controls and filtering, requiring specific firewall interception strategies.

TTL Cache (Time to Live)

A mechanism where a local DNS resolver stores the answer for a recently resolved domain (such as its IP address) for the duration of the record's TTL, serving subsequent requests instantly without querying the authoritative server.

Crucial for reducing latency for legitimate, highly trafficked domains (e.g., google.com, netflix.com) in a venue.

Airtime Overhead

The proportion of wireless transmission capacity consumed by management frames, control frames, and transactional protocols (like DNS) rather than actual user payload data.

Reducing unnecessary DNS queries directly reduces airtime overhead, improving the efficiency of the entire AP cluster.

Split DNS

An implementation where different DNS responses are provided depending on the source IP address of the request, often used to resolve internal hostnames differently from external ones.

Necessary when a venue hosts local services (like a captive portal or local media server) that should not be resolved via the public internet.

BSS Colouring

A spatial reuse technique in 802.11ax (WiFi 6) that assigns a 'colour' (a number) to each Basic Service Set, allowing APs on the same channel to differentiate between their own traffic and overlapping network traffic.

A key RF optimisation feature that works best when the network isn't bogged down by unnecessary transactional overhead like excessive DNS lookups.

Passive DNS Tap

A method of monitoring DNS traffic by copying packets from a switch port (SPAN port) without interfering with the actual flow of traffic.

Used during the initial audit phase to understand query volume and identify the top tracking domains before implementing filtering.

Worked Examples

A 500-room resort hotel experiences severe 'slow WiFi' complaints during the 4:00 PM to 6:00 PM check-in window, despite having upgraded to WiFi 6 access points last year. Backhaul utilisation is only at 40%.

1. Deploy a local caching DNS resolver (e.g., Unbound) on the guest VLAN.
2. Implement a conservative tracking-domain blocklist.
3. Configure the DHCP server to assign the local resolver's IP to all guest clients.
4. Implement firewall rules blocking outbound port 53 from guest clients (while still allowing the resolver's own upstream queries) to force all DNS traffic through the local resolver.
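Step 4's firewall logic can be sketched as a rule function. The guest subnet and resolver address are hypothetical. The resolver itself is exempted so it can still reach its upstream servers; an alternative some deployments use is to redirect (DNAT) guest port-53 traffic to the resolver instead of dropping it.

```python
import ipaddress

GUEST_NET = ipaddress.ip_network("10.20.0.0/16")      # hypothetical guest VLAN
LOCAL_RESOLVER = ipaddress.ip_address("10.20.0.53")   # hypothetical resolver IP


def dns_rule(src: str, dst: str, dst_port: int) -> str:
    """ALLOW/BLOCK plain DNS (port 53); PASS anything this rule does not cover."""
    if dst_port != 53:
        return "PASS"  # not DNS; other firewall rules decide
    s = ipaddress.ip_address(src)
    d = ipaddress.ip_address(dst)
    if s == LOCAL_RESOLVER:
        return "ALLOW"  # the resolver's own upstream queries
    if s in GUEST_NET and d == LOCAL_RESOLVER:
        return "ALLOW"  # clients using the DHCP-assigned resolver
    if s in GUEST_NET:
        return "BLOCK"  # hard-coded external resolvers such as 8.8.8.8
    return "PASS"


if __name__ == "__main__":
    print(dns_rule("10.20.5.9", "8.8.8.8", 53))
```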

A large conference centre needs to implement DNS filtering to improve latency but is concerned about modern smartphones bypassing the local resolver using DNS over HTTPS (DoH).

1. Identify the IP ranges of major public DoH providers (Cloudflare, Google, Quad9).
2. Create firewall rules blocking outbound TCP/UDP port 443 to these specific IP ranges.
3. Deploy a local DoH-capable resolver.
4. Use network policy to direct clients to the managed resolver (e.g., DHCP Option 6, which advertises the resolver's IP address).

Practice Questions

Q1. You are managing a stadium WiFi network. During halftime, users report slow loading times. Dashboard metrics show AP CPU utilisation is low, and backhaul bandwidth is at 30% capacity. What is the most likely cause, and what is the immediate mitigation?

Hint: Consider the transactional volume that occurs when 15,000 people open their phones simultaneously.

Model answer:

The most likely cause is a DNS query storm overwhelming the local resolver or upstream ISP resolver. The immediate mitigation is to verify the local resolver's cache hit rate and ensure that a blocklist for high-volume tracking domains is active, instantly returning NXDOMAIN to reduce the query load.

Q2. A retail chain implements local DNS filtering to block tracking domains. A week later, the marketing team complains that their new in-store analytics app is failing to load on the guest WiFi. How do you resolve this while maintaining latency benefits?

Hint: Filtering is not a set-and-forget configuration.

Model answer:

Review the DNS query logs for the specific devices or timeframes when the app failed. Identify the blocked domain that the app depends on (a false positive). Add this specific domain to the resolver's whitelist, ensuring the app functions while the rest of the tracking domains remain blocked.

Q3. You deploy a local DNS resolver with aggressive caching and filtering in a public sector building. However, packet captures show a significant volume of DNS traffic still leaving the network on port 443. What is happening, and how do you enforce local policy?

Hint: Modern browsers use encrypted protocols to bypass standard port 53 DNS.

Model answer:

Devices are using DNS over HTTPS (DoH) to bypass the local resolver. To enforce policy, you must configure the firewall to block outbound TCP/UDP port 443 traffic destined for known public DoH provider IP ranges (e.g., Cloudflare, Google), forcing devices to fall back to the DHCP-provided local resolver.