Railway WiFi Network: How Operators Are Delivering Connectivity at Speed

This technical reference guide provides actionable insights for IT leaders, network architects, and transport operations directors on architecting and deploying reliable railway WiFi networks. It covers the full stack from lineside infrastructure and multi-bearer aggregation to bandwidth management, captive portals, and passenger analytics. The guide demonstrates how operators can move beyond treating onboard WiFi as a cost centre and instead leverage it as a strategic asset that generates first-party data, operational intelligence, and measurable ROI.

- Executive Summary
- Technical Deep-Dive
  - The Multi-Bearer Backhaul Architecture
  - Lineside Infrastructure (Track-to-Train)
  - Onboard Distribution and Hardware Standards
- Implementation Guide
  - Step 1: RF Survey and Backhaul Assessment
  - Step 2: Hardware Procurement and Installation
  - Step 3: Captive Portal and Bandwidth Management Configuration
  - Step 4: NOC Integration and Monitoring
- Best Practices
- Troubleshooting & Risk Mitigation
  - The Station Surge Effect
  - Inter-Carriage Cabling Failures
  - Backhaul Saturation During Tunnel Egress
- ROI & Business Impact

Executive Summary

Delivering reliable WiFi on moving trains is one of the most complex challenges in enterprise networking. For IT managers, network architects, and transport operations directors, passenger connectivity is no longer a luxury: it is a baseline expectation that directly affects customer satisfaction and brand perception.

This guide outlines the technical architecture required to maintain high-speed connectivity at 125 mph while contending with constant cell tower handoffs, the Faraday cage effect of metal carriage bodies, and fluctuating user densities. We explore the transition from simple cellular routers to multi-bearer aggregation gateways and dedicated lineside infrastructure. Crucially, we examine how operators can use captive portals and analytics platforms, such as Guest WiFi and WiFi Analytics, to manage bandwidth, capture GDPR-compliant consent, and extract actionable first-party data. By treating the onboard network as a strategic asset rather than merely a cost centre, transport operators can drive significant ROI while meeting the modern passenger's digital demands.

Technical Deep-Dive

Architecting a railway WiFi network requires a fundamental shift from static enterprise LAN design. The network must bridge the gap between a rapidly moving local environment and the core internet backhaul, all while maintaining session continuity for hundreds of concurrent users.

The Multi-Bearer Backhaul Architecture

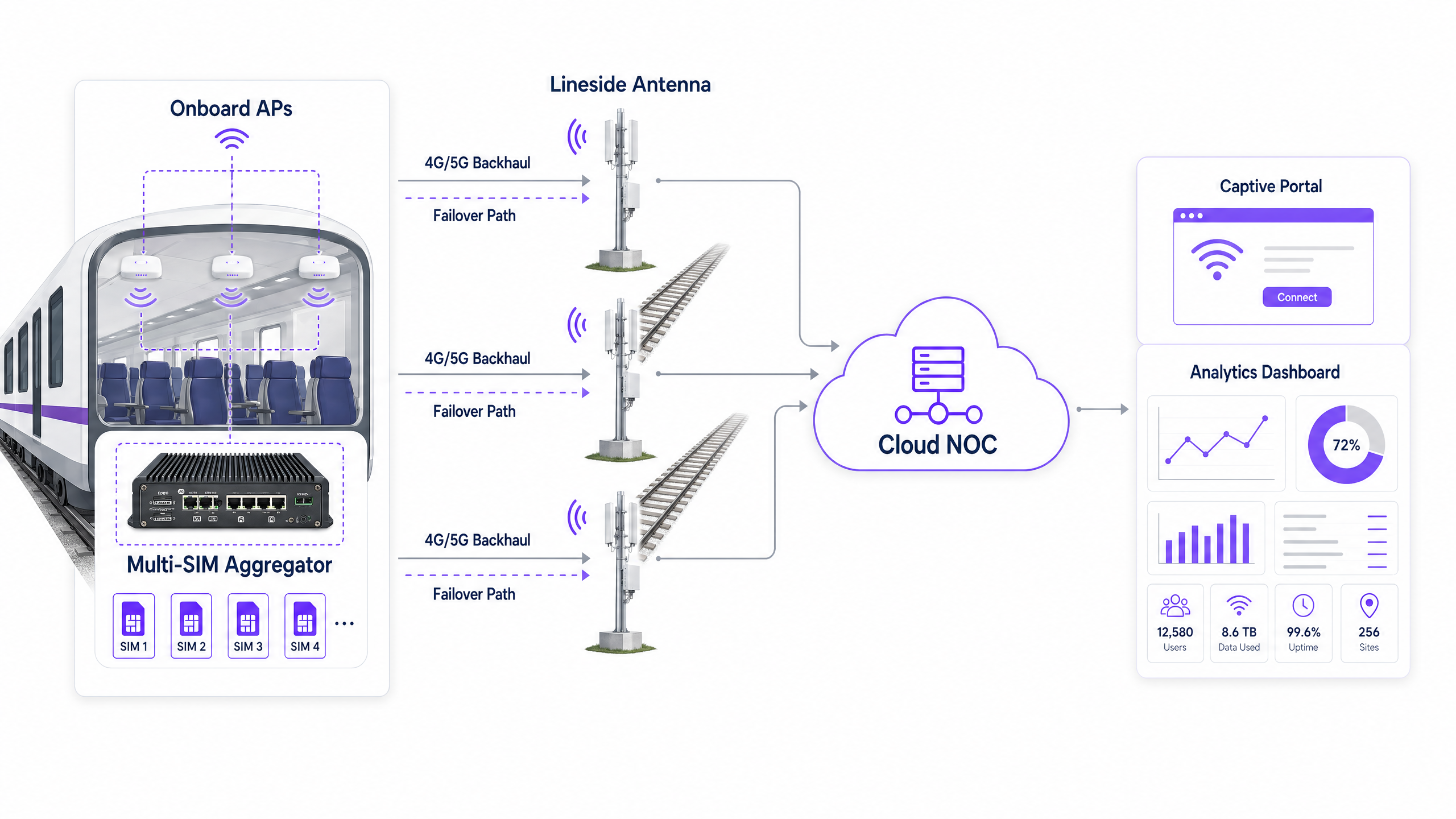

Relying on a single cellular provider is insufficient for a moving train. Modern deployments utilise a multi-SIM aggregation gateway (or multi-bearer router) installed on the train. This device bonds connections from multiple Mobile Network Operators (MNOs) across 4G and 5G networks simultaneously.

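As the train traverses different coverage zones, the aggregator dynamically routes traffic across the available links based on real-time latency, packet loss, and signal strength metrics. If one carrier drops signal in a tunnel or rural cutting, the others carry the traffic, so failover is typically imperceptible to the passenger. Seamless session continuity depends on the bonded links terminating at a ground-side aggregation server: passenger traffic then leaves for the internet from a single, stable public IP address regardless of which bearers are active, so existing connections are not reset when one carrier drops out. This is the single most important architectural decision in any railway WiFi deployment.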
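
To make the routing decision concrete, here is a minimal Python sketch that scores each bearer on the three metrics named above and splits traffic in proportion to the scores. It is an illustration under stated assumptions, not how any particular vendor's aggregator works: the `Bearer` fields, the use of RSRP as the signal metric, and every weight and cut-off (500 ms, 20 % loss, -120 dBm) are hypothetical placeholders.

```python
from dataclasses import dataclass


@dataclass
class Bearer:
    """One cellular link on the aggregation gateway (fields are illustrative)."""
    name: str
    latency_ms: float   # recent round-trip time
    loss_pct: float     # recent packet loss, 0-100
    rsrp_dbm: float     # reference signal received power reported by the modem
    up: bool = True


def score(b: Bearer) -> float:
    """Hypothetical link score in [0, 1]; 0 means carry no traffic."""
    if not b.up or b.loss_pct >= 20 or b.rsrp_dbm <= -120:
        return 0.0
    latency_term = max(0.0, 1 - b.latency_ms / 500)   # 500 ms or worse scores 0
    loss_term = 1 - b.loss_pct / 20                   # 20 % loss or worse scores 0
    signal_term = min(1.0, (b.rsrp_dbm + 120) / 40)   # -120 dBm -> 0, -80 dBm or better -> 1
    return latency_term * loss_term * signal_term


def traffic_shares(bearers: list[Bearer]) -> dict[str, float]:
    """Split traffic across bearers in proportion to their scores."""
    scores = {b.name: score(b) for b in bearers}
    total = sum(scores.values())
    if total == 0:
        # Every bearer is unusable (e.g. deep in a tunnel): nothing to route over.
        return {name: 0.0 for name in scores}
    return {name: s / total for name, s in scores.items()}


links = [
    Bearer("MNO-A", latency_ms=60, loss_pct=0.5, rsrp_dbm=-90),
    Bearer("MNO-B", latency_ms=120, loss_pct=2, rsrp_dbm=-105),
    Bearer("MNO-C", latency_ms=0, loss_pct=100, rsrp_dbm=-130, up=False),  # lost in a cutting
]
print(traffic_shares(links))
```

Multiplying the normalised terms, rather than adding them, means a link that is poor on any single metric is heavily penalised, which suits a train where a bearer with good signal but rising loss is often about to drop.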

Lineside Infrastructure (Track-to-Train)

For high-density commuter routes where public cellular networks become congested during peak hours, operators are investing in dedicated lineside infrastructure. This involves deploying trackside antennas, typically at intervals of 500 metres to 2 kilometres depending on the technology, that transmit a dedicated signal using mmWave bands or spectrum reserved for a private 5G network directly to receivers mounted on the exterior of the train carriages.

This approach bypasses public cellular congestion entirely, offering guaranteed throughput. The trade-off is significant capital expenditure in the trackside build, but for high-density or high-revenue routes the business case can be compelling. A key consideration is the Doppler shift effect: the frequency perceived by the train's receiver differs from the transmitted frequency by an amount proportional to both train speed and carrier frequency, and the shift reverses sign as the train passes each mast. At speeds above 100 mph, and especially at mmWave frequencies, the shift is large enough to degrade the link, so the radio equipment must be designed specifically for high-speed mobility scenarios.
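
The size of the shift follows from f_d = (v / c) * f_c * cos(theta), where v is the train speed, c the speed of light, f_c the carrier frequency, and theta the angle between the direction of travel and the line to the mast. The Python sketch below evaluates it at 125 mph for two example carriers; 3.5 GHz and 26 GHz are illustrative choices, not frequencies taken from a specific deployment.

```python
import math

C = 299_792_458.0                  # speed of light, m/s
MPH_TO_MS = 1609.344 / 3600        # 1 mph in metres per second


def doppler_shift_hz(speed_mph: float, carrier_hz: float, angle_deg: float = 0.0) -> float:
    """Doppler shift f_d = (v / c) * f_c * cos(theta).

    angle_deg is the angle between the direction of travel and the line to the
    trackside antenna: 0 (heading straight at it) gives the maximum shift.
    """
    v = speed_mph * MPH_TO_MS
    return v / C * carrier_hz * math.cos(math.radians(angle_deg))


# Illustrative carriers only: a sub-6 GHz 5G band and a 26 GHz mmWave band.
for label, fc in [("3.5 GHz", 3.5e9), ("26 GHz", 26e9)]:
    fd = doppler_shift_hz(125, fc)
    # Approaching a mast the shift is about +fd; receding, about -fd.
    print(f"{label}: max shift {fd:,.0f} Hz, swing {2 * fd:,.0f} Hz passing a mast")
```

At 125 mph this works out to roughly 650 Hz at 3.5 GHz and roughly 4.8 kHz at 26 GHz. Because the sign flips as the train passes each mast, the receiver has to track a swing of twice that amount, which is what high-speed track-to-train radios are built to handle.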

Onboard Distribution and Hardware Standards

Once the backhaul is secured, the signal is distributed via an onboard Ethernet backbone to Wireless Access Points (APs) in each carriage. Hardware deployed on trains must adhere to strict environmental standards, specifically EN 50155. This standard dictates the requirements for electronic equipment used on rolling stock, ensuring resilience against extreme temperature variations (typically -25°C to +70°C), humidity, shock, and vibration.

APs typically require M12 industrial connectors rather than standard RJ45 ports to prevent disconnections due to vibration. Wi-Fi 6 (802.11ax) is now the recommended standard for new deployments, offering improved performance in high-density environments through technologies like OFDMA and BSS Colouring.

The onboard LAN topology is equally important. A daisy-chain approach creates single points of failure at every inter-carriage connection. The recommended architecture is a redundant ring topology, where a break in any single cable segment is automatically bypassed by routing traffic in the opposite direction around the ring.
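
The difference between the two topologies can be shown with a simple reachability check. This Python sketch models an 8-carriage train with the gateway in carriage 1 (index 0, an assumption), breaks the cable between carriages 3 and 4, and counts which carriage switches the gateway can still reach. It models topology only; it does not simulate RSTP or ring-protocol behaviour, such as blocking one ring port during normal operation to prevent loops.

```python
from collections import deque


def reachable(n: int, links: set[frozenset[int]], start: int = 0) -> set[int]:
    """Breadth-first search: which carriage switches can `start` reach over intact cables?"""
    seen, queue = {start}, deque([start])
    while queue:
        node = queue.popleft()
        for other in range(n):
            if other not in seen and frozenset((node, other)) in links:
                seen.add(other)
                queue.append(other)
    return seen


def daisy_chain(n: int) -> set[frozenset[int]]:
    """Cable i joins carriage i to carriage i + 1 (0-indexed)."""
    return {frozenset((i, i + 1)) for i in range(n - 1)}


def ring(n: int) -> set[frozenset[int]]:
    """The same chain plus a return path from the last carriage back to the first."""
    return daisy_chain(n) | {frozenset((n - 1, 0))}


N = 8
broken = frozenset((2, 3))   # cable between carriages 3 and 4 (indices 2 and 3)
chain_ok = reachable(N, daisy_chain(N) - {broken})
ring_ok = reachable(N, ring(N) - {broken})
print(f"daisy chain: {len(chain_ok)}/{N} carriages reachable from the gateway")
print(f"ring:        {len(ring_ok)}/{N} carriages reachable from the gateway")
```

With the daisy chain, only carriages 1 to 3 remain reachable; with the ring, all eight do, because the return path lets traffic travel the other way around.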

Implementation Guide

Deploying a railway WiFi service requires careful planning and phased execution. The following steps provide a practical framework for IT teams.

Step 1: RF Survey and Backhaul Assessment

Before hardware selection, conduct a comprehensive RF survey of the entire train route. Map the signal strength and data throughput of all major MNOs along the track at representative times of day. Identify not-spots — tunnels, deep cuttings, rural stretches — where cellular coverage drops completely. This data directly informs the SIM carrier configuration for the aggregation gateways and highlights where lineside infrastructure investment may be justified.
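
Once survey data is collected, not-spots can be extracted mechanically. The Python sketch below assumes a hypothetical survey format: each sample records a route position in kilometres and the measured downlink throughput for each MNO. It returns the stretches where every MNO falls below a minimum usable rate; the 1 Mbps threshold and the sample values are placeholders.

```python
from typing import NamedTuple


class Sample(NamedTuple):
    route_km: float                     # position along the route
    throughput_mbps: dict[str, float]   # measured downlink per MNO at that point


def not_spots(samples: list[Sample], min_mbps: float = 1.0) -> list[tuple[float, float]]:
    """Return (start_km, end_km) stretches where every MNO is below min_mbps.

    A sample with no measurements at all also counts as a not-spot.
    """
    segments: list[tuple[float, float]] = []
    start = prev_km = None
    for s in sorted(samples, key=lambda x: x.route_km):
        dead = all(v < min_mbps for v in s.throughput_mbps.values())
        if dead and start is None:
            start = s.route_km                  # a dead stretch begins here
        elif not dead and start is not None:
            segments.append((start, prev_km))   # it ended at the previous sample
            start = None
        prev_km = s.route_km
    if start is not None:                       # route ended inside a dead stretch
        segments.append((start, prev_km))
    return segments


# Hypothetical survey readings for two MNOs.
survey = [
    Sample(10.0, {"MNO-A": 25.0, "MNO-B": 8.0}),
    Sample(10.5, {"MNO-A": 0.4, "MNO-B": 0.0}),
    Sample(11.0, {"MNO-A": 0.0, "MNO-B": 0.2}),
    Sample(11.5, {"MNO-A": 0.0, "MNO-B": 3.5}),
]
print(not_spots(survey))
```

Running a similar check per MNO, rather than across all of them, shows which carriers cover each other's gaps, which is what drives the SIM mix on the gateway.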

Step 2: Hardware Procurement and Installation

Select EN 50155-compliant hardware from vendors with proven railway deployments. Install the multi-SIM aggregator in a secure, ventilated communications cabinet, typically in the lead or trailing carriage. Run resilient cabling — dual redundant Ethernet rings using industrial-grade cable — through the carriages to the APs. Ensure exterior antennas are aerodynamically profiled and sealed to IP67 or higher against weather ingress.

Step 3: Captive Portal and Bandwidth Management Configuration

This is the critical integration point where infrastructure meets the passenger experience. You cannot offer unrestricted bandwidth on a train; the backhaul is a finite, shared resource. Implement a captive portal solution to enforce Fair Usage Policies (FUP).

Rate limiting caps individual user speeds (typically at 5 Mbps download) to ensure equitable access across all connected devices. Traffic shaping, enforced on the aggregation gateway, blocks or throttles high-bandwidth applications such as 4K streaming and large software updates while prioritising web browsing, email, and VoIP. Authentication via the portal captures passenger data (email address or social login); provided consent is collected in a GDPR-compliant way, this data can then feed your analytics platform.
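
Per-user rate limiting is commonly implemented with a token bucket: each user's bucket refills at the capped rate, and a packet is forwarded only if enough tokens are available. The Python sketch below illustrates the algorithm with the 5 Mbps cap mentioned above; the class, the 1 Mbit burst allowance, and the timing model are hypothetical, and production gateways enforce this in the data plane rather than in Python.

```python
class TokenBucket:
    """Per-user rate limiter: refills at rate_bps and holds at most burst_bits."""

    def __init__(self, rate_bps: float, burst_bits: float) -> None:
        self.rate = rate_bps
        self.capacity = burst_bits
        self.tokens = burst_bits   # start full so the first page loads quickly
        self.last = 0.0            # time of the previous call, in seconds

    def allow(self, packet_bits: int, now: float) -> bool:
        """True if the packet may be forwarded now; False means drop or queue it."""
        elapsed = max(0.0, now - self.last)
        self.tokens = min(self.capacity, self.tokens + elapsed * self.rate)
        self.last = now
        if self.tokens >= packet_bits:
            self.tokens -= packet_bits
            return True
        return False


# One user offering 10 Mbps of 1500-byte packets for about one second.
limiter = TokenBucket(rate_bps=5_000_000, burst_bits=1_000_000)
PACKET_BITS = 1500 * 8
offered = 834                      # 10 Mbps / 12,000 bits per packet, for ~1 s
sent = sum(limiter.allow(PACKET_BITS, now=i * 0.0012) for i in range(offered))
print(f"{sent} of {offered} packets forwarded")
```

Offered 10 Mbps for about a second, the bucket forwards roughly 500 of the 834 packets: the initial 1 Mbit burst plus about 5 Mbit of refill. The burst lets a page load feel responsive while sustained throughput is still held to the cap.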

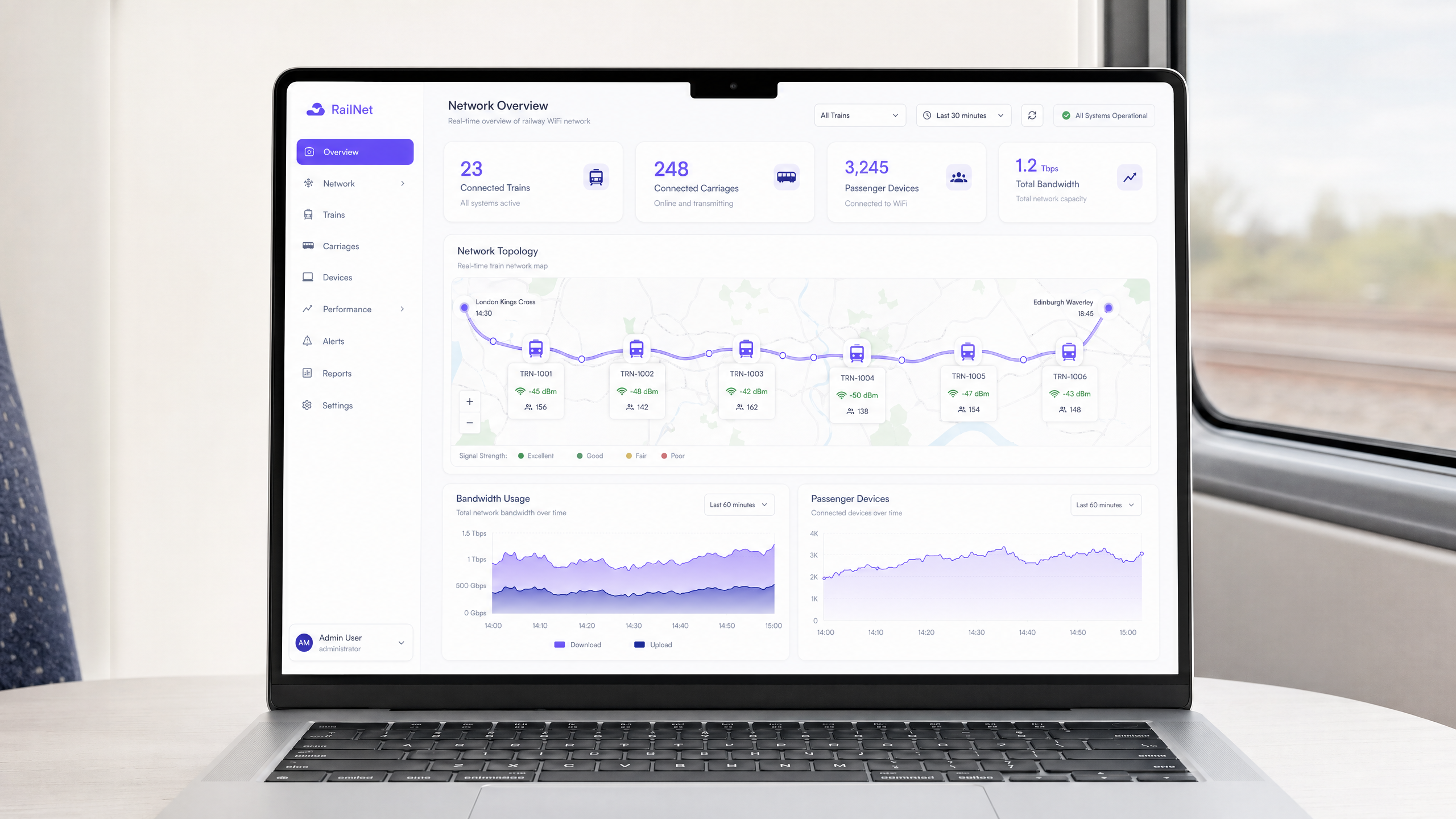

Step 4: NOC Integration and Monitoring

Integrate the onboard network with a cloud-based Network Operations Centre (NOC). Configure real-time alerting for AP health, backhaul latency thresholds, and SIM failover events. Overlay GPS train position data with network performance metrics to create a route-level signal quality map. This is the foundation for proactive management rather than reactive complaint handling.
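
The route-level signal quality map amounts to binning performance samples by position along the route. This Python sketch assumes the GPS fix has already been projected onto a route distance in kilometres (a step not shown) and computes median backhaul latency per 1 km bin, flagging bins above a hypothetical 250 ms alert threshold.

```python
from collections import defaultdict
from collections.abc import Iterable
from statistics import median


def route_quality_map(samples: Iterable[tuple[float, float]], bin_km: float = 1.0) -> dict[int, float]:
    """Median backhaul latency (ms) per route bin.

    samples: (route_km, latency_ms) pairs, where route_km is the train's GPS
    position already projected onto distance along the route (not shown here).
    """
    bins: dict[int, list[float]] = defaultdict(list)
    for route_km, latency_ms in samples:
        bins[int(route_km // bin_km)].append(latency_ms)
    return {b: median(v) for b, v in sorted(bins.items())}


def alerts(quality: dict[int, float], threshold_ms: float = 250.0) -> list[int]:
    """Bins whose median latency breaches a hypothetical alert threshold."""
    return [b for b, lat in quality.items() if lat > threshold_ms]


# Hypothetical readings: (km along the route, latency in ms).
readings = [(0.2, 45), (0.7, 55), (1.1, 80), (1.4, 400), (1.9, 380), (2.3, 60)]
qmap = route_quality_map(readings)
print(qmap, alerts(qmap))
```

The median is used rather than the mean so that a single spike does not flag an otherwise healthy stretch; in these sample readings only the second kilometre is flagged.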

Best Practices

Implement Client Isolation on all APs. Ensure that passenger devices cannot communicate directly with each other on the local network. This mitigates the risk of peer-to-peer attacks, man-in-the-middle exploits, and malware propagation across the onboard LAN. This is a non-negotiable security baseline for any public network.

Embrace OpenRoaming to reduce portal friction. To improve the passenger experience for repeat travellers, support Passpoint (the Wi-Fi Alliance programme based on Hotspot 2.0 and IEEE 802.11u) and OpenRoaming. This allows compatible devices to authenticate securely and automatically without interacting with a captive portal on every journey. Purple acts as a free identity provider for OpenRoaming services, making this a viable upgrade path for operators already using the platform. For further context on network security fundamentals, see Protect Your Network with Strong DNS and Security.

Proactive monitoring is non-negotiable. Do not rely on passenger complaints to identify outages. Integrate the onboard network with a cloud NOC to monitor uptime, backhaul latency, and AP health in real-time. The goal is to identify and resolve issues before the first passenger notices.

Treat the captive portal as a product, not a utility. The portal is your primary touchpoint with the passenger. Invest in a branded, fast-loading experience that clearly communicates the terms of service and data usage. A poorly designed portal creates friction and reduces authentication rates, directly impacting the quality of your first-party data.

Troubleshooting & Risk Mitigation

The Station Surge Effect

The Risk: When a train pulls into a busy station, hundreds of onboard devices may simultaneously attempt to connect to the station's macro-cellular network or the station's own public WiFi, causing severe interference, backhaul saturation, and a degraded experience for all passengers.

Mitigation: Configure the aggregation gateway to switch its backhaul dynamically from the cellular network to a dedicated high-capacity WiFi or fibre link at the station platform. Use geolocation or GPS triggers to adjust bandwidth policies automatically when the train is stationary at a major hub, temporarily relaxing per-user caps while the station link provides far more backhaul capacity than the cellular bearers.
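
A GPS trigger of this kind can be sketched as a geofence: a distance check against a list of hubs. The Python below uses the haversine great-circle formula; the hub name, coordinates, 300 m radius, 5 mph "stationary" threshold, and policy names are all hypothetical placeholders for whatever your gateway's policy engine expects.

```python
import math
from dataclasses import dataclass


@dataclass(frozen=True)
class Hub:
    name: str
    lat: float
    lon: float
    radius_m: float   # geofence radius around the platforms


def haversine_m(lat1: float, lon1: float, lat2: float, lon2: float) -> float:
    """Great-circle distance in metres between two latitude/longitude points."""
    r = 6_371_000.0   # mean Earth radius in metres
    p1, p2 = math.radians(lat1), math.radians(lat2)
    dp, dl = p2 - p1, math.radians(lon2 - lon1)
    a = math.sin(dp / 2) ** 2 + math.cos(p1) * math.cos(p2) * math.sin(dl / 2) ** 2
    return 2 * r * math.asin(math.sqrt(a))


def select_policy(lat: float, lon: float, speed_mph: float, hubs: list[Hub]) -> str:
    """Choose a (hypothetical) bandwidth policy name from GPS position and speed."""
    at_hub = any(haversine_m(lat, lon, h.lat, h.lon) <= h.radius_m for h in hubs)
    if at_hub and speed_mph < 5:
        return "station-offload"     # platform WiFi/fibre backhaul, relaxed per-user caps
    return "cellular-default"        # bonded cellular backhaul, normal per-user caps


hubs = [Hub("Example Central", 51.5000, -0.1200, radius_m=300)]   # placeholder location
print(select_policy(51.5010, -0.1200, speed_mph=0, hubs=hubs))    # about 111 m from the hub
print(select_policy(51.5200, -0.1200, speed_mph=0, hubs=hubs))    # about 2.2 km away
```

In practice you would likely add hysteresis (for example, a slightly larger exit radius) so the policy does not flap when a train stops near the edge of the geofence.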

Inter-Carriage Cabling Failures

The Risk: The physical connections between carriages are subjected to constant mechanical stress, vibration, and movement during coupling and decoupling operations, leading to cable degradation and network segmentation.

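Mitigation: Implement a redundant ring topology for the onboard LAN using EN 50155-compliant switches running Rapid Spanning Tree Protocol (RSTP) or a dedicated ring protocol. If a cable breaks between any two carriages, traffic automatically routes in the opposite direction around the ring, restoring connectivity for all APs. RSTP typically reconverges within seconds, whereas dedicated ring protocols (such as the ITU-T G.8032 Ethernet Ring Protection standard or vendor-proprietary equivalents) are designed to recover within tens of milliseconds.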

Backhaul Saturation During Tunnel Egress

The Risk: When a train exits a long tunnel, all devices simultaneously attempt to re-synchronise data (emails, app updates, cloud backups), creating a burst of traffic that saturates the backhaul for 30 to 60 seconds.

Mitigation: Implement aggressive traffic shaping policies that specifically throttle background application traffic. Configure the gateway's traffic shaping engine, rather than the captive portal itself, to deprioritise OS update and cloud sync traffic at the application layer, ensuring interactive traffic (web browsing, messaging) is always prioritised.
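
The prioritisation described above can be sketched as a strict-priority scheduler. This Python example assumes the gateway's deep packet inspection (DPI) engine has already labelled each packet with an application category; the category names and the priority mapping are a hypothetical example policy.

```python
from collections import deque
from typing import Optional

# Lower number = served first. Categories are assumed to be assigned upstream by
# the gateway's DPI engine; this mapping is a hypothetical example policy.
PRIORITY = {"voip": 0, "messaging": 0, "web": 1, "email": 1,
            "os_update": 3, "cloud_sync": 3}
DEFAULT_PRIORITY = 2   # anything unclassified


class StrictPriorityScheduler:
    def __init__(self) -> None:
        levels = set(PRIORITY.values()) | {DEFAULT_PRIORITY}
        self.queues: dict[int, deque] = {p: deque() for p in sorted(levels)}

    def enqueue(self, packet: str, category: str) -> None:
        self.queues[PRIORITY.get(category, DEFAULT_PRIORITY)].append(packet)

    def dequeue(self) -> Optional[str]:
        """Next packet from the highest-priority non-empty queue (FIFO within a class)."""
        for level in sorted(self.queues):
            if self.queues[level]:
                return self.queues[level].popleft()
        return None


sched = StrictPriorityScheduler()
sched.enqueue("sync-chunk", "cloud_sync")     # arrives first...
sched.enqueue("update-chunk", "os_update")
sched.enqueue("page-request", "web")
sched.enqueue("chat-message", "messaging")    # ...arrives last but leaves first
order = [sched.dequeue() for _ in range(4)]
print(order)
```

Strict priority can starve background traffic entirely under sustained load, so real deployments often use weighted fair queuing or a small guaranteed share for the lowest class instead.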

ROI & Business Impact

While deploying a railway WiFi network requires significant capital expenditure — typically £50,000 to £200,000 per train depending on the complexity of the backhaul solution — it offers substantial and measurable returns when integrated with a robust analytics platform.

| Value Driver | Mechanism | Measurable Outcome |
|---|---|---|
| First-Party Data Acquisition | Captive portal authentication | Passenger email database for CRM and marketing |
| Operational Intelligence | NOC analytics + GPS overlay | Carrier SLA accountability, coverage gap identification |
| Retail Media Revenue | Captive portal advertising | Direct revenue from sponsored content at login |
| Passenger Satisfaction | Reliable connectivity | Improved NPS scores, increased rail mode share |
| Regulatory Compliance | GDPR-compliant data capture | Reduced legal risk, auditable consent records |

By requiring authentication via a captive portal, operators build a valuable database of passenger demographics and travel habits. Subject to the consent captured at login, this data can be used for targeted marketing campaigns, loyalty programmes, and service personalisation. Analytics dashboards that overlay network performance with train location data allow operators to pinpoint trackside coverage gaps and hold cellular providers accountable to contracted SLAs.

The captive portal itself is prime digital real estate. Operators can display targeted advertisements or sponsored messages within the login flow, generating direct revenue to offset infrastructure costs. This model is already widely used in other sectors, including Retail and Transport hubs, and the same principles apply directly to the railway environment. For operators in the hospitality sector managing station hotels or lounges, see our guide on Hospitality WiFi deployments for parallel implementation patterns.

Key Terms & Definitions

Multi-Bearer Aggregation

The process of combining multiple network connections — typically several 4G or 5G SIM cards from different carriers — into a single, robust data connection using a bonding gateway to improve aggregate bandwidth and provide automatic failover.

Essential for trains, as it prevents network dropouts when passing through areas where a single cellular provider lacks coverage. The gateway dynamically routes packets across all available bearers in real-time.

EN 50155

A European standard (whose international counterpart is IEC 60571) covering electronic equipment used on rolling stock for railway applications, specifying requirements for temperature, humidity, vibration, shock, and power supply fluctuations.

IT teams must ensure all onboard routers, switches, and APs are EN 50155 certified. Standard enterprise hardware will fail in the railway environment due to vibration and temperature extremes.

Captive Portal

A web page that the user of a public-access network is obliged to view and interact with before full internet access is granted. It typically requires authentication and acceptance of terms of service.

Used by operators to authenticate users, enforce fair usage policies, and capture valuable first-party marketing data. It is the primary commercial interface between the operator and the passenger on the WiFi network.

Client Isolation

A security feature on wireless access points that prevents connected devices from communicating directly with each other on the local network, forcing all traffic through the gateway.

Critical for public networks like train WiFi to protect passengers from peer-to-peer hacking attempts, man-in-the-middle attacks, and malware propagation across the onboard LAN.

Lineside Infrastructure

Dedicated telecommunications equipment — including antennas, radio units, and fibre backhaul — installed along the railway track to provide a private, high-capacity backhaul network for the trains.

Deployed when public cellular networks cannot handle the high data demands of dense commuter routes. Requires significant capital investment but offers guaranteed throughput independent of public network congestion.

Passpoint / OpenRoaming

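A pair of related technologies that allow devices to connect automatically and securely to participating WiFi networks without a captive portal login. Passpoint is the Wi-Fi Alliance certification programme based on the Hotspot 2.0 specification, which builds on IEEE 802.11u; OpenRoaming is a roaming federation, operated by the Wireless Broadband Alliance, built on Passpoint. Devices authenticate using 802.1X/EAP rather than a web login.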

Improves the passenger experience for repeat travellers by providing seamless, automatic connectivity. Purple acts as an identity provider for this service, enabling operators to offer it without building their own authentication infrastructure.

Traffic Shaping (QoS)

The practice of regulating network data transfer to control bandwidth allocation, prioritise certain types of traffic, and block or throttle others, ensuring a defined quality of service for all users.

Used on trains to block high-bandwidth applications (like 4K video streaming) and prioritise interactive traffic (web browsing, email, VoIP) to ensure all passengers have a usable connection despite finite backhaul capacity.

Doppler Shift

The change in frequency of a radio wave as perceived by a receiver that is moving relative to the transmitter. At high speeds, this frequency shift can degrade the quality of the radio link.

A fundamental physical challenge in high-speed rail networking. Specialised track-to-train radio equipment is required to compensate for Doppler shift at speeds above 100 mph, making standard enterprise outdoor APs unsuitable for lineside deployment.

Fair Usage Policy (FUP)

A set of rules enforced by the network operator that limits the bandwidth or data consumption of individual users to ensure equitable access for all connected devices.

Implemented via the captive portal and traffic shaping engine on the multi-SIM aggregator. Without an FUP, a small number of heavy users can saturate the entire backhaul, degrading the experience for all passengers.

Case Studies

A regional rail operator with 50 trains is experiencing severe WiFi complaints. Passengers report the network drops out completely during a 15-minute stretch of the journey through a rural valley. The current setup uses a single-SIM 4G router in each carriage. What is the recommended remediation approach?

The operator must upgrade to a multi-bearer architecture. Step 1: Replace the single-SIM routers with a centralised EN 50155-compliant multi-SIM aggregation gateway per train. Step 2: Conduct an RF survey of the valley to determine which MNOs have partial coverage in the affected segment. Step 3: Provision the gateway with SIMs from at least three different MNOs (e.g., EE, O2, Vodafone), configuring the gateway for packet-level bonding and seamless failover. Step 4: Implement a captive portal to enforce a strict 2 Mbps per-user rate limit during the low-coverage valley segment to prevent connection timeouts for basic web browsing. Step 5: Integrate with a cloud NOC to monitor the failover events in real-time and build a coverage map for carrier negotiations.

A major intercity operator is launching a new premium service and wants to offer a differentiated WiFi experience: first-class passengers get 20 Mbps uncapped, while standard-class passengers receive 5 Mbps with streaming blocked. How should this be architected?

This requires a multi-SSID architecture with per-SSID QoS policies. Step 1: Configure two separate SSIDs on the onboard APs — one for first class, one for standard class. Step 2: Assign each SSID to a separate VLAN. Step 3: On the multi-SIM aggregator, configure per-VLAN traffic shaping policies: VLAN 10 (first class) receives priority queuing with no application-layer blocking; VLAN 20 (standard class) receives a 5 Mbps per-user cap with Deep Packet Inspection (DPI) rules blocking known streaming service domains and IP ranges. Step 4: Deploy separate captive portal instances for each SSID, with the first-class portal pre-populated for frequent travellers via OpenRoaming or a loyalty programme token.

Scenario Analysis

Q1. You are designing the onboard LAN for a new fleet of 8-carriage trains. The project manager suggests daisy-chaining the APs via standard Cat6 cable between carriages to reduce cost. What is the primary risk of this approach, and what architecture should you recommend instead?

💡 Hint: Consider the physical environment of a moving train and what happens to network segments downstream of a broken inter-carriage cable.

The primary risk is a cascading single point of failure. If the cable between Carriage 3 and Carriage 4 breaks due to vibration or mechanical stress during coupling, Carriages 4 through 8 lose all network connectivity. I would recommend a redundant ring topology using EN 50155-compliant managed switches with M12 connectors and RSTP or a dedicated ring protocol. In a ring topology, a break in any single cable segment is automatically bypassed by routing traffic in the opposite direction around the ring, maintaining connectivity for all APs; a dedicated ring protocol can typically do this within tens of milliseconds, while RSTP is generally slower.

Q2. Your analytics dashboard shows that total bandwidth on the 08:00 commuter service is maxing out the multi-SIM backhaul, causing widespread complaints about slow speeds. However, only 30% of passengers have authenticated on the captive portal. What is the likely cause and what is the solution?

💡 Hint: Think about what devices do in the background when they detect a known or open WiFi network, even before a user actively browses.

The most likely cause is background device activity: OS updates, cloud backups (iCloud, Google Drive), app refresh cycles, and email sync can start automatically as soon as a device associates with the SSID. If the pre-authentication walled garden is too permissive, this traffic reaches the backhaul whether or not the user has authenticated through the captive portal. The solution is to implement a strict pre-authentication walled garden on the captive portal, allowing access only to the portal itself before login, combined with post-authentication traffic shaping that blocks known update server IP ranges and CDN domains during peak hours. Per-user rate limiting should also be applied immediately post-authentication.
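
A pre-authentication walled garden reduces to an allowlist check on the destination host. In this Python sketch the hostnames are hypothetical placeholders; a real walled garden typically also needs to permit DNS and any identity-provider domains used for social login, and is enforced on the gateway or AP rather than in application code.

```python
# Hosts reachable before login. Placeholder names; substitute your portal's own.
WALLED_GARDEN = {"portal.example-rail.net", "cdn.example-rail.net"}


def host_allowed(host: str, authenticated: bool) -> bool:
    """Pre-auth: walled-garden hosts and their subdomains only.
    Post-auth: everything, subject to the separate shaping and rate-limit policies."""
    if authenticated:
        return True
    host = host.lower().rstrip(".")   # normalise case and any trailing root dot
    return any(host == h or host.endswith("." + h) for h in WALLED_GARDEN)


print(host_allowed("portal.example-rail.net", authenticated=False))   # portal page
print(host_allowed("updates.example.com", authenticated=False))       # background update
```

Matching on the exact name or a dot-prefixed suffix stops a lookalike such as `notportal.example-rail.net` from slipping through a naive "ends with" check.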

Q3. A train operator wants to deploy dedicated lineside track-to-train infrastructure to bypass public cellular networks entirely. Their procurement team has identified a low-cost option using standard enterprise outdoor WiFi access points mounted on poles at 200-metre intervals along the track. The trains travel at 125 mph. Why will this approach fail, and what should they specify instead?

💡 Hint: Consider both the physics of high-speed radio communication and the operational requirements of handoff between access points.

This approach will fail for two fundamental reasons. First, standard enterprise outdoor APs are not designed for the rapid handoffs required when a train is moving at 125 mph: at that speed, the train passes through a 200-metre cell in under 4 seconds, leaving too little time for standard client-driven 802.11 roaming (scanning, reassociation, and re-authentication) to complete cleanly before the next handoff is due. Second, the Doppler shift at those speeds will degrade radio link quality, because standard APs are not designed to compensate for the frequency shift caused by the relative velocity between the train and the fixed antenna. The operator must specify dedicated track-to-train radio equipment from vendors with proven high-speed railway deployments, using technologies specifically designed for mobility scenarios, with directional antennas and proprietary handoff protocols optimised for train speeds.
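
The dwell-time arithmetic behind the first point is easy to check. This Python sketch computes how long a train at 125 mph spends in cells of 200 m (the spacing proposed in the question) and of 500 m and 2 km (the range quoted earlier for purpose-built lineside systems), plus the resulting handoff rate.

```python
MPH_TO_MS = 1609.344 / 3600   # 1 mph in metres per second


def cell_dwell_s(cell_length_m: float, speed_mph: float) -> float:
    """Seconds a train spends inside one trackside cell of the given length."""
    return cell_length_m / (speed_mph * MPH_TO_MS)


for spacing in (200, 500, 2000):
    dwell = cell_dwell_s(spacing, 125)
    print(f"{spacing:>5} m cell at 125 mph: {dwell:.1f} s per cell, "
          f"{3600 / dwell:.0f} handoffs per hour")
```

A 200 m spacing means a handoff roughly every 3.6 seconds, or about 1,000 per hour, each of which must complete without disrupting the backhaul.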

Q4. A passenger rail operator is preparing for a GDPR audit. Their captive portal collects email addresses and uses them for marketing. What are the three most critical compliance requirements they must demonstrate?

💡 Hint: Focus on the lawful basis for processing, the right to withdraw consent, and data retention.

The three most critical requirements are: 1) Lawful basis and explicit consent — the portal must present a clear, unbundled consent checkbox for marketing communications that is not pre-ticked and is separate from the terms of service acceptance required for WiFi access. Passengers must be able to access WiFi without consenting to marketing. 2) Right to withdraw — there must be a clear, accessible mechanism for passengers to withdraw their marketing consent at any time, typically an unsubscribe link in every email and a self-service preference centre. 3) Data retention and minimisation — the operator must have a documented data retention policy specifying how long passenger data is held, and must be able to demonstrate that data is deleted or anonymised after the retention period. All three must be evidenced with audit logs.
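
The three requirements map naturally onto the data the portal stores per passenger. This Python sketch is a hypothetical record structure, not any platform's schema: marketing consent is a separate, optional timestamp from the WiFi terms acceptance, withdrawal is recorded rather than silently deleted, and retention is checked against a placeholder two-year period that your own documented policy would replace.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone
from typing import Optional

RETENTION = timedelta(days=730)   # placeholder; use your documented retention period


@dataclass
class ConsentRecord:
    email: str
    wifi_terms_accepted_at: datetime                  # required for WiFi access
    marketing_opt_in_at: Optional[datetime] = None    # None = box left unticked
    marketing_withdrawn_at: Optional[datetime] = None
    last_activity_at: Optional[datetime] = None

    def may_send_marketing(self) -> bool:
        """Only if the passenger opted in and has not withdrawn since."""
        if self.marketing_opt_in_at is None:
            return False
        withdrawn = self.marketing_withdrawn_at
        return withdrawn is None or withdrawn < self.marketing_opt_in_at

    def retention_expired(self, now: datetime) -> bool:
        """True once the record has been inactive for longer than RETENTION."""
        last = self.last_activity_at or self.wifi_terms_accepted_at
        return now - last > RETENTION


t0 = datetime(2024, 1, 1, tzinfo=timezone.utc)
passenger = ConsentRecord("passenger@example.com", wifi_terms_accepted_at=t0)
print(passenger.may_send_marketing())   # False: WiFi access does not imply marketing consent
```

Keeping each decision as a timestamp, rather than a single boolean flag, is what makes the consent history auditable.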