EU AI Act and Guest WiFi: What Marketers Need to Know

The EU AI Act (Regulation 2024/1689) introduces a risk-based framework that directly affects how venue operators deploy AI-driven WiFi marketing, captive portals, and guest analytics. This guide maps the Act's four risk tiers against real-world Guest WiFi use cases, identifies prohibited practices including emotion inference and social scoring, and provides actionable compliance steps for IT teams and marketing directors operating across hospitality, retail, events, and public-sector environments. Understanding where your deployment sits on the risk spectrum — and implementing the Article 50 transparency obligations for AI chatbots and conversational portals — is no longer optional: prohibited practice enforcement began in February 2025.

- Executive Summary
- Technical Deep-Dive
  - The Four-Tier Risk Framework
  - Prohibited Practices Under Article 5
  - High-Risk Systems Under Annex III
  - Article 50 Transparency Obligations — The Immediate Priority
  - The AI Act and GDPR: A Stacked Compliance Framework
- Implementation Guide
  - Step 1: Build Your AI Inventory
  - Step 2: Classify Each System Against the Risk Tiers
  - Step 3: Implement Article 50 Disclosures
  - Step 4: Review Vendor Sub-Processor Agreements
  - Step 5: Align with GDPR Governance
  - Step 6: Plan for High-Risk System Compliance (August 2026 Deadline)
- Best Practices
- Troubleshooting & Risk Mitigation
- ROI & Business Impact

Executive Summary

The EU AI Act (Regulation 2024/1689) is the world's first comprehensive legal framework for artificial intelligence, and it applies directly to how venue operators deploy AI across Guest WiFi infrastructure. The Act classifies AI systems into four risk tiers — Prohibited, High Risk, Limited Risk, and Minimal Risk — and assigns compliance obligations accordingly. For most hospitality and retail operators, the immediate operational impact falls into two areas: first, ensuring that any AI-driven conversational interface on a captive portal carries a clear Article 50 transparency disclosure; and second, auditing existing marketing stacks to confirm they do not use prohibited practices such as emotion inference, social scoring, or biometric categorisation based on sensitive attributes.

The prohibited practice provisions under Article 5 have applied since 2 February 2025. High-risk system obligations under Annex III apply from August 2026. Fines for prohibited practice violations reach up to €35 million or 7% of global annual turnover, whichever is higher. This guide provides a technical reference for IT managers, network architects, and compliance leads who need to assess their current deployments and implement the required changes this quarter.

Technical Deep-Dive

The Four-Tier Risk Framework

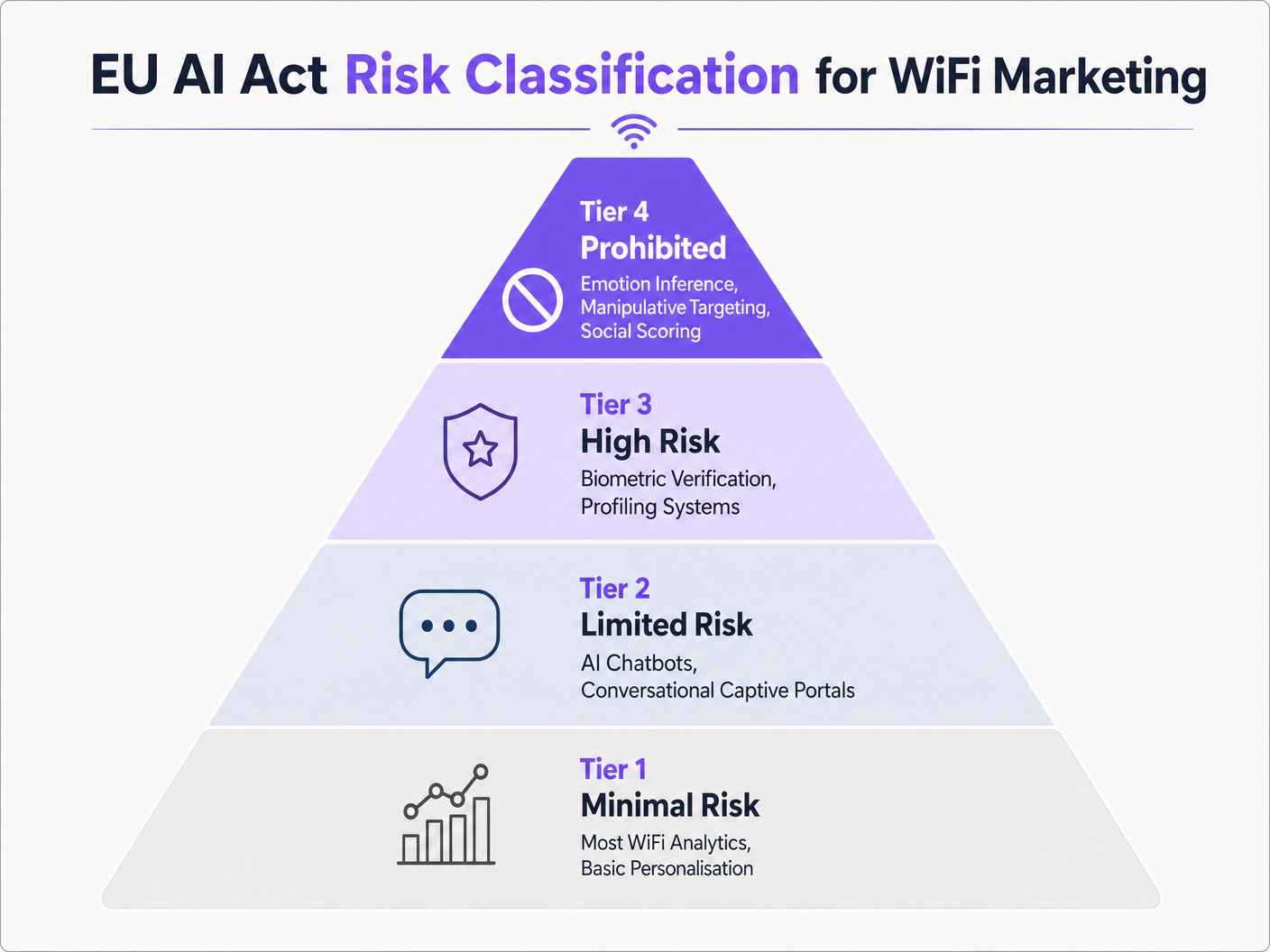

The EU AI Act classifies AI systems by the risk they pose to fundamental rights, safety, and democratic values. The classification determines the compliance obligations that apply to both the provider (the developer or vendor of the AI system) and the deployer (the organisation putting the system into service — typically the venue operator or IT team).

The four tiers, mapped to Guest WiFi and venue marketing contexts, are as follows:

| Risk Tier | AI Act Reference | WiFi Marketing Examples | Compliance Obligation |
|---|---|---|---|
| Prohibited | Article 5 | Emotion inference in workplace or education contexts; manipulative targeting of inferred vulnerabilities; social scoring of guests; biometric categorisation by race/religion | Immediate cessation; no deployment permitted |
| High Risk | Annex III | Remote biometric identification at captive portal (one-to-one verification is excluded); AI profiling for access to essential services | Conformity assessment, technical documentation, risk management system, EU database registration |
| Limited Risk | Article 50 | AI chatbots on captive portals; generative AI splash pages; emotion recognition systems (non-prohibited contexts; may also be high-risk under Annex III) | Transparency disclosure to end users before/during interaction |
| Minimal Risk | No specific obligation | Aggregate footfall analytics; dwell-time heatmaps; rules-based personalisation; bandwidth optimisation AI | No AI Act-specific obligations (GDPR still applies) |

Prohibited Practices Under Article 5

Article 5 of the AI Act defines eight categories of prohibited AI practice. Three are directly relevant to venue WiFi marketing deployments.

Manipulative and Deceptive Techniques. The Act prohibits AI systems that deploy subliminal, manipulative, or deceptive techniques to distort a person's behaviour and impair their ability to make an informed decision, where this causes or is likely to cause significant harm. In a WiFi marketing context, this targets systems that exploit behavioural signals captured at the captive portal (click hesitation, scroll patterns, time-on-page) to infer psychological vulnerabilities and serve manipulative offers. The key threshold is significant harm; regulators will assess this contextually, but the principle is clear: AI-driven nudging that bypasses rational agency and causes such harm is prohibited.

Social Scoring. The Act prohibits AI systems that evaluate or classify individuals based on their social behaviour or personal characteristics, where this leads to detrimental or unfavourable treatment. A WiFi loyalty system that uses an AI model to score guests on behavioural patterns — visit frequency, dwell time, purchase signals — and then restricts access speed or withholds offers from lower-scoring guests would fall within this prohibition. The distinction between permissible personalisation and prohibited social scoring lies in whether the AI classification produces detrimental treatment: serving a premium guest a better offer is personalisation; denying a lower-scoring guest access to services is social scoring.

Biometric Categorisation of Sensitive Attributes. The Act prohibits AI systems that use biometric data to infer sensitive attributes including race, political opinion, trade union membership, religious or philosophical beliefs, sex life, or sexual orientation. This is particularly relevant for venues using camera-based analytics alongside WiFi data. If an AI system cross-references device MAC address data with visual analytics to infer ethnicity and personalise content accordingly, that is a direct Article 5 violation. The prohibition applies regardless of whether the biometric data is processed in real time or in batch.

Emotion Inference — Scope Clarification. The Act prohibits emotion inference in workplaces and educational institutions. This prohibition does not automatically extend to retail venues, hotels, or stadiums in relation to guests. However, if your venue is also a workplace — a corporate campus, a co-working space, a hospital — and you are using emotion inference on employees connected to the guest WiFi, that is prohibited. Venue operators should map their user populations carefully before assuming the emotion inference prohibition does not apply.

High-Risk Systems Under Annex III

Annex III of the Act lists use cases that are classified as high-risk. For Guest WiFi deployments, two categories are directly relevant.

First, biometric systems: remote biometric identification systems and biometric categorisation systems inferring sensitive or protected attributes are high-risk. Simple biometric verification, which only confirms that a person is who they claim to be, is excluded. If your captive portal uses facial recognition to identify returning guests by matching them against a stored gallery (one-to-many identification rather than one-to-one verification), that system requires a full conformity assessment, technical documentation, a risk management system throughout the system's lifecycle, and registration in the EU database for high-risk AI systems.

Second, individual profiling: any AI system listed under Annex III is always considered high-risk if it profiles individuals — defined as automated processing of personal data to assess aspects of a person's life including preferences, interests, behaviour, and location or movement. This is the provision most likely to catch WiFi Analytics platforms that build persistent individual profiles feeding into automated marketing decisions. The key question is whether the AI system makes or substantially influences automated decisions about individual guests based on their profiled characteristics.

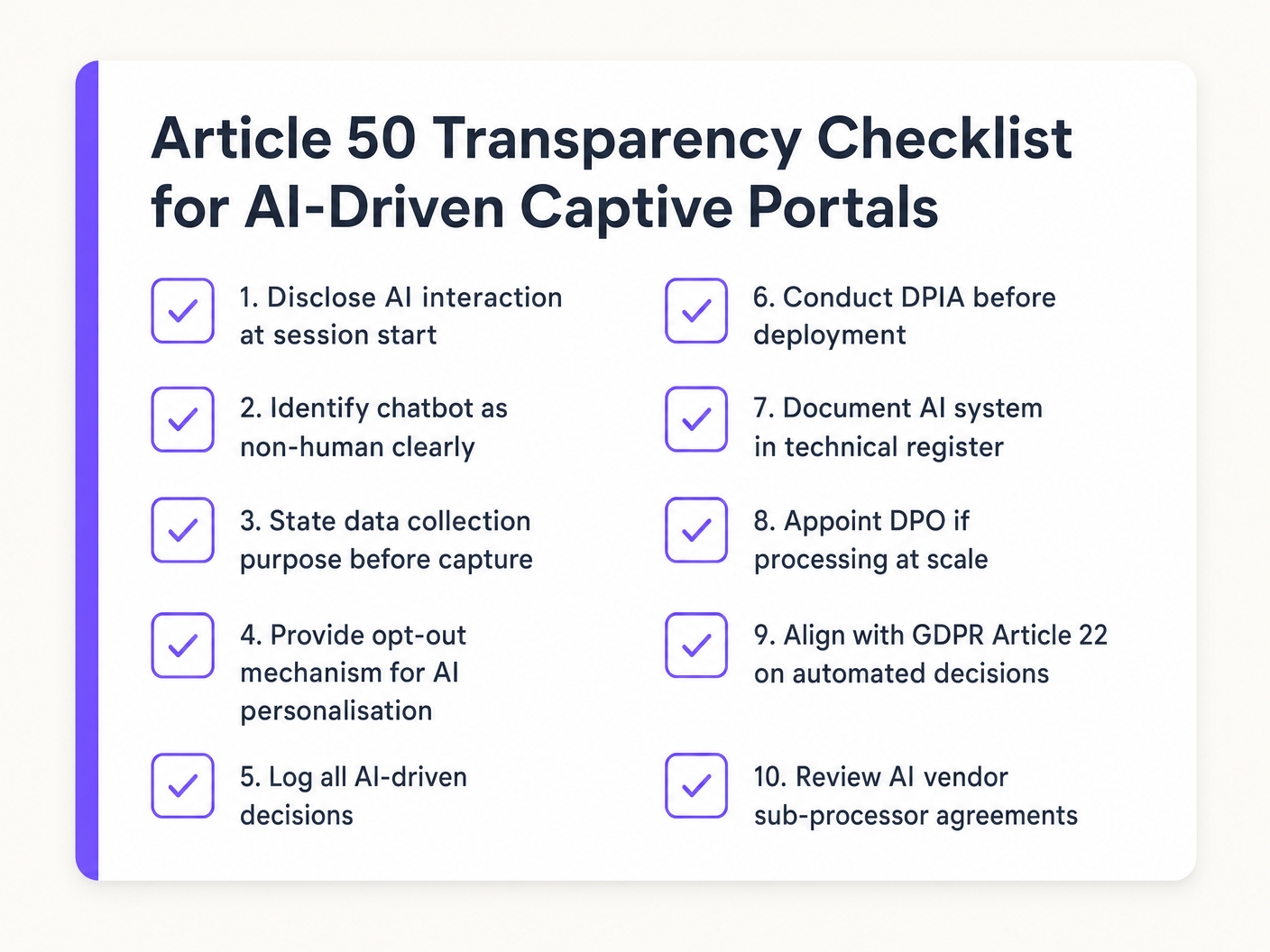

Article 50 Transparency Obligations — The Immediate Priority

For the majority of venue operators today, Article 50 is the most operationally relevant provision. It covers three scenarios:

Conversational AI systems (Article 50(1)): Providers must ensure that AI systems intended to interact with natural persons are designed so that those persons are informed they are interacting with an AI system, unless this is obvious from the context. Deployers must ensure this disclosure is in place. This applies to any AI chatbot deployed on a captive portal — whether for guest services, hotel check-in assistance, venue navigation, or marketing queries.

Emotion recognition and biometric categorisation (Article 50(3)): Deployers of emotion recognition systems or biometric categorisation systems must inform the natural persons exposed to those systems. This is a separate obligation from the chatbot disclosure and applies even where the system is not prohibited.

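Synthetic content (Article 50(2) and 50(4)): Providers of AI systems generating synthetic audio, image, video, or text content must ensure the output is marked as AI-generated in a machine-readable format (Article 50(2)), and deployers must disclose deep fakes and certain AI-generated text (Article 50(4)). If your captive portal uses generative AI to produce personalised welcome messages or promotional copy, confirm that the content carries the provider's marking and label it as AI-generated.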
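As an illustration of labelling generated portal copy, the sketch below wraps a piece of generated text with both a visible label and a machine-readable flag. The function name, the field names, and the label wording are assumptions made for this example; the Act does not prescribe this format, and a real deployment would follow whatever marking scheme the AI provider supplies.

```python
def label_generated_copy(text: str, generator: str) -> dict:
    """Attach a visible label and a machine-readable flag to generated copy.

    The field names here are hypothetical, not a format defined by the AI Act.
    """
    return {
        "display_text": f"{text} (AI-generated)",  # label the guest can see
        "ai_generated": True,                       # flag other systems can read
        "generator": generator,                     # which system produced the text
    }


welcome = label_generated_copy("Welcome back! Enjoy 10% off at the bar.", "portal-llm")
print(welcome["display_text"])
```

Keeping the flag separate from the display text means the label survives even if the front end restyles or truncates the visible copy.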

The AI Act and GDPR: A Stacked Compliance Framework

The AI Act does not replace GDPR; it operates in parallel. For venue operators, this means compliance obligations from both frameworks apply simultaneously to AI-driven WiFi marketing deployments.

Under GDPR, the relevant provisions for AI-driven WiFi marketing include: Article 6 (lawful basis for processing), Article 9 (special category data — relevant if biometric data is processed), Article 13/14 (transparency obligations in privacy notices), Article 22 (restrictions on automated individual decision-making), and Article 35 (Data Protection Impact Assessment for high-risk processing).

The AI Act adds: Article 5 (prohibited practice compliance), Article 50 (transparency disclosures at the point of AI interaction), and, for high-risk systems, Articles 8–17 (risk management, data governance, technical documentation, human oversight, and quality management), together with conformity assessment under Article 43 and EU database registration under Article 49.

Where GDPR requires a DPIA for high-risk data processing, the AI Act requires a risk management system for high-risk AI systems. These can and should be aligned: a single integrated assessment covering both the data processing risks (GDPR) and the AI system risks (AI Act) is more efficient and demonstrates a mature governance posture to regulators.

GDPR Article 22 is particularly relevant for AI-driven captive portals. It restricts solely automated decision-making that produces legal or similarly significant effects on individuals. If your AI system makes automated decisions about WiFi access tiers, promotional eligibility, or service quality without human oversight, you need to assess whether Article 22 applies and whether you must provide guests with the right to request human review.

Implementation Guide

Step 1: Build Your AI Inventory

Before you can assess compliance, you need a complete picture of every AI system in your WiFi marketing stack. This means going beyond your own deployments to include AI components embedded in third-party platforms — marketing automation tools, analytics dashboards, captive portal vendors, and CRM integrations.

For each system, document: the system's function; the data it processes; the provider and any sub-processors; the risk tier under the AI Act; and the applicable compliance obligations. This inventory is the foundation of your AI Act compliance posture and will be required if regulators request evidence of due diligence.
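One possible shape for an inventory entry is sketched below. The field names mirror the items listed above; the class name, the helper function, and the sample vendors are hypothetical and only illustrate how gaps in the inventory could be surfaced.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class AISystemRecord:
    """One row of the AI inventory; fields follow the list in this step."""
    name: str
    function: str                      # what the system does
    data_processed: list[str]          # categories of data it touches
    provider: str                      # vendor or internal team
    sub_processors: list[str] = field(default_factory=list)
    risk_tier: Optional[str] = None    # filled in during Step 2
    obligations: list[str] = field(default_factory=list)


def unclassified(inventory: list[AISystemRecord]) -> list[str]:
    """Return the names of systems that still lack a risk tier."""
    return [record.name for record in inventory if record.risk_tier is None]


# Hypothetical entries for illustration only.
inventory = [
    AISystemRecord(
        name="Portal chatbot",
        function="Answers guest queries on the captive portal",
        data_processed=["chat messages", "session ID"],
        provider="ExampleLLM Ltd",
        risk_tier="limited",
        obligations=["Article 50 disclosure"],
    ),
    AISystemRecord(
        name="Footfall analytics",
        function="Aggregate dwell-time heatmaps",
        data_processed=["hashed MAC addresses"],
        provider="ExampleAnalytics GmbH",
    ),
]

print(unclassified(inventory))  # ['Footfall analytics']
```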

Step 2: Classify Each System Against the Risk Tiers

Apply the four-tier framework to each system in your inventory. The classification questions are:

- Does the system use any practice listed in Article 5? If yes, it is prohibited — cease deployment.

- Is the system used for remote biometric identification, individual profiling for access to services, or any other Annex III use case? If yes, it is high-risk: begin conformity assessment planning.

- Does the system interact with natural persons conversationally, generate synthetic content, or perform emotion recognition? If yes, it is limited-risk — implement Article 50 disclosures.

- None of the above? It is minimal-risk — no AI Act-specific obligations, but GDPR compliance remains mandatory.
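The four questions above can be sketched as an ordered check, where the first matching question decides the tier. This is a simplified triage aid under the assumption that each question has already been answered yes or no by someone qualified to judge it; the type and field names are invented for the example, and the result is not a legal classification.

```python
from dataclasses import dataclass
from enum import Enum


class RiskTier(Enum):
    PROHIBITED = "prohibited"
    HIGH = "high"
    LIMITED = "limited"
    MINIMAL = "minimal"


@dataclass
class ScreeningAnswers:
    """Yes/no answers to the classification questions (hypothetical names)."""
    uses_article5_practice: bool
    matches_annex_iii_use_case: bool
    needs_article50_disclosure: bool


def classify(answers: ScreeningAnswers) -> RiskTier:
    """Apply the questions in order; the strictest matching tier wins."""
    if answers.uses_article5_practice:
        return RiskTier.PROHIBITED
    if answers.matches_annex_iii_use_case:
        return RiskTier.HIGH
    if answers.needs_article50_disclosure:
        return RiskTier.LIMITED
    return RiskTier.MINIMAL


chatbot = ScreeningAnswers(False, False, True)
print(classify(chatbot).value)  # limited
```

Checking the questions in this order means a system that triggers several of them is always recorded under the most demanding tier, although Article 50 disclosures may still apply alongside a high-risk classification.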

Step 3: Implement Article 50 Disclosures

For any AI chatbot or conversational interface on your captive portal, implement a clear disclosure before the interaction begins. The disclosure must be explicit — not implied, not buried in terms and conditions. A simple UI element stating "You are chatting with an AI assistant" at the start of the session satisfies the obligation. This is a front-end change, not a system rebuild, and should be deployable within a single sprint.
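A minimal sketch of the pre-interaction disclosure follows, assuming the portal keeps each chat as an ordered list of messages. The message structure, role names, and function names are hypothetical; the point is only that the disclosure is the first thing the guest sees and that this can be checked automatically.

```python
AI_DISCLOSURE = "You are chatting with an AI assistant."


def start_chat_session(greeting: str) -> list[dict]:
    """Open a transcript with the disclosure placed before any AI output."""
    return [
        {"role": "notice", "text": AI_DISCLOSURE},
        {"role": "assistant", "text": greeting},
    ]


def disclosure_comes_first(transcript: list[dict]) -> bool:
    """Check that a transcript begins with the disclosure notice."""
    return bool(transcript) and transcript[0]["text"] == AI_DISCLOSURE


session = start_chat_session("Hi! How can I help with your stay?")
print(disclosure_comes_first(session))  # True
```

A check like `disclosure_comes_first` could run in portal UI tests so that a later redesign does not silently drop the notice.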

For emotion recognition systems operating in your venue (where not prohibited), add a visible notice in the area of operation informing guests that an emotion recognition system is in use.

Step 4: Review Vendor Sub-Processor Agreements

As the deployer, you share liability for prohibited practices used by your vendors. Review your contracts with WiFi marketing platform providers, analytics vendors, and captive portal suppliers. Request explicit confirmation of their AI Act classification and compliance documentation. Add contractual provisions requiring vendors to notify you of any changes to their AI systems that may affect the risk classification.

Step 5: Align with GDPR Governance

Bring your Data Protection Officer into the AI Act compliance process. Update your Record of Processing Activities to include AI system classifications. Where a DPIA is required under GDPR for high-risk data processing, extend it to cover AI Act risk management requirements. Ensure your privacy notices are updated to reflect AI-driven processing and the Article 50 disclosures.

Step 6: Plan for High-Risk System Compliance (August 2026 Deadline)

If any of your systems are classified as high-risk, begin the conformity assessment process now. The August 2026 deadline for Annex III systems is closer than it appears when you factor in the time required for technical documentation, risk management system implementation, and EU database registration. Engage your vendors early to understand what documentation they can provide and what you need to produce as the deployer.
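To make the timing concrete, the sketch below back-plans from the deadline. It assumes the "August 2026" date is 2 August 2026, that the workstreams run one after another, and that the week estimates are placeholders you would replace with your own; none of the durations come from the Act.

```python
from datetime import date, timedelta

# Assumed exact date behind the "August 2026" deadline in this guide.
ANNEX_III_DEADLINE = date(2026, 8, 2)


def latest_start(work_weeks: dict[str, int],
                 deadline: date = ANNEX_III_DEADLINE) -> date:
    """Latest start date if the workstreams run sequentially."""
    total_weeks = sum(work_weeks.values())
    return deadline - timedelta(weeks=total_weeks)


# Placeholder estimates, in weeks, for illustration only.
plan = {
    "technical documentation": 12,
    "risk management system": 16,
    "EU database registration": 4,
}

print(latest_start(plan))  # 2025-12-21
```

With these placeholder figures the work would need to start 32 weeks before the deadline, which illustrates why vendor engagement cannot wait until 2026.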

Best Practices

Adopt a Privacy-by-Design approach to AI deployment. The AI Act's requirements for high-risk systems — risk management throughout the lifecycle, data governance, technical documentation — are most efficiently met when built into the system architecture from the outset rather than retrofitted. When evaluating new AI-driven marketing tools, include AI Act compliance requirements in your procurement criteria alongside GDPR compliance and security standards such as ISO 27001 and PCI DSS.

Prefer first-party, consent-based data over inferred attributes. The Act's prohibited practices and high-risk classifications are primarily targeted at AI systems that infer sensitive characteristics or make significant automated decisions about individuals. Systems that use explicitly consented, first-party data — email addresses, declared preferences, loyalty programme membership — to drive personalisation are at significantly lower regulatory risk than systems that infer characteristics from behavioural signals.

Maintain a separation between network operations AI and marketing AI. AI systems used for network management — bandwidth allocation, interference mitigation, load balancing — are minimal risk under the Act. AI systems used for guest profiling and marketing personalisation carry higher risk. Keeping these architecturally separate simplifies your risk classification and limits the blast radius of any compliance issue in the marketing stack.

Reference IEEE 802.1X and WPA3 for authentication architecture. Where biometric verification is used at the captive portal, ensure the underlying authentication architecture meets current standards. IEEE 802.1X provides port-based network access control with strong authentication, and WPA3 provides enhanced encryption for the wireless layer. These standards are vendor-neutral and are referenced in both enterprise security frameworks and GDPR guidance on appropriate technical measures.

Document your AI Act compliance decisions. Even for minimal-risk systems, documenting your classification rationale demonstrates due diligence to regulators. The AI Act requires providers of high-risk systems to document their assessment before placing the system on the market; as a deployer, maintaining equivalent documentation for your own risk assessments is best practice.

Troubleshooting & Risk Mitigation

Risk: Vendor AI practices are opaque. Many marketing automation and WiFi analytics platforms embed AI capabilities that are not clearly documented. Mitigation: Issue a formal AI Act compliance questionnaire to all vendors. Request their system classification, technical documentation, and evidence of prohibited practice avoidance. Include AI Act compliance as a contractual requirement in new and renewed agreements.

Risk: Captive portal chatbot lacks Article 50 disclosure. This is the most common compliance gap identified in current deployments. Mitigation: Audit your captive portal UI. If any conversational AI interface lacks a clear pre-interaction disclosure, this is a priority remediation item. The fix is a UI change deployable in days.

Risk: Analytics platform builds individual profiles that trigger high-risk classification. If your WiFi Analytics platform builds persistent individual profiles feeding into automated marketing decisions, you may be operating a high-risk system without the required conformity assessment. Mitigation: Review the platform's data model. If individual profiles are being built and used for automated decisions, engage your vendor on their AI Act classification and initiate a conformity assessment process.

Risk: GDPR and AI Act compliance treated as separate workstreams. Organisations that manage GDPR and AI Act compliance in separate teams risk duplication, gaps, and inconsistent documentation. Mitigation: Establish a unified AI governance framework that addresses both regulatory frameworks. A single integrated DPIA/AI risk assessment process is more efficient and more defensible.

Risk: Misclassification of emotion inference scope. The prohibition on emotion inference applies in workplaces and educational institutions. Venues that are also workplaces — corporate campuses, hospitals, co-working spaces — must apply the prohibition to employee-facing systems, not just guest-facing ones. Mitigation: Map your user populations and apply the prohibition to all contexts where employees may be subject to emotion inference.
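The population mapping can be sketched as a simple lookup. The SSID names and population labels below are invented for the example, and the check deliberately ignores any exceptions the Act allows; it only flags networks where employees or students could be exposed to emotion inference.

```python
# Populations for which this guide treats emotion inference as prohibited.
PROTECTED_POPULATIONS = {"employee", "student"}


def emotion_inference_prohibited(populations: set[str]) -> bool:
    """True if any population reachable by the system is protected."""
    return not PROTECTED_POPULATIONS.isdisjoint(populations)


# Hypothetical map of which populations can connect to each network.
venue_populations = {
    "retail-guest": {"guest"},
    "hospital-guest": {"guest", "employee"},
    "campus-guest": {"guest", "student"},
}

blocked = sorted(
    ssid for ssid, populations in venue_populations.items()
    if emotion_inference_prohibited(populations)
)
print(blocked)  # ['campus-guest', 'hospital-guest']
```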

ROI & Business Impact

Compliance with the EU AI Act is not purely a cost centre. Organisations that build AI governance frameworks ahead of the enforcement curve gain measurable competitive advantages.

Reduced regulatory risk. The fines for prohibited practice violations, up to €35 million or 7% of global annual turnover, represent a material financial risk for any organisation operating at scale across EU member states. A proactive compliance posture substantially reduces this exposure.
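As a worked example of the exposure, the sketch below computes the ceiling for a given turnover. It assumes the "whichever is higher" rule applies to the two figures quoted above, and the function name and sample turnovers are illustrative; actual fines are set case by case and may be far below the ceiling.

```python
FIXED_CAP_EUR = 35_000_000     # fixed figure quoted in this guide
TURNOVER_SHARE_PERCENT = 7     # share of global annual turnover


def max_prohibited_practice_fine(global_turnover_eur: int) -> int:
    """Upper bound of the fine in whole euros (higher of the two figures)."""
    turnover_based = global_turnover_eur * TURNOVER_SHARE_PERCENT // 100
    return max(FIXED_CAP_EUR, turnover_based)


print(max_prohibited_practice_fine(2_000_000_000))  # 140000000
print(max_prohibited_practice_fine(100_000_000))    # 35000000
```

For an operator with €2 billion in turnover the turnover-based figure dominates, while for a €100 million operator the fixed €35 million figure is the ceiling.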

Vendor differentiation. As AI Act compliance becomes a procurement requirement, platforms that can demonstrate clear risk classification, transparent AI practices, and Article 50-compliant interfaces will be preferred over those that cannot. For hospitality and retail operators evaluating WiFi marketing platforms, AI Act compliance documentation is becoming a standard RFP requirement.

Guest trust and first-party data quality. Transparency obligations under Article 50 — when implemented well — increase guest trust. Guests who understand how AI is being used in their interaction are more likely to engage authentically and provide higher-quality first-party data. This directly improves the accuracy of personalisation models and the ROI of marketing campaigns.

Operational efficiency through unified governance. Organisations that align their GDPR and AI Act compliance frameworks into a single governance structure reduce duplication of effort across legal, IT, and marketing teams. The investment in building this framework pays dividends as the regulatory landscape continues to evolve — the AI Act will be followed by further AI-specific regulation, and a mature governance posture provides a durable foundation.

For transport operators and public-sector organisations, AI Act compliance is particularly important given the heightened scrutiny of AI systems in publicly accessible spaces. Proactive compliance demonstrates accountability to both regulators and the public, supporting broader digital trust objectives.

For further reading on related compliance frameworks, see our guide to PIPEDA Compliance for Guest WiFi in Canada, which covers analogous consent and transparency requirements in the Canadian context.

Key Terms & Definitions

Provider (EU AI Act)

A natural or legal person, public authority, agency, or other body that develops an AI system or general-purpose AI model, or that has an AI system or general-purpose AI model developed, with a view to placing it on the market or putting it into service under its own name or trademark, whether for payment or free of charge.

In a Guest WiFi context, the provider is typically the WiFi marketing platform vendor or the developer of the AI personalisation engine. Providers of high-risk systems carry the heaviest compliance obligations under the Act.

Deployer (EU AI Act)

A natural or legal person, public authority, agency, or other body that uses an AI system under its own authority, except where the AI system is used in the course of a personal non-professional activity.

The venue operator — hotel group, retail chain, stadium operator — is the deployer. Deployers are responsible for Article 50 transparency disclosures and for ensuring that the AI systems they use comply with the Act's requirements, even when those systems are provided by third parties.

Biometric Categorisation System

An AI system for the purpose of assigning natural persons to specific categories on the basis of their biometric data, such as face, movement, gait, posture, voice, appearance, behaviour, or other physiological or behavioural human characteristics or traits.

Relevant for venue operators using camera-based analytics or device fingerprinting in combination with AI. Systems that infer sensitive attributes (race, religion, political opinion) from biometric data are prohibited under Article 5. Systems that perform biometric categorisation without inferring sensitive attributes may be high-risk under Annex III.

Emotion Recognition System

An AI system for the purpose of identifying or inferring emotions or intentions of natural persons on the basis of their biometric data.

Prohibited in workplaces and educational institutions under Article 5. In other venue contexts (retail, hospitality), emotion recognition systems are regulated under Annex III as high-risk and require deployers to inform affected persons under Article 50(3). Vendors marketing 'mood-based' or 'engagement state' features should be assessed against this definition.

Individual Profiling

Any form of automated processing of personal data consisting of the use of personal data to evaluate certain personal aspects relating to a natural person, in particular to analyse or predict aspects concerning that natural person's performance, economic situation, health, personal preferences, interests, reliability, behaviour, location or movements.

AI systems listed under Annex III are always considered high-risk if they profile individuals. WiFi analytics platforms that build persistent individual profiles feeding into automated marketing decisions must be assessed against this definition to determine whether they are high-risk systems.

Social Scoring

The evaluation or classification of natural persons or groups of persons over a period of time based on their social behaviour or known, inferred, or predicted personal or personality characteristics, with a social score leading to detrimental or unfavourable treatment of those persons or groups in social contexts that are unrelated to the contexts in which the data was originally generated or collected.

Prohibited under Article 5. In a WiFi marketing context, this targets AI systems that score guests on behavioural patterns and use those scores to restrict access, withhold offers, or provide inferior service. The key element is detrimental treatment — personalisation that improves the experience for high-value guests is not social scoring unless it simultaneously disadvantages lower-scoring guests.

Captive Portal

A web page or authentication gateway presented to newly connected users of a WiFi network before they are granted broader access to the internet. Used by venue operators to collect guest data, present terms of service, and deliver marketing content.

The primary deployment surface for AI-driven WiFi marketing. AI features on captive portals — chatbots, personalised splash pages, recommendation engines — are subject to Article 50 transparency obligations. The captive portal is also the point at which GDPR consent for data processing is typically obtained.

Conformity Assessment

The process of verifying whether a high-risk AI system complies with the requirements set out in the EU AI Act, including risk management, data governance, technical documentation, transparency, human oversight, accuracy, robustness, and cybersecurity.

Required for high-risk AI systems before they are placed on the market or put into service. For most high-risk systems under Annex III, providers can conduct a self-assessment. For biometric systems, assessment by a third-party notified body may be required, depending on whether harmonised standards have been applied. Venue operators deploying high-risk AI systems need to ensure their vendors have completed the required conformity assessment and can provide the documentation.

DPIA (Data Protection Impact Assessment)

A process required under GDPR Article 35 for processing operations that are likely to result in a high risk to the rights and freedoms of natural persons. The DPIA must describe the processing, assess necessity and proportionality, and identify and mitigate risks.

Required under GDPR for high-risk data processing, including large-scale profiling and systematic monitoring of publicly accessible areas. In the AI Act context, the DPIA should be extended to cover AI system risk management requirements, creating a unified assessment that satisfies both regulatory frameworks.

Article 50 Transparency Obligation

The requirement under Article 50 of the EU AI Act that providers ensure AI systems intended to interact with natural persons are designed so that those persons are informed they are interacting with an AI system, unless this is obvious from the context. Deployers must ensure this disclosure is in place.

The most immediately actionable compliance obligation for venue operators with AI chatbots or conversational interfaces on their captive portals. The disclosure must be clear and upfront — before the interaction begins — not buried in terms and conditions. Applies to all AI conversational systems regardless of risk tier.

Case Studies

A 450-room hotel group operating across five EU member states has deployed an AI-driven chatbot on its captive portal to handle guest check-in queries, restaurant recommendations, and WiFi troubleshooting. The chatbot is powered by a third-party LLM platform. The marketing team also uses a WiFi analytics platform that builds individual guest profiles — including visit history, dwell time by venue area, and inferred demographic segments — to serve personalised promotional offers via the captive portal splash page. The CTO needs to assess the AI Act compliance posture of both systems before the next board meeting.

Step 1 — Classify the chatbot. The AI-driven chatbot is a conversational AI system interacting with natural persons. It falls under Article 50(1) as a Limited Risk system. The immediate action is to implement a clear pre-interaction disclosure on the captive portal UI: 'You are chatting with an AI assistant.' This is a front-end change. The hotel group, as the deployer, is responsible for this disclosure even though the underlying LLM is provided by a third party. Review the vendor contract to confirm the provider's AI Act classification and request their technical documentation.

Step 2 — Classify the analytics platform. The WiFi analytics platform builds individual guest profiles and uses them to serve personalised offers via automated decisions. The key question is whether this constitutes individual profiling under Annex III — automated processing of personal data to assess preferences, interests, behaviour, and location. If yes, the system is high-risk. Request the vendor's AI Act classification documentation. If the vendor classifies the system as minimal risk, obtain their written rationale and assess whether it is defensible. If the system is high-risk, begin planning for conformity assessment compliance ahead of the August 2026 deadline.

Step 3 — Audit the inferred demographic segments. If the analytics platform uses AI models to infer demographic segments such as age bracket, gender, or nationality, assess whether any segment amounts to biometric categorisation of sensitive attributes under Article 5. That prohibition covers inference from biometric data of race, political opinions, trade union membership, religious or philosophical beliefs, sex life, or sexual orientation, so check both the data source and whether a segment acts as a proxy for one of those attributes. If the segmentation is based on declared data (loyalty programme membership, explicitly provided preferences) rather than AI inference from behavioural signals, it is lower risk. If it is AI-inferred from behavioural signals, it requires careful legal review.

Step 4 — Align with GDPR. Ensure the hotel group's privacy notice reflects AI-driven processing and the Article 50 disclosures. Review the lawful basis for the analytics processing under GDPR Article 6. If the processing is based on legitimate interests, conduct a legitimate interests assessment that accounts for the AI Act risk classification. Update the DPIA to cover both GDPR and AI Act risk dimensions.

A national retail chain with 120 stores across Germany, France, and the Netherlands is evaluating a new WiFi marketing platform that includes an AI feature described by the vendor as 'mood-based personalisation' — the system analyses the speed and pattern of a guest's captive portal interactions to infer their 'engagement state' and adjusts the promotional content served on the splash page accordingly. The IT director needs to assess whether this feature is permissible under the EU AI Act.

Step 1 — Identify the AI practice. The 'mood-based personalisation' feature analyses behavioural signals (interaction speed and pattern) to infer an 'engagement state' — which is functionally an emotional or psychological state. This is emotion inference.

Step 2 — Apply the Article 5 prohibition test. Article 5 prohibits emotion inference in workplaces and educational institutions. A retail store is not a workplace for the guest, so this specific prohibition does not apply to the guest population in the retail context. However, the feature may still be prohibited under Article 5(1)(a) if it deploys manipulative techniques to distort behaviour and impair informed decision-making, causing significant harm. The use of inferred emotional state to serve manipulative promotional content — targeting a guest identified as 'frustrated' with an urgency-based offer, for example — is likely to fall within this prohibition.

Step 3 — Assess the GDPR implications. Inferring emotional state from behavioural data constitutes processing of personal data for profiling purposes under GDPR. The lawful basis for this processing must be assessed. Legitimate interests is unlikely to be a defensible basis for emotion inference used for marketing purposes. Explicit consent is the most appropriate basis, but the consent mechanism must be specific and granular — consent to WiFi access does not constitute consent to emotion inference.
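The point about granular consent can be illustrated with a per-purpose consent check. This is a sketch under stated assumptions: `ConsentRecord`, `may_process`, and the purpose names are hypothetical and do not reflect any particular consent management platform's API.

```python
from dataclasses import dataclass, field
from typing import Set


@dataclass
class ConsentRecord:
    guest_id: str
    # Each purpose is consented to separately; nothing is implied by another.
    purposes: Set[str] = field(default_factory=set)


def may_process(record: ConsentRecord, purpose: str) -> bool:
    """Allow processing only for a purpose the guest explicitly accepted."""
    return purpose in record.purposes


# Hypothetical guest who accepted the WiFi terms and nothing else.
guest = ConsentRecord("guest-123", {"wifi_access"})

print(may_process(guest, "wifi_access"))
print(may_process(guest, "emotion_inference"))
```

Modelling consent as a set of named purposes, rather than a single accepted/declined flag, is what prevents WiFi acceptance from being read as consent to anything else.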

Step 4 — Recommendation. Do not deploy the 'mood-based personalisation' feature without a detailed legal assessment. The risk of Article 5 violation — specifically the manipulation prohibition — is material. Request the vendor's legal analysis of the feature's AI Act classification. If the vendor cannot provide a defensible classification, treat the feature as prohibited and do not activate it. Standard behavioural personalisation based on objective metrics (visit frequency, time of day, declared preferences) is permissible and carries significantly lower regulatory risk.
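The four steps above can be condensed into a triage helper for vendor features. This is only a sketch of the reasoning in this scenario, not a substitute for legal review: the `FeatureAssessment` fields and the outcome labels are hypothetical names introduced for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class FeatureAssessment:
    infers_emotional_state: bool          # Step 1: what the system actually does
    used_in_workplace_or_education: bool  # Step 2: context-specific prohibition
    vendor_classification_provided: bool  # Step 4: defensible vendor analysis?


def triage(a: FeatureAssessment) -> str:
    """Map an assessment onto an illustrative deployment decision."""
    if not a.infers_emotional_state:
        return "standard_review"
    if a.used_in_workplace_or_education:
        return "treat_as_prohibited"
    if not a.vendor_classification_provided:
        return "treat_as_prohibited"
    # Emotion inference on guests with a vendor analysis in hand still needs
    # a detailed legal assessment (manipulation prohibition, GDPR basis).
    return "legal_assessment_required"


mood_feature = FeatureAssessment(
    infers_emotional_state=True,
    used_in_workplace_or_education=False,
    vendor_classification_provided=False,
)
print(triage(mood_feature))
```

Note that no branch returns an unconditional "approved" for an emotion-inferring feature; the most permissive outcome is still a legal assessment, which mirrors the recommendation in Step 4.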

Scenario Analysis

Q1. Your venue's WiFi marketing platform vendor has just released a new feature called 'Visitor Sentiment Scoring' that analyses the speed, sequence, and hesitation patterns of a guest's captive portal interactions to assign a sentiment score (positive, neutral, frustrated) and adjust the promotional content served accordingly. The vendor's documentation describes this as 'behavioural analytics' rather than 'emotion recognition'. As the IT director, how do you assess this feature's EU AI Act compliance status, and what actions do you take?

💡 Hint: Focus on the technical function of the system, not the vendor's marketing language. Ask: what is the system actually doing? Is it inferring an emotional or psychological state from behavioural signals? Then apply the Article 5 prohibition test and the Article 50 transparency test.

Recommended Approach

The feature is functionally an emotion recognition system regardless of the vendor's labelling. Analysing interaction patterns to infer 'frustration' or 'positive sentiment' is emotion inference. The first step is to apply the Article 5 prohibition test: is this system being used in a workplace or educational institution? If the venue is a retail store or hotel, the Article 5 workplace prohibition does not apply to guests. However, the manipulation prohibition under Article 5(1)(a) may apply if the system uses the inferred sentiment to serve manipulative content — for example, targeting a 'frustrated' guest with an urgency-based offer. The second step is to assess whether the system falls under Annex III as an emotion recognition system, which would make it high-risk. The third step is to request the vendor's written AI Act classification and legal analysis. If the vendor cannot provide a defensible classification, do not activate the feature. Document your assessment rationale regardless of the outcome.

Q2. A stadium operator running a 60,000-capacity venue uses Purple's Guest WiFi platform to collect first-party data at events. The marketing team wants to deploy an AI chatbot on the captive portal to answer fan queries about facilities, merchandise, and upcoming events. The chatbot is powered by a third-party LLM API. The venue's legal team asks: what are the Article 50 obligations, who is responsible for compliance, and what does the implementation look like in practice?

💡 Hint: Identify the deployer, the provider, and the applicable Article 50 scenario. Then specify what the disclosure must look like and when it must appear.

Recommended Approach

The stadium operator is the deployer; the LLM API provider is the provider. Article 50(1) requires that guests are informed they are interacting with an AI system. The Act addresses that duty to the provider, which must design the system so the notice can be given, but the stadium operator controls the captive portal where the conversation takes place and should treat delivery of the disclosure as its own responsibility. The implementation requires a clear disclosure on the captive portal UI (a badge or introductory message such as 'You are chatting with an AI assistant') displayed before the first message is sent. This is a front-end change to the captive portal template. The disclosure must be explicit and upfront; it cannot be buried in the terms of service. The LLM provider has its own obligations as a provider (technical documentation, instructions for use), but the operator cannot assume that the provider's measures will surface the notice in the venue's portal. Additionally, the stadium operator must ensure the chatbot's data processing complies with GDPR: the lawful basis for processing any personal data shared in the conversation must be established, and the privacy notice must reflect the AI-driven processing.
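One way to make the "disclosure before the first message" rule hard to bypass is to enforce it in the chat session logic, not only in the page template. The sketch below assumes a hypothetical `PortalChatSession` wrapper and a stand-in `send_to_llm` callable; it does not represent any real captive portal or LLM vendor API.

```python
from typing import Callable, List, Tuple

AI_DISCLOSURE = "You are chatting with an AI assistant"


class PortalChatSession:
    """Hypothetical chat session that refuses messages until disclosure is shown."""

    def __init__(self, send_to_llm: Callable[[str], str]) -> None:
        self._send_to_llm = send_to_llm  # stand-in for the third-party LLM call
        self.disclosure_shown = False
        self.transcript: List[Tuple[str, str]] = []

    def open(self) -> str:
        """Render the disclosure; the portal UI would display the returned text."""
        self.disclosure_shown = True
        self.transcript.append(("system", AI_DISCLOSURE))
        return AI_DISCLOSURE

    def send(self, message: str) -> str:
        if not self.disclosure_shown:
            raise RuntimeError("AI disclosure must be shown before the first message")
        reply = self._send_to_llm(message)
        self.transcript.append(("guest", message))
        self.transcript.append(("assistant", reply))
        return reply


# Usage with a stub in place of the real LLM API.
session = PortalChatSession(lambda text: f"(stub reply to: {text})")
print(session.open())
print(session.send("Where is the nearest merchandise stand?"))
```

Recording the disclosure as the first transcript entry also leaves an audit trail showing that the notice preceded the conversation.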

Q3. A retail chain's WiFi analytics platform has been building individual guest profiles for two years, combining WiFi session data (device MAC address, dwell time, visit frequency, location within store) with CRM data (purchase history, loyalty programme tier) to feed an AI model that makes automated decisions about which promotional offers to serve each guest at the captive portal. The chain's new compliance lead has been asked to assess whether this system is high-risk under the EU AI Act's Annex III individual profiling provision. What is the assessment methodology and likely outcome?

💡 Hint: Apply the Annex III individual profiling test: is the AI system performing automated processing of personal data to assess aspects of a person's preferences, interests, behaviour, or location? Then consider whether the automated decisions are significant enough to trigger the high-risk classification.

Recommended Approach

The assessment methodology follows three steps. First, confirm that the system is an AI system rather than a simple rules-based engine: if the promotional decision is made by a machine learning model and not by deterministic rules, it is an AI system. Second, apply the Annex III individual profiling test: the system processes personal data (WiFi session data, CRM data) to assess individual preferences, interests, behaviour, and location, which meets the definition of individual profiling. Third, assess whether the system falls under a listed Annex III use case. The most relevant category is 'access to and enjoyment of essential public and private services', which includes AI systems used to evaluate eligibility for services. Whether promotional offer decisions constitute 'access to services' is a grey area; if the AI system can withhold from a guest a promotional offer that materially affects their purchasing decision, regulators may regard that as significant. The likely outcome is that the system should be treated as potentially high-risk and a formal assessment conducted. The chain should obtain the platform vendor's AI Act classification, initiate a combined DPIA and AI risk assessment, and begin planning for conformity assessment ahead of the August 2026 deadline.
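The three-step methodology can be written down as a small decision function so that each assessment is recorded consistently. This is a sketch under stated assumptions: the `SystemProfile` fields and outcome labels are hypothetical, and the handling of the "access to services" grey area reflects the cautious reading in this answer, not a settled regulatory position.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class SystemProfile:
    uses_ml_model: bool          # Step 1: ML model vs deterministic rules engine
    profiles_individuals: bool   # Step 2: personal data used to assess a person
    # Step 3: True/False once resolved, None while the question is still open.
    affects_access_to_services: Optional[bool]


def assess(profile: SystemProfile) -> str:
    """Return an illustrative outcome label for the three-step assessment."""
    if not profile.uses_ml_model:
        return "rules_engine_out_of_scope"
    if not profile.profiles_individuals:
        return "no_individual_profiling"
    if profile.affects_access_to_services is False:
        return "document_rationale"
    # True or unresolved: treat cautiously and run a formal assessment.
    return "potentially_high_risk"


retail_offers_engine = SystemProfile(
    uses_ml_model=True,
    profiles_individuals=True,
    affects_access_to_services=None,  # grey area, not yet resolved
)
print(assess(retail_offers_engine))
```

Using `None` for the unresolved case keeps "we have not decided" distinct from "we decided no", so an open question defaults to the more cautious outcome.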